Technology

Specifications Aren’t Pretty…But They Are Necessary

Over 22 years ago, my first project at CableLabs was to prepare the DOCSIS® 1.0 Radio Frequency Interface (RFI) specification as a contribution to the International Telecommunication Union Telecommunication Standardization Sector (ITU-T). After working in various other industries, I found telecommunications to be an exciting new world. During my first week, I started a list of acronyms at the back of my notebook, and it was only the first sip of the alphabet soup I was about to devour.

CableLabs’ specifications were a far cry from the two-page technical bulletins I had previously prepared. They were under strict document management control, with engineering changes (ECs) processed against issued versions to revise the specs. Learning the entire process took time, even with the help of great coworkers.

I also learned that CableLabs’ specifications are innovation-focused and designed to get products to market quickly. Interoperable devices that adhere to common specifications enable consumer choice, widespread deployment of new technologies, and lower per-unit cost due to industry-scale economics.

CableLabs’ specifications are driven by the collaborative working relationships between members, vendors and CableLabs’ staff within project-specific working groups. They address most aspects of cable access networks, including cable modems, set-top boxes, cable modem termination systems (CMTSs), remote-PHY and remote-MACPHY devices, optical devices, telephony and aspects of mobile base stations—all of which operators use to provide their customers with a wide range of products and service offerings.

Some advantages of current products built to our specifications include but are not limited to:

- Operator choice, in addition to price competition within a given marketplace

- Increased speed and security, as well as backward compatibility

- Advancements in network devices (e.g., wired, wireless, security) and their related functionality

- Simpler debugging in the lab and in the field, because specifications define the expected behavior

As part of a publication support team working closely with engineering, we recently published the DOCSIS 4.0 suite of specifications, which will allow cable operators to ultimately achieve speeds of 10 Gbps downstream and 6 Gbps upstream. CableLabs has come a long way in over three decades! It’s been a privilege to see how many advancements CableLabs, together with its members and vendors, has produced in that time, and just how much our efforts have changed the telecommunications world.

Do my four grandkids know and understand how CableLabs’ specifications development has helped them recently with online learning, or enabled my youngest granddaughter’s participation in her virtual kindergarten graduation—all by creating and supporting the best broadband services available? No, but that’s OK because we’re here working to continuously develop an innovative foundation for an ever-improving future for them.


Power your Product Roadmap with Perceptive Technologies

Did you get it? Are you sure? Missed and mixed signals are common problems with human interpretation, and perceptive technologies have the power to correct both. New advancements in perceptive technologies can help you design and deliver more compelling and personalized experiences, from entertainment to engagement, that not only track how your end users are responding in real time but also adjust accordingly.

Why should you care? Because your competitors are already incorporating emotion detection and personalized responses into their experience design. Customer service long ago adopted heat maps for callers to prioritize and route complaints, but there are less obvious and more compelling ways in which perceptive technologies can help advance your brand and product roadmap.

Small but Mighty

Sensors are the foundation of perceptive technologies and generate signals for real-time or asynchronous analysis. Sensors fall into two basic categories: hard sensors and virtual sensors. The distinctions between these types and their use cases are significant, and the rapid emergence of new classes of virtual sensors will have a major impact on applications.

Hard Sensors

Hard sensors measure the physical attributes of an object. They can measure weight, vibration, pressure, touch, movement and so on. Lights in a room might sense your presence, for example, and dim or brighten accordingly. Soap and paper towel dispensers that sense your hand are quite common. Parking sensors in congested areas alert drivers to open spots, and you’ve probably also heard your car chirp at you when an obstacle is detected as you try to change lanes.

Common functions of hard sensors include the following:

- Environmental sensors measure light values, temperatures, air quality and so on

- Chemical sensors measure allergens and toxicants in the air

- Presence sensors measure the location and movement of objects

- Biometric sensors measure physiological attributes such as heart rate, blood pressure and glucose levels

Virtual Sensors

Virtual sensors, also referred to as soft sensors, provide a layer of abstraction: they aggregate one or more sensor data streams and produce a derived output. They are powered by simple software analytics, change detection algorithms and other feature detection techniques, and they can recognize well-known patterns as well as atypical behaviors. Virtual sensors could also be extended to analyze voice recordings in real time to “hear” anger in someone’s voice, or to analyze real-time video to “see” sadness in a detected frown.
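To make the idea of a virtual sensor more concrete, here is a minimal Python sketch under stated assumptions: two hypothetical hard-sensor streams (`heart_rate` and `skin_conductance`) are fused by a weighted average into one derived signal, and a simple z-score test against a rolling baseline stands in for the change detection algorithms described above. The class name, weights, window size and threshold are illustrative choices, not a description of any specific product.

```python
from collections import deque
from statistics import mean, pstdev


class VirtualSensor:
    """Hypothetical soft sensor: fuses several hard-sensor readings into one
    derived value and flags values that deviate from a rolling baseline."""

    def __init__(self, weights, window=20, threshold=3.0):
        self.weights = weights               # per-stream weights (assumed values)
        self.history = deque(maxlen=window)  # rolling baseline of derived values
        self.threshold = threshold           # z-score above which we report a change

    def fuse(self, readings):
        # Weighted average of the named input streams -> one derived signal
        total = sum(self.weights.values())
        return sum(self.weights[k] * readings[k] for k in self.weights) / total

    def update(self, readings):
        value = self.fuse(readings)
        change = False
        if len(self.history) >= 5:  # wait for a minimal baseline first
            mu = mean(self.history)
            sigma = pstdev(self.history) or 1e-9  # avoid division by zero
            change = abs(value - mu) / sigma > self.threshold
        self.history.append(value)
        return value, change


sensor = VirtualSensor({"heart_rate": 0.7, "skin_conductance": 0.3})
for hr in [70, 72] * 5:  # steady baseline readings
    sensor.update({"heart_rate": hr, "skin_conductance": 2.0})
print(sensor.update({"heart_rate": 110, "skin_conductance": 8.0}))
```

A weighted average and a z-score are about the simplest fusion and change detection methods available; real systems would likely use richer models, but the structure (several inputs, one derived output, a comparison against a learned baseline) is the same.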

Emotion Sensing—Powered by AI

The human emotional state is never static, which makes sensing an evolving emotional state highly complex. Key inputs are multi-modal signals derived from speech and image recognition, natural language understanding, biometrics and other AI techniques that detect abnormalities relative to established baselines. Combined in analysis, these signals provide subtle cues about a person’s intentions and state of mind, as well as their physical, psychological and emotional well-being. Want to detect sarcasm in your teenage children? New solutions might soon help you.

Multi-modal input data types include the following:

- Spoken words (natural language processing)

- Voice tone (prosody)

- Facial recognition (emotion)

- Hand gestures

- Gaze and focus

- Body language/posture

- Dynamic physical behavior

- Spatial proximity

- Excitement and stress levels (heart rate, pupil dilation)

Emotion sensors are already on the market. Affectiva, for example, is a leading provider of “Emotion as a Service.” The company provides Software Development Kits (SDKs) that analyze facial expressions, word choice and vocal patterns to indicate emotions such as sadness, anger, happiness, confusion and so on. Affectiva has enabled the next generation of immersive experience developers to create authentic and emotionally rich games through its integration with the Unity platform.

Emotional Disambiguation

Let’s go back to the notion of sarcasm. The same comment delivered in the same way could mean very different things from different speakers or even the same speaker in different moods. Context is critical. An individual’s life experience, use of vocabulary, implicit and explicit social cues, biases, psychology, cultural norms and non-universal nuances all shape context.

AI systems are getting smarter in matters of the human psyche. Automated systems will eventually be able to project empathy, and simple matters such as detecting a genuine versus a sarcastic “thanks” will be possible too. Keep in mind that although humans can exhibit defensive behavior, AI systems won’t. Think you adequately resolved a crucial disagreement? Sensors can help augment your understanding of the other party and reduce interpretive error.

Applications of Perceptive Technologies

Conversational AI

The current era of communication with virtual assistants was ushered in by chatbots and the convergence of machine learning, speech recognition and natural language processing. Today’s most commonly referenced voice assistants, Siri and Alexa, are one-dimensional: they are designed for specific kinds of interactions or tasks, such as finding directions, answering simple queries like weather forecasts, placing orders and so on. They are limited.

As perceptive technologies mature, expect new immersive experiences to incorporate conversational AI techniques that can sense intentions and emotions in a real-time context and respond appropriately. New experiences on the horizon will include virtual scholars teaching seminars, virtual therapists available 24x7 and virtual personal shoppers who know your preferences and real-time body measurements.

Active Storytelling – Story-Specific AI Agents

Today, you might sit back and watch a story unfold. In the future, the story will choose its flow and finale based on how you respond and appear to be feeling. Sensors that read the audience will help direct an AI agent to alter the story experience. AI agents continue to evolve in their ability to mimic individuals (like actors and celebrities) and generate two-way conversation, although fidelity of impersonation is still a work in progress.

“What a Finale!”—A Personalized Surprise Twist

Game designers have been introducing AI agents as non-player characters for some time. Incorporation of emotional intelligence into character design is further evolving, and the University of California at Santa Cruz’s Expressive Intelligence Studio is at the forefront.

Intelligent Assistants and Companion Robots

Smart speakers hit their stride in 2017. Today, one in six Americans owns one. Although it was spoofed on Saturday Night Live, there is truth to Alexa helping seniors stay socially connected. The intuitive voice-first interface with its ever-evolving set of services—entertaining games and content, on-demand video collaboration, Alexa-to-Alexa messaging and helpful reminders—significantly enhances their daily lives. Bloomberg has reported that Amazon is working on a mobile Alexa, a sort of social robot that would enable more personalized experiences.

The next generation of personal social companion robots is likely to sense your state of mind, learn your likes and dislikes, monitor your daily routines, perform basic household chores and even entertain you. In some markets, owners have anthropomorphized their robots. Japan’s population has taken to “physical” robotic companions such as Paro and Kirobo, which can forge emotional connections with people of all ages and help avert feelings of isolation and depression. Cozmo, the charming and playful toy robot produced by Anki, was designed to change its behavior and grow with its owner as it forges an emotional attachment.

Forging Real Connections with Social Companion Robots

Predictive-Sensitive Homes

How are you feeling? Your house may soon be able to tell you. With an integrated array of sensors and cloud-based machine-learning algorithms, predictive-sensitive homes will monitor the baseline behaviors of you and your loved ones. Through a combination of sensor types, important changes such as reduced mobility, early symptoms of dementia, anger and fear, anxiety and depression will be detected earlier and more accurately than through self-reporting or human observation. Environmental, floor and behavioral sensors will be able to mood wash the home to reflect or influence your state of mind.

Product and Talent Implications

Missed signals won’t entirely be a thing of the past, but sensor-stimulated empathy will undoubtedly be a thing of the future. Building perceptive technologies into your product roadmap will require a considerable amount of user testing and adoption smoothing. Acceptance of these types of technologies and their implications varies considerably across demographic and psychographic cohorts and use cases. It will also be critical to articulate the value of monitoring and how the generated data will be used, so that end users embrace new solutions.

Sophisticated product teams are already bolstering their roadmaps with sensors and sensor-driven data, powered by new networks and platform capabilities. Working with perceptive technologies early and training your systems to get smarter with them will give your company an early advantage. Seek out specialists in AI, psychographics, mechatronics, human-robot interaction design, and privacy and security, as well as futurists, to power your product roadmap with perceptive technologies. Interested in collaborating with us on this topic? Reach out to the CableLabs Market Development department.

See how some of these perceptive technologies might come to life in the 2017 CableLabs vision-casting video The Near Future: A Better Place.

In the next installment of our Emerging Technology Timeline, we will discuss how professions will be reimagined in a world where emerging technologies are dramatically impacting companies, customers and employees.

About the Authors

Anju Ahuja

In our ever-evolving marketplace, Anju believes that taking a “Future Optimist” approach to solving challenging problems manifests solutions that benefit both the individual and the enterprise. Today Anju takes this approach to answer questions for emerging technologies like AR, VR, MR, AI and how they will work with traditional media, communications and the broader global cable industry. As Vice President of Market Development and Product Management, Anju leads the team whose charge is to enable transformative end user experiences, and revolutionize the delivery of new forms of content, while also unleashing massive monetization opportunities. Anju also serves on the Board of Directors of Cable & Telecommunications Association for Marketing (CTAM) as well as the President’s Advisory Council of Northern California Women in Cable Telecommunications (WICT). She is a Silicon Valley Business Journal Women of Influence 2018 honoree.

Martha Lyons

Inventor, futurist and technologist Martha Lyons is the Director of Market Development at CableLabs. With a wide-ranging career at Silicon Valley high-tech companies and non-profits, Martha has over two decades of experience in turning advanced research into reality. A leading authority in the initiation and development of first-of-a-kind solutions, she currently focuses on identifying industry-leading opportunities for the cable industry. She is personally interested in how advances in intelligent agents, blockchain, bioengineering, novel materials, nanotech and holographic displays will create opportunities for disruptive innovation, to the delight of end users, in industries ranging from healthcare, retail and travel to media and entertainment. When Martha is not inventing the future, she enjoys disconnecting from technology and spending time outdoors, preferably near a body of water.


Immersive Media: Emerging Technology Timeline

The first installment of this series is "Emerging Technologies: New and Compelling Use Cases," and the second is "Catalysts and Building Blocks: Emerging Technology Timeline."

The Content Game vs. The Experience Game

Are you in the content game or are you in the experience game? This is not a philosophical question. This is about goal-setting for you and your team, given the new wave of emerging technologies that are transforming experience design. We believe content creators, from video to game design, and virtually every type of storyteller in between, will need to transform themselves into immersive experience designers.

Why? Because the future of media and entertainment will no longer be about consumption alone. It will be about immersion and interactivity. Your audience will be more than witnesses to your work; they will be part of your experience. If this sounds abstract, here’s a glimpse of what is already changing in part 3 of our Emerging Technology Timeline series.

Coming Soon to an Experience for You

From a practical point of view, the way in which we capture, process, present, and experience content will be changing and evolving as well. Here are five things to watch:

- Immersive story-building engines, which enable the creation of new sensory environments and curated immersive content, will be interactive, adaptive, and enable non-linear storylines

- New form factors for displays — flexible, transparent, wearable, and/or holographic — will emerge

- Ultra-high-definition panoramic cameras will enable the capture of 360-degree and light field video streams

- The ability to distribute and display light fields with six degrees of freedom (6DOF) will enable users to move around naturally in VR

- Instantaneous feedback and interaction within mixed reality environments will be powered by haptics and ambient types of human-computer interfaces

Your Living Room is About to Change, Too

The aforementioned technologies, and many more, will enhance and change the way we engage, entertain, create, communicate and share. Expect a new set of platforms and advanced authoring tools to enable next-generation media and entertainment.

Remote will feel as close as next door, and network improvements will increase responsiveness and reduce lag, creating a seamless exchange. Enhanced interactivity between the end user, the experience and the environment will make even the most mundane information come to life, increasing retention and usability.

Virtual Family Dinner

Your living room will become an experience zone, in which emerging technologies such as holodecks will enable you to experience virtual travel and exploration, to participate virtually in extreme sports, to train and practice in simulated real-world environments, and to virtually transport yourself to environments of all kinds.

Your Walls Will Be "Windows"

Transparent displays will exist within walls, shelving, doors, and windows in your home. Subtle, glass-like and tinted, they will transform into immersive displays. They will provide "windows" for experience sharing via telepresence.

You’ll be able to visit exotic locations without leaving your living room, and dine or socialize with faraway friends. Those with disabilities and reduced mobility will be freed from the physical barriers that today restrict travel and experiences beyond their four walls.

Virtual Wine Tasting

The Emergence of Large-scale Experience Zones

Theme parks are no longer just about rides. They are becoming layered experience zones, with layers for cohorts of different ages and interests. Movie theaters are embracing VR. Game companies, which pride themselves on sophisticated physics engines and interactivity, are working with movie studios to immerse viewers as well. Look no further than the launch of Disney's StudioLAB as an example of a studio re-imagining films as immersive multi-platform experiences.

Immersive Monopoly

Better than Box Seats

Wish you were on the 50-yard line at the Super Bowl...every year? New technologies will mimic the live experience with fidelity and even allow you to choose your vantage point and zoom in on the action. Advertising in this new world will be designed with different parameters and for increased participation by the audience in a shared experience versus a static ad view.

"Here and Now" vs. "There and Then"

Feeling nostalgic? Wondering what it was like at a nightclub in the roaring twenties? The "Spotify of the Future" will incorporate richer elements of the musical experience beyond audio. Experience creators will get their chance to revive history and immerse end users through simulated time travel.

Take a tour of the fashions of the time, walk the streets and observe the environment. Adding other sensory-rich elements to these experiences, such as smells and textures, is sure to enhance the journey, and we believe that those capabilities will be on the horizon soon too.

The Roaring Twenties – a "Vintage" Experience

Educators, Put Down the Chalk, and Turn This On!

Educators, emerging technologies will shape your teaching too. New holographic displays will enable educators and experts to create rich and immersive teaching experiences and deliver personalization to students within a shared lesson plan.

How? "Storytelling" tools and new platforms will facilitate development and delivery. Students will study space and geography while immersing themselves in nuanced environments. Companies such as Pearson are paving the way with early trials in this area. And in case you missed it, last year Light Field Lab announced it was developing glasses-free holographic TVs.

See how your home might evolve in the 2016 CableLabs vision-casting video Near Future.

Leveling Up in the Experience Game

This revolution will not just affect end users and their experiences; it will impact the entire value chain of experience design, from development and delivery through monetization, as well as the ecosystem of storytellers, developers, studios, distributors and advertisers.

Why are we tracking this market with so much fervor? We believe the network will power the platform of the future, and we are developing the required capabilities for end users and developers. From the gaming ecosystem to healthcare and education, we are working with developers with advanced experience roadmaps to enable their success and to transform your home into a next-generation experience zone.

Visit the Emerging Technology Timeline to learn more. Reach out to the CableLabs Market Development team to collaborate. In our next installment of this series, we will discuss the impact of perceptive technologies in transforming end user experiences.

About the Authors

Anju Ahuja

Martha Lyons

Technology

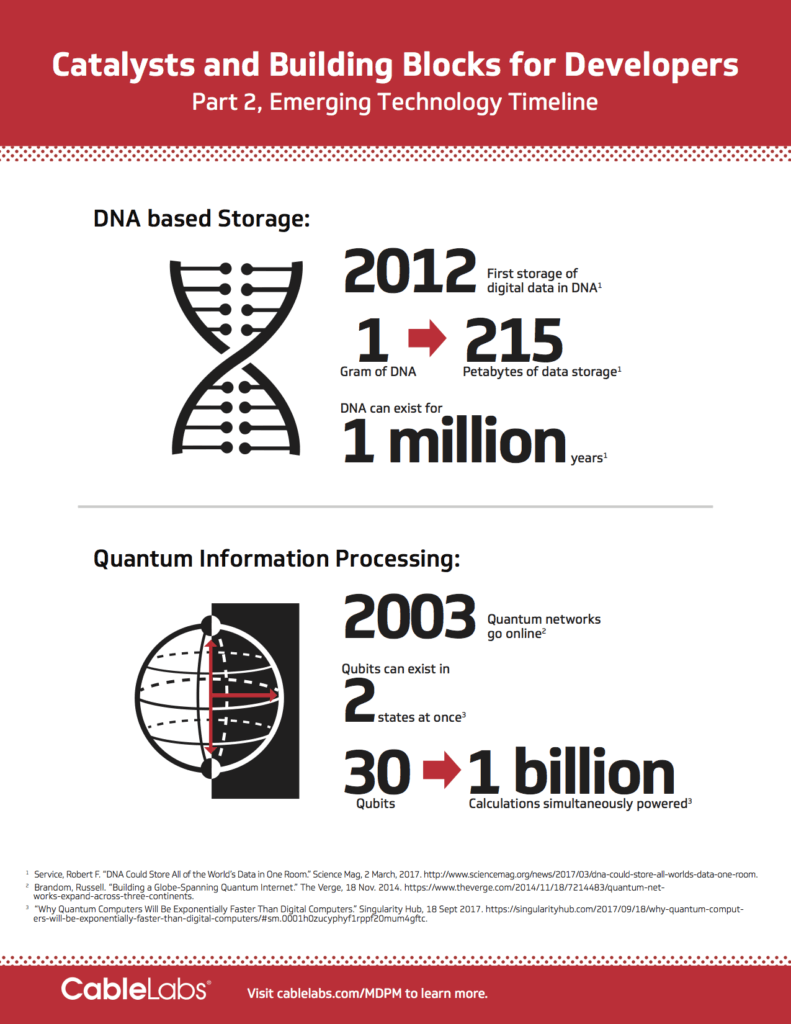

Catalysts and Building Blocks: Emerging Technology Timeline

App Developers—welcome to your future. Significant advances in the sciences and quantum computing will allow you to create and deliver breakthrough experiences in a highly secure fashion more quickly. While the collapse of Moore's law may worry some, advances in fundamental science and technology will continue to enable exponential shifts in the solutions landscape. Near instantaneous processing speeds and intelligence at the edge will allow you to accomplish interactivity and deliver advanced services that would have previously been clunky at best or altogether impractical. The era of enhanced productivity powered by the network is here.

In part 2 of our Emerging Technology Timeline series, we continue along the innovation arc, discussing the implications of a host of new technologies and solutions built upon them, which are expected to drive fundamental shifts in the economy and society.

Some of what intrigues us:

- Bending the rules, literally, with metamaterials. Human-engineered metamaterials supplementing and eventually supplanting natural resources to enable game-changing results in a broad range of industries, including but not limited to communications, healthcare, the military and athletic apparel.

- A more secure world. New quantum information processing (QIP) techniques that combine computer science with quantum physics to accelerate information processing, including the processing of complex algorithms where classical computing would fail.

- Synthetic DNA based data storage. Biologically inspired, synthetic DNA, becoming the archival medium of choice to store the tsunami of data created year after year.

- Self-organizing networks: Self-organizing “SWARM” networks, inspired by nature, which can learn to optimize resources on their own.

Not impressed yet?

The power is actually combinatorial. Multiple catalysts combined in new ways will change your capacity to innovate and ignite activity at all levels. How do we know? It’s happened again and again. Look no further than last year for a prime example: in 2017, industries began in earnest to introduce voice interfaces into their solutions and AI-based personal assistants into their customer support functions. Powered by advances in natural language processing and speech recognition, Google and others now achieve speech recognition accuracy rates above 95%. Imagine what will come from coupling these advances with new emotion detection algorithms that sense everything from inflections in voice to non-verbal facial cues.

What catalysts will stand out in the coming years? Some of the Catalysts & Building Blocks detailed below are merely the beginning.

Quantum Information Processing

Quantum Information Processing (QIP) will allow app developers to solve problems faster than ever before. Based on the novel encoding of data in qubits, QIP can solve combinatorial optimization problems much faster (and in an unconventional fashion) than classical information processing. With quantum technologies, application developers will be able to build faster machine learning algorithms and analyze streams of data instantaneously. While qubits are inherently unstable and their applications are in the early days of development, we believe the long-term potential is significant. The recent implementation of a quantum neural network (QNN) prototype by NTT, operating at room temperature, shows how QNNs can solve problems thousands of times faster than classical computers. Quantum computers are also expected to enable significant advances in real-time, secure cryptographic key exchange. With capabilities like these, new real-time end user and business-oriented applications that embed complex security algorithms and artificial intelligence can be brought to market.

Biological Storage

Worried about the explosion of data and our ability to store it, replicate it at the edge, and secure it? Biology might have the answer. Biologically inspired storage systems will be able to hold petabytes of data encoded in “nucleotides” on a single gram of synthesized DNA. The actual numbers are astonishing: theoretically you could store one exabyte of data (equivalent to one billion gigabytes) per cubic millimeter. As a stable medium, DNA is also capable of preserving data for hundreds of thousands of years with minimal power.
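The idea of data “encoded in nucleotides” rests on simple arithmetic: DNA has four bases, so each nucleotide can carry two bits, and one byte maps to four bases. The Python sketch below assumes a naive, hypothetical mapping (A=00, C=01, G=10, T=11) purely for illustration; practical DNA storage schemes add error correction and avoid long runs of the same base, which this sketch does not attempt.

```python
# Hypothetical 2-bits-per-nucleotide mapping, for illustration only.
# Real DNA storage codes add redundancy and avoid repeated-base runs.
BASES = "ACGT"  # A=00, C=01, G=10, T=11


def encode(data: bytes) -> str:
    """Turn each byte into four bases, most significant bit pair first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def decode(strand: str) -> bytes:
    """Reverse encode(): every four bases become one byte."""
    if len(strand) % 4:
        raise ValueError("strand length must be a multiple of 4")
    values = [BASES.index(base) for base in strand]
    return bytes(
        (values[i] << 6) | (values[i + 1] << 4) | (values[i + 2] << 2) | values[i + 3]
        for i in range(0, len(values), 4)
    )


print(encode(b"Hi"))  # "CAGACGGC"
```

Here `encode(b"Hi")` yields the eight-base strand `CAGACGGC` ("H" is 0x48 and "i" is 0x69), and `decode` recovers the original bytes. Two bits per base is the theoretical ceiling that density estimates like the ones above build on.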

Looking for more secure encryption of data? DNA-encoded sequences may hold the key to a new paradigm of secure data storage, including storage of cryptographic keys exchanged using quantum computers. While core problems remain, such as the cost of writing DNA and the difficulty of accessing it efficiently, industry and academia are hard at work to bring DNA-based storage into the mainstream.

Metamaterials

Countless new solutions and capabilities will be enabled by metamaterials, which are engineered to exhibit chemical and physical properties not found in nature. These materials, which will be stronger, more flexible and lighter than ever before, will enable advances in antennas and communications technologies, immersive displays, bio-sensing capabilities and stealth “cloaking” applications. Recall Harry Potter’s invisibility cloak: similar “cloaking capabilities” will become a commercial reality. Healthcare applications and services will also be built on biosensors composed of sensitive “metasurfaces” that can detect biomarkers for the early detection of certain cancers.

Imitation in Action

UC Berkeley’s Swarm Lab is examining what lessons from nature can inform future theories of data flow and network efficiency. Based on the swarm behavior of animals such as ant colonies, herds and flocks of birds, swarm networking aims to enable the network itself to make better real-time, autonomous decisions, improving efficiency and quality of service (QoS).
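One well-known way to turn the ant analogy into a routing rule is pheromone reinforcement, as in ant colony optimization: paths that carry more traffic at lower cost accumulate more “pheromone,” which in turn attracts more traffic, while evaporation lets stale preferences fade. The Python sketch below is our own deterministic, expected-value simplification of that idea, not a description of the Swarm Lab’s work; the function name, parameters and path costs are hypothetical.

```python
def reinforce_paths(lengths, rounds=50, evaporation=0.1, deposit=1.0):
    """Expected-value sketch of ant-colony-style pheromone reinforcement.

    lengths: cost of each candidate path (e.g. hop count or latency).
    Returns the final pheromone level of each path.
    """
    pheromone = [1.0] * len(lengths)  # start with no preference
    for _ in range(rounds):
        total = sum(pheromone)
        # Share of traffic ("ants") choosing each path, proportional to pheromone
        shares = [p / total for p in pheromone]
        # Evaporate the old trail, then deposit in proportion to traffic / cost
        pheromone = [
            (1 - evaporation) * p + deposit * s / length
            for p, s, length in zip(pheromone, shares, lengths)
        ]
    return pheromone


levels = reinforce_paths([4, 2, 3])
print([round(p / sum(levels), 2) for p in levels])  # traffic share per path
```

With path costs `[4, 2, 3]`, the cheapest path (index 1) ends up with the largest pheromone level, and therefore the largest share of traffic, purely through the local reinforce-and-evaporate rule; no step explicitly compares paths to pick a winner.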

Capitalizing on the Art of Integration

The ability to integrate these emerging technologies and apply them to use cases and business solutions beyond the sciences is compelling. It also suggests that app developers will want to staff up with core scientists, develop partnerships with labs, or find other ways not only to stay abreast of developments but also to harness their true potential. While new networks will emerge to support advanced solutions, a strong ecosystem and collaboration across industries will be essential. CableLabs is developing the network requirements and new architectures to enable the rapid adoption of these technology enablers by solution developers like you. To see the entire landscape of catalysts we’ve profiled, visit our Emerging Technology Timeline. In our next installment of this series, we will discuss the impact of emerging technology on immersive media and the potential for new end user experiences.

What will you do with the plethora of new technologies? How will you harness their potential in your product roadmap? Want to collaborate with a global innovation lab powering the next generation of applications on the network?

Contact us today and find out how we can help you develop these technologies which will fundamentally change the way your end users behave and the way you will interact with them.

About the Authors

Anju Ahuja

In our ever-evolving marketplace, Anju believes that taking a “Future Optimist” approach to solving challenging problems manifests solutions that benefit both the individual and the enterprise. Today Anju takes this approach to answer questions for emerging technologies like AR, VR, MR, AI and how they will work with traditional media, communications and the broader global cable industry. As Vice President of Market Development and Product Management, Anju leads the team whose charge is to enable transformative end user experiences, and revolutionize the delivery of new forms of content, while also unleashing massive monetization opportunities. Anju also serves on the Board of Directors of Cable & Telecommunications Association for Marketing (CTAM) as well as the President’s Advisory Council of Northern California Women in Cable Telecommunications (WICT). Silicon Valley Business Journal Women of Influence 2018 honoree.

Martha Lyons

Inventor, Futurist and Technologist, Martha Lyons is the Director of Market Development at CableLabs. With a wide-ranging career at Silicon Valley high-tech companies and nonprofits, Martha has over two decades of experience in turning advanced research into reality. A leading authority in the initiation and development of first-of-a-kind solutions, she currently focuses on identifying industry-leading opportunities for the cable industry. She is personally interested in how advances in intelligent agents, blockchain, bioengineering, novel materials, nanotech and holographic displays will create opportunities for disruptive innovation, to the delight of end users, in industries ranging from healthcare, retail and travel to media and entertainment. When Martha is not inventing the future, she enjoys disconnecting from technology and spending time outdoors, preferably near a body of water.

Technology

Emerging Technologies: New and Compelling Use Cases

How will emerging technologies impact industries powered by communication networks? What will this mean for your customers and end users?

In our annual Emerging Technology Timeline (ETT), we highlight provocative new technologies that will impact the development of novel solutions and the ecosystems they serve. Are you a product tsar, strategist or developer who relies on the power of the network to deliver solutions or launch new applications? If so, then this series is written for you by the lab that is inventing networks of the future.

With hundreds of technologies in seven sections, our timeline covers the present to 2023 and beyond. In this introduction, we touch upon some of the major themes that influenced our timeline: the body, professions reimagined, changing lifestyles and urban design.

Longer Better Lives: The Bionic You

Embellishment of the body with new and impactful technologies is putting society on the verge of a Cyber Human Revolution. Like the Industrial Revolution, this will lead to massive shifts in how we live, work and play.

In a biometrically connected world, people will be able to better monitor their health, stress and overall well-being, helping them "find their Zen" before they’d otherwise know they need it. Innovations will emerge that enable people to overcome their disabilities: the deaf will be able to hear, the blind to see, and the physically disabled to walk. For many more, our well-being and day-to-day experience will be enhanced by:

- Smart diagnostic clothing

- Hearables and smart contact lenses

- Powered exoskeletons and second skins

- Ingestible robots

- Bioacoustic sensing

- Neuro-enhanced interfaces

Unlike the Industrial Revolution, the Cyber Human Revolution will see technology enable consumer and individual choice, bringing power back to labor and lifestyle in new and unusual ways. Expect the above to transform lives and unleash individual capacity to participate, produce and perform in new ways.

Professions Reimagined, Lifestyles Unshackled

Changes to the body and personal productivity will also enable a larger and more diverse workforce. Changes in how we live and move about will follow. Some people may opt to live off-grid part- or even full-time, enabled by sustainable power sources, energy storage systems, water collection and monitoring, repurposing of waste into productive materials and so on.

Computers with human-like capabilities will emerge, creating a new set of jobs. True telepresence will change the workplace and lifestyles of the labor force. Autonomous vehicles will repurpose commutes and allow asset-lite living. Expect the rapid adoption and deployment of the following catalysts:

- Artificial Intelligence and Machine Learning

- Invisible interfaces

- Commercial and companion robotics

- Virtual and Augmented Reality

- Blockchain decentralized asset management

Meanwhile, mega productivity centers, which are already taking root in some of the world’s fastest-growing cities, will emerge as modern-day self-sufficient villages. These megacities will require new infrastructure and design with the network at the core.

The Sensor Driven World: Sentient Cities

The surge in data-driven activity has only begun. A convergence of technologies enabling Smart Cities is on an accelerated growth curve. We believe the future urban landscape will not only be "smart"; it will be auto-adaptive via artificial intelligence and sensors embedded within the network as well as within services on the network. The most progressive cities will behave like adaptive organisms. Expect the following to be pervasive and increasingly critical to lives and workforce productivity:

- Terabit speeds

- Intelligent bots

- Proliferation of tiny sensors

- Virtual, mixed and augmented reality

- A confluence of new services that leverage precise network capabilities

Municipalities must become increasingly forward-looking and work with communications service providers to build the cities of the future. Cities must respond to the increasing demands of enterprises and residents by embedding intelligence and new networks in future design. We further speculate that just as cities are competing for growth-engine enterprises like Amazon, they may have to compete for talented and skilled residents in the future too. A new landscape of applications and service providers will emerge, and we are ready to enable them.

CableLabs: Leading the Way to the Networks of the Future

Our technologists and product managers are developing innovations across wired and wireless technologies, network architectures, security and artificial intelligence. Rapidly adopted innovations are often best developed across ecosystems, and we collaborate across industries dependent upon the network of the future to unleash their potential. If your solution or service depends upon advanced networks, you may be experiencing challenges related to the network or falling short with your customer experience. Our innovation lab is dedicated to removing these pain points and obstacles to your success.

Emerging Technology Timeline Part 2 and Beyond

Each month, we will bring you a new view of our Emerging Technology Timeline in the sequence below:

Think big about your product roadmap and unlock your industry's long-term potential. Check back soon for a new view of how emerging technologies and experiences will affect you and your enterprise. Not enough? Reach out to the authors to learn more.

---

About the Authors

Anju Ahuja

In our ever-evolving marketplace, Anju believes that taking a “Future Optimist” approach to solving challenging problems yields solutions that benefit both the individual and the enterprise. Today Anju takes this approach to answer questions about emerging technologies like AR, VR, MR and AI and how they will work with traditional media, communications and the broader global cable industry. As Vice President of Market Development and Product Management, Anju leads the team whose charge is to enable transformative end-user experiences and revolutionize the delivery of new forms of content, while also unleashing massive monetization opportunities. Anju also serves on the Board of Directors of the Cable & Telecommunications Association for Marketing (CTAM) as well as the President’s Advisory Council of Northern California Women in Cable Telecommunications (WICT).

Martha Lyons

Inventor, Futurist and Technologist, Martha Lyons is the Director of Market Development at CableLabs. With a wide-ranging career at Silicon Valley high-tech companies and nonprofits, Martha has over two decades of experience in turning advanced research into reality. A leading authority in the initiation and development of first-of-a-kind solutions, she currently focuses on identifying industry-leading opportunities for the cable industry. She is personally interested in how advances in intelligent agents, blockchain, bioengineering, novel materials, nanotech and holographic displays will create opportunities for disruptive innovation, to the delight of end users, in industries ranging from healthcare, retail and travel to media and entertainment. When Martha is not inventing the future, she enjoys disconnecting from technology and spending time outdoors, preferably near a body of water.

Technology

Towards the Holodeck Experience: Seeking Life-Like Interaction with Virtual Reality

By now, most of us are well aware of the market buzz around virtual and augmented reality. Many of us, at one point or another, have donned the bulky head-mounted gear and tentatively stepped into the experience to check it out for ourselves. And, depending on how sophisticated your setup is (and how much it costs), your mileage will vary. Ironically, some research suggests that baby boomers are more likely to be “blown away” by virtual reality than millennials, who are more likely to respond with an ambivalent “meh.” And this brings us to the ultimate question simmering in the minds of a whole lot of people: is virtual reality here to stay?

It’s a great question.

Certainly, the various incarnations of 3D viewing over the last half-century suggest that we are not happy with something. Our current viewing conditions are not good enough, or … something isn’t quite right with the way we consume video today.

What do you want to see?

Let’s face it: the way we consume video today is not the way our eyes were built to record visual information, especially in the “real world.” When you look at the real world (which, by the way, is not what you are doing right now), your eyes capture much more information than the color and intensity of light reflected off the objects in the scene. In fact, the Human Visual System (HVS) is designed to pick up on many visual cues, and these cues are extremely difficult to replicate in both current-generation display technology and content.

Displays and content? Yes. Alas, it is a two-part problem. But let’s first get back to the issue of visual cues.

What your brain expects you to see

Consider this: for those of us with the gift of sight, the HVS provides roughly 90% of the information we absorb every day, and as a result, our brains are well tuned to the various laws of physics and the corresponding patterns of light. Put more simply, we recognize when something just doesn’t look like it should, or when there is a mismatch between what we see and what we feel or do. These mismatches in sensory signals are where visual cues come into play.

Here are some of the most important cues:

- Vergence distance: Vergence is the inward or outward rotation of the eyes so that both lines of sight meet on the object being viewed. In the real world, the eyes also focus at that same distance, and the brain expects the two signals to agree. In a VR headset, however, the screen sits at a fixed focal distance from our eyes, so the eyes stay focused on that one plane no matter where objects appear to be. When the visual content is produced to simulate the illusion of depth (especially large changes in depth), the eyes converge at the simulated distance while remaining focused on the screen, and the brain recognizes a mismatch between the distance information it is getting from our eyes and the distance it has been trained to expect in the real world. The result? Motion sickness and/or a slew of other unpleasantries.

- Motion parallax: As you, the viewer, physically move (for example, walking through a room in a museum), objects that are closer to you should move more quickly across your field of view (FOV) than objects that are farther away, which should move more slowly.

- Horizontal and vertical parallax: Objects in the FOV should look different when viewed from different angles, whether your viewing position changes horizontally or vertically.

- Motion-to-photon latency: It is really unpleasant when you are wearing a VR headset and the visual content doesn’t change right away to follow the movements of your head. This lag is called “motion-to-photon” latency. To achieve a realistic experience, motion-to-photon latency must be less than 20 ms, which means that service providers, e.g., cable operators, will need to design networks that can deterministically support extremely low latency. After all, from the time you move your head, a lot of things need to happen, including signaling the head motion, identifying the content consistent with that motion, fetching the content if it is not already available to the headset, and so on.

- Occlusion support, including the filling of “holes”: As you move through, or across, a visual scene, objects in front of or behind other objects should block each other, or begin to reappear, consistent with your movements.
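Two of the cues above lend themselves to quick arithmetic. The sketch below is a minimal illustration, not part of any real VR pipeline. It computes how fast a stationary object sweeps across the field of view for a walking viewer, using the standard relation ω = v·sin θ / d, so nearer objects move faster. It also checks a set of per-stage delays against the 20 ms motion-to-photon budget. The walking speed, distances, stage names and stage timings are all hypothetical values chosen for illustration.

```python
import math


def angular_speed_deg_per_s(speed_m_s: float, distance_m: float, angle_deg: float = 90.0) -> float:
    """Angular speed of a stationary point seen by a viewer moving in a straight line.

    omega = v * sin(theta) / d, where theta is the angle between the direction of
    travel and the line of sight (90 degrees means the object is directly to the side).
    """
    omega_rad_per_s = speed_m_s * math.sin(math.radians(angle_deg)) / distance_m
    return math.degrees(omega_rad_per_s)


def fits_motion_to_photon_budget(stage_ms: dict[str, float], budget_ms: float = 20.0) -> bool:
    """True if the summed per-stage delays stay strictly under the budget."""
    return sum(stage_ms.values()) < budget_ms


# Hypothetical walking speed of 1.4 m/s past objects 1 m and 10 m away.
near = angular_speed_deg_per_s(1.4, 1.0)
far = angular_speed_deg_per_s(1.4, 10.0)
print(f"near object: {near:.1f} deg/s, far object: {far:.1f} deg/s")

# Hypothetical stage timings in milliseconds; real values depend on the system.
stages = {
    "sense_and_signal_head_pose": 2.0,
    "select_matching_content": 3.0,
    "fetch_over_network": 8.0,
    "render_and_display": 6.0,
}
print("within budget:", fits_motion_to_photon_budget(stages))
```

With these numbers, the nearer object sweeps across the view ten times faster than the farther one. The network fetch is only one of several stages that must share the 20 ms budget.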

It’s no wonder…

Given all of these huge demands placed on the technology by our brains, it’s no wonder that current VR is not quite there yet. But, what will it take to get there? How far does the technology still have to go? Will there ever be a real holodeck? If “yes”, when? Will it be something that we experience in our lifetimes?

The holodeck first properly appeared in Star Trek: The Next Generation in 1987. It was a virtual reality environment that used holographic projections to make it possible to interact physically with the virtual world.

Fortunately, there are a lot of positive signs that we might just get to see a holodeck sometime soon. Of course, that is not a promise, but there is evidence that content production, distribution and display are making significant strides. How, you say?

Capturing and displaying light fields

Light fields are 3D volumes of light, as opposed to the ordinary 2D planes of light that are commonly distributed from legacy cameras to legacy displays. When the HVS captures light in the natural world (i.e., not from a 2D display), it does so by capturing light from a 3D space, i.e., a volume of light being reflected from the objects in our field of view. That volume of light contains the information needed to trigger the all-important visual cues for our brains, allowing us to experience the visual information in a way that is natural to our brains.

So, in a nutshell, not only does there need to be a way to capture that volume of light, but there also needs to be a way to distribute it over a network (a cable network, for example), and there needs to be a display at the end of the network that is capable of reproducing the volume of light from the digital signal sent over the network. A piece of cake, right?
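To get a feel for why distribution is the hard part, here is a back-of-the-envelope estimate of uncompressed bit rate. It assumes a light field sampled as a grid of ordinary 2D views, as a camera array would capture it. That is one common way to sample a light field, not necessarily the format any of the companies below use. The 16 × 16 grid, 1080p resolution, 24-bit color and 30 frames per second are hypothetical parameters.

```python
def raw_light_field_bps(views_x: int, views_y: int, width: int, height: int,
                        bits_per_pixel: int, frames_per_s: int) -> int:
    """Uncompressed bit rate of a light field sampled as a grid of 2D views."""
    return views_x * views_y * width * height * bits_per_pixel * frames_per_s


# Hypothetical capture: a 16 x 16 grid of 1080p views, 24-bit color, 30 frames/s.
light_field = raw_light_field_bps(16, 16, 1920, 1080, 24, 30)
single_view = raw_light_field_bps(1, 1, 1920, 1080, 24, 30)

print(f"single 2D view: {single_view / 1e9:.2f} Gbit/s uncompressed")
print(f"16 x 16 light field: {light_field / 1e9:.1f} Gbit/s uncompressed")
```

Under these assumptions, the light field needs about 382 Gbit/s before compression, 256 times the roughly 1.5 Gbit/s of a single view. This is why efficient compression matters so much.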

Believe it or not

There is evidence of significant progress on all fronts. For example, at the F8 conference earlier this year, Facebook unveiled its light field cameras and corresponding workflow. Lytro is also a key player in the light field ecosystem with its production-based light field cameras.

On the display side, there are Light Field Lab and Ostendo, both on a mission to make in-home viewing with light field displays (i.e., displays that are capable of projecting a volume of light) a reality.

On the distribution front, both MPEG and JPEG have projects underway to make the compression and distribution of light field content possible. And, by the way, what is the digital format for that content? Check out this news from MPEG’s 119th meeting in Torino:

At its 119th meeting, MPEG issued Draft Requirements to develop a standard to define a scene representation media container suitable for interchange of content for authoring and rendering rich immersive experiences. Called Hybrid Natural/Synthetic Scene (HNSS) data container, the objective of the standard will be to define a scene graph data representation and the associated container for media that can be rendered to deliver photorealistic hybrid scenes, including scenes that obey the natural flows of light, energy propagation and physical kinematic operations. The container will support various types of media that can be rendered together, including volumetric media that is computer generated or captured from the real world.

This latest work is motivated by contributions submitted to MPEG by CableLabs, OTOY, and Light Field Lab.

Hmmmm … reading the proverbial tea-leaves, maybe we are not so far away from that holodeck experience after all.

--

Subscribe to our blog to read more about virtual reality and more CableLabs innovations.

Technology

5G For All: The Need for Standardized 5G Technologies in the Unlicensed Bands

Wherever you turn in the wireless ecosystem today, 5G is the buzzword and the popular kid on the block… well, at least on some blocks. 3GPP (the 3rd Generation Partnership Project), which develops specifications for mobile networks and radio access technologies from GSM onward, is developing the 5G standards at an accelerated pace, underscoring the importance of 5G in the evolution of mobile networks. What is missing from the picture, however, is equal emphasis and urgency in developing standardized 5G solutions for the unlicensed bands.

--

According to the FCC:

Unlicensed Spectrum: “In spectrum that is designated as "unlicensed" or "licensed-exempt," users can operate without an FCC license but must use certified radio equipment and must comply with the technical requirements, including power limits, of the FCC's Part 15 Rules. Users of the license-exempt bands do not have exclusive use of the spectrum and are subject to interference.”

Licensed Spectrum: “Licensed spectrum allows for exclusive, and in some cases non-exclusive, use of particular frequencies or channels in particular locations. Some licensed frequency bands were made available on a site-by-site basis, meaning that licensees have exclusive use of the specified spectrum bands in a particular point location with a radius around that location.”

--

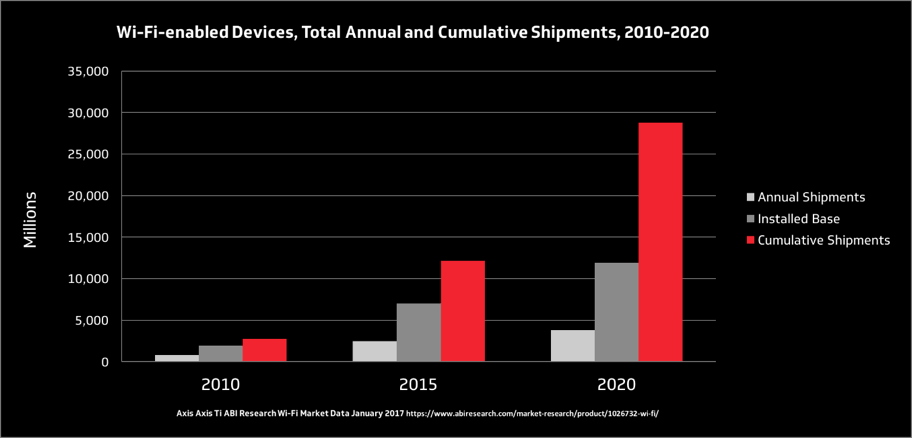

The unlicensed spectrum has a history of delivering connectivity to the masses at unparalleled scale and economy. Taking Wi-Fi as a proxy for the unlicensed spectrum, by 2020 the installed base of Wi-Fi devices is expected to reach nearly 12 billion, and cumulative shipments of Wi-Fi devices are expected to surpass a whopping 28 billion. (Note: world population is forecast to be 7.7 billion in 2020!)

One of the fundamental drivers of Wi-Fi’s success is its use of unlicensed spectrum, which makes Wi-Fi available to everyone and significantly lowers the cost and complexity of deploying a wireless network. Additionally, unlicensed spectrum plays a critical role in the success of licensed spectrum technologies. In 2016, 60% of mobile traffic was offloaded to the unlicensed spectrum. This means that if offloading to unlicensed spectrum had not been viable, mobile networks would have needed 2.5 times their capacity, an increase of 150%. However, the user experience when switching between mobile networks and Wi-Fi has not been ideal due to a lack of interoperability.
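The offload arithmetic is easy to get wrong, so here is a minimal sketch. It assumes total demand stays the same and only the share carried by the mobile network changes. If a fraction f of traffic is offloaded, the mobile network carries (1 − f) of it. Carrying everything would therefore take 1 / (1 − f) times the capacity.

```python
def capacity_multiplier_without_offload(offload_share: float) -> float:
    """Times its current capacity a mobile network would need if offloaded traffic came back.

    With a fraction f of traffic offloaded, the mobile network carries (1 - f) of the total,
    so carrying everything takes 1 / (1 - f) times the capacity.
    """
    if not 0.0 <= offload_share < 1.0:
        raise ValueError("offload_share must be in [0, 1)")
    return 1.0 / (1.0 - offload_share)


multiplier = capacity_multiplier_without_offload(0.60)  # 60% offloaded in 2016
increase_pct = (multiplier - 1.0) * 100.0
print(f"{multiplier:.1f}x the capacity, i.e. a {increase_pct:.0f}% increase")
```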

3GPP is now looking at enabling 5G technologies in the unlicensed bands; specifically, 3.5 GHz, 5 GHz and 60 GHz. The study led by Qualcomm was approved in March of 2017, with the results expected to be handed off to 3GPP in June of 2018 for review. While this is exciting news for fans of unlicensed spectrum, it comes at a slower pace than its licensed spectrum counterpart. It is expected that 3GPP will finalize the licensed spectrum Non-Standalone (NSA) 5G enhanced mobile broadband specifications by March of 2018, thus enabling 5G network deployments in early 2019.

Although the schedule is not ideal, it is a step in the right direction. The popularity of the unlicensed spectrum (2.4 GHz and 5 GHz) has driven high levels of congestion, and with user demand continually increasing, spectrum depletion is a real risk. Fortunately, the 60 GHz band offers a huge swath of underutilized spectrum that is ripe for 5G standalone network deployments. The 60 GHz band has 14 GHz of available spectrum (57 GHz – 71 GHz), which on its own is more than all the licensed spectrum being considered for 5G networks, including licensed spectrum for 2G/3G/4G mobile networks!
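To put the 60 GHz figure in perspective, the sketch below sums the widths of the unlicensed bands Wi-Fi relies on today and compares them with the 57–71 GHz band. The 2.4 GHz and 5 GHz band edges are an assumption on our part: the U.S. FCC allocations as of about 2018 (the 2.4 GHz ISM band and the U-NII-1, U-NII-2A, U-NII-2C and U-NII-3 bands). Other regions differ.

```python
# Band edges in MHz. Assumed: U.S. FCC unlicensed allocations as of about 2018
# (2.4 GHz ISM band; 5 GHz U-NII-1, U-NII-2A, U-NII-2C and U-NII-3; 57-71 GHz).
UNLICENSED_BANDS_MHZ = {
    "2.4 GHz": [(2400.0, 2483.5)],
    "5 GHz": [(5150.0, 5250.0), (5250.0, 5350.0), (5470.0, 5725.0), (5725.0, 5850.0)],
    "60 GHz": [(57000.0, 71000.0)],
}


def total_width_mhz(ranges: list[tuple[float, float]]) -> float:
    """Sum the widths of a band's (low, high) frequency ranges."""
    return sum(high - low for low, high in ranges)


widths = {band: total_width_mhz(r) for band, r in UNLICENSED_BANDS_MHZ.items()}
legacy_mhz = widths["2.4 GHz"] + widths["5 GHz"]
for band, width in widths.items():
    print(f"{band}: {width:,.1f} MHz")
print(f"60 GHz vs. 2.4 + 5 GHz combined: {widths['60 GHz'] / legacy_mhz:.0f}x")
```

Under these assumptions, the 60 GHz band alone offers roughly 21 times the spectrum of the 2.4 GHz and 5 GHz bands combined.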

We have addressed the business case for 5G technologies in the unlicensed bands, but what’s in it for the end user? The ability to make high-speed, low-latency wireless networks widely available has the potential to significantly disrupt what’s possible in our everyday lives, such as in education, healthcare, transportation, commerce, the way we work, entertainment, and most importantly, the way people connect with each other. Additionally, the availability of high-speed, low-latency wireless networks enables a new platform for innovation, on which applications we have yet to think of will be developed. The possibilities are endless and limited only by our imagination. As an example, take a look at our short video The Near Future: A Better Place here.

Harnessing the capabilities of 5G technologies and coupling them with unlicensed spectrum, so that users can enjoy a seamless wireless experience across licensed and unlicensed bands, is one of those truly rare instances where 1 + 1 = 3. What we cannot afford is to be slow in driving standardized 5G technologies in the unlicensed bands, because we would miss out on what the coupling of 5G and unlicensed spectrum has to offer.

--

You can find out more about what CableLabs is doing in this space by reading our Inform[ED] Insight on 5G here. Subscribe to our blog to find out more about 5G in the future.

Technology

Introduction to Proactive Network Maintenance (PNM): The Importance of Broadband

This is the introduction for our upcoming series on Proactive Network Maintenance (PNM).

The advent of the Internet has had a profound impact on American life. Broadband is a foundation for economic growth, job creation, global competitiveness and a better way of life. The internet is enabling entire new industries and unlocking vast new possibilities for existing ones. It is changing how we educate children, deliver health care, manage energy, ensure public safety, engage government and access, organize and disseminate knowledge.

With so much riding on broadband service, the focus falls on customer service: creating a faster and more reliable broadband experience that delights customers. Recent technological advances in systems and solutions, as well as agile development, have enabled new cloud-based tools to enhance the customer experience.

Over the past decade, CableLabs has been inventing and refining tools to improve the experience of broadband. CableLabs is providing both specifications and reference designs to interested parties to improve how customers experience their broadband service. Proactive Network Maintenance (PNM) is one of these innovations.

What is Proactive Network Maintenance and Why Should You Care?

Proactive network maintenance (PNM) is a revolutionary philosophy. Unlike predictive or preventive maintenance, proactive maintenance depends on constant and rigorous inspection of the network to look for the causes of a failure before that failure occurs, rather than treating network failures as routine or normal. PNM is about detecting impending failure conditions and remediating them before problems become evident to users.

In 2008, the first instantiation of PNM was pioneered at CableLabs. This powerful innovation mathematically analyzed information available in each cable modem to identify impairments in the coax portion of the cable network. From then on, every cable modem in the network became a troubleshooting device that could be used as a preventive diagnostic tool.
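The post doesn't say which modem data or math the 2008 technique used. One commonly described way to turn modem data into a location hint, assumed here purely for illustration, reads a modem's upstream adaptive pre-equalizer coefficients. A micro-reflection shows up as energy in a tap some number of symbol periods after the main tap, and that delay implies how much cable separates the two impedance mismatches causing the echo. Several values below are assumptions: the 24-tap layout, the main tap at index 7, the 5.12 Msym/s symbol rate and the 0.87 velocity factor. A real analysis would also apply thresholds and handle multiple echoes.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def strongest_post_echo(taps: list[complex], main_index: int) -> tuple[int, float]:
    """Find the strongest tap after the main tap.

    Returns (offset in symbol periods after the main tap, level in dB relative to the main tap).
    """
    main_energy = abs(taps[main_index]) ** 2
    best_offset, best_energy = 0, 0.0
    for i in range(main_index + 1, len(taps)):
        energy = abs(taps[i]) ** 2
        if energy > best_energy:
            best_offset, best_energy = i - main_index, energy
    if best_energy == 0.0:
        return 0, float("-inf")
    return best_offset, 10.0 * math.log10(best_energy / main_energy)


def echo_cavity_length_m(tap_offset: int, symbol_rate_hz: float, velocity_factor: float) -> float:
    """Distance between two impedance mismatches implied by an echo delay.

    The echo travels the span between the mismatches twice, hence the division by 2.
    """
    delay_s = tap_offset / symbol_rate_hz
    return delay_s * SPEED_OF_LIGHT_M_PER_S * velocity_factor / 2.0


# Hypothetical 24-tap equalizer: main tap at index 7, a small echo three taps later.
taps = [0j] * 24
taps[7] = 1.0 + 0j
taps[10] = 0.05 + 0j
offset, level_db = strongest_post_echo(taps, main_index=7)
distance = echo_cavity_length_m(offset, symbol_rate_hz=5.12e6, velocity_factor=0.87)
print(f"echo {offset} taps after main, {level_db:.1f} dB, about {distance:.0f} m of cable")
```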

This is important when tracking down transient issues related to the time of day, temperature and other environmental variables, which can play a huge role in the performance of the cable system. With transient issues, it is important to have sensors continually monitoring the network. Since then, improvements in technology have brought more sophisticated tools, giving operators unprecedented amounts of information about the state of the network.

Problems are solved quickly and efficiently because we can pinpoint where they are. Technicians like PNM because it empowers them to find and fix issues. An impairment originating inside a customer’s home can be dispatched to a service technician, while impairments originating on the cable plant itself can be dispatched to line technicians. Customer service agents also like the tools because they create actionable service requests. Lastly, impairments that can be attributed to headend alignment issues can be routed to a headend technician. All of this can be done before the customer is even aware there is a problem!
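The routing described above can be sketched as a simple triage pass. The grouping rule here is a hypothetical rule of thumb, not CableLabs' method. An impairment seen on only one modem on a node is treated as likely in-home. One shared by several modems on the same node is treated as likely on the plant. A node already flagged for headend alignment goes to the headend. The modem and node names are made up.

```python
from collections import defaultdict


def route_impairments(
    impaired_modems: list[str],
    modem_to_node: dict[str, str],
    headend_alignment_nodes: frozenset[str] = frozenset(),
) -> dict[str, str]:
    """Map each ticket target (a modem or a node) to the team that should handle it.

    Hypothetical rule of thumb:
      - node flagged for headend alignment       -> headend technician
      - only one impaired modem on its node      -> likely in-home, service technician
      - several impaired modems on the same node -> likely plant, line technician
    """
    modems_by_node: dict[str, list[str]] = defaultdict(list)
    for modem in impaired_modems:
        modems_by_node[modem_to_node[modem]].append(modem)

    tickets: dict[str, str] = {}
    for node, modems in modems_by_node.items():
        if node in headend_alignment_nodes:
            tickets[node] = "headend technician"
        elif len(modems) == 1:
            tickets[modems[0]] = "service technician"
        else:
            tickets[node] = "line technician"
    return tickets


tickets = route_impairments(
    impaired_modems=["cm-1", "cm-2", "cm-3", "cm-4"],
    modem_to_node={"cm-1": "node-A", "cm-2": "node-B", "cm-3": "node-B", "cm-4": "node-C"},
    headend_alignment_nodes=frozenset({"node-C"}),
)
print(tickets)
```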

So, what does CableLabs have to do with all this?

The PNM project continues to innovate. Because of PNM’s success in the cable network, capabilities have been added to investigate in-home coax and Wi-Fi networks, with fiber optic networks to follow soon. Monitoring is key: by using powerful cloud-based predictive algorithms and analytics, operators can monitor networks 24/7 to provide insights, follow trends and detect important clues, with the goal of identifying, diagnosing and fixing issues before customers notice any impact.

CableLabs provides a toolkit of technical capabilities and reference designs that interested parties can use to create and customize tools fitting specific business needs. Operators can get started with reference designs, build expertise and their own solutions, and integrate the tools into their own systems. In addition, suppliers have licensed the technology and are creating turnkey solutions that operators can choose to work with.

--

In my upcoming series, I will cover DOCSIS PNM, MoCA PNM, Optical PNM, Common Collection Framework and explore in greater depth how PNM enhances the customer experience. Be sure to subscribe to our blog to find out more.

Consumer

Hello Blockchain . . . Goodbye Lawyers?

As blockchain technology’s star begins to eclipse Bitcoin and the other cryptocurrencies that rely upon it, research and development into using blockchain for “smart contracts” has increased. Smart contracts are computer programs that facilitate, verify, execute, and enforce a contract. While smart contracts have existed to a limited extent for years in commodities markets and in the vending machine and adjustable-rate mortgage industries, blockchain technology enables smart contracts to expand to new uses and, ultimately, to become mainstream, because contracts in blockchains are attestable, immutable, and visible.

What is a Contract? What is Blockchain?

A contract, in its simplest terms, is an agreement between people to do or refrain from doing something in exchange for something else. The agreement, generally, can be formed through a mutually signed document, a series of emails, verbal communication, clicking “I Agree” or any action showing agreement. A contract may even be formed by a simple nod of the head. A “person” can be a human being or an entity created in law with the ability to contract, like a corporation or a limited liability company. For an agreement to be legally enforceable, it cannot be against the law. Yet another reason why you don’t see drug dealers in court (criminal court excepted).

Blockchain is a cryptographic technology that is used to create distributed, verifiable electronic ledgers to record events. For more detail, refer to Steve Goeringer’s post. Smart contracts leverage blockchain technology not only to record the individuals and the amounts in a transaction but also to set up a self-executing “if this, then that” structure using scripts.
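To make "verifiable" concrete, here is a minimal sketch of the hash-linking idea behind a blockchain ledger. Each record commits to the SHA-256 hash of the record before it, so editing any earlier entry is detectable. This is a single-process illustration only. It deliberately leaves out distribution, consensus and digital signatures, which real blockchains need. The record fields and names are hypothetical.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64


def block_hash(index: int, data: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the record's contents."""
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain: list[dict], data: dict) -> None:
    """Append a record that commits to the hash of the record before it."""
    prev = chain[-1]["hash"] if chain else GENESIS_PREV
    index = len(chain)
    chain.append({"index": index, "data": data, "prev": prev, "hash": block_hash(index, data, prev)})


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; an edited record no longer matches its stored hash."""
    prev = GENESIS_PREV
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev"] != prev:
            return False
        if block["hash"] != block_hash(i, block["data"], prev):
            return False
        prev = block["hash"]
    return True


ledger: list[dict] = []
append_block(ledger, {"from": "alice", "to": "bob", "amount": 5})
append_block(ledger, {"from": "bob", "to": "carol", "amount": 2})
print("valid:", verify_chain(ledger))

ledger[0]["data"]["amount"] = 500  # tamper with an earlier record
print("valid after tampering:", verify_chain(ledger))
```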

A Smart Contract in Action

For example, if you and I were to agree upon a price for you to buy my car, we would both be worried about certain risks. I would worry whether your check will clear. You would want to know whether I actually hold clear title to the car, whether the car is mechanically sound and whether there are any liens on it, and you would want to confirm that the odometer reading is accurately stated on the title. Addressing these concerns may take a week or more. With a blockchain, all the concerns are addressed simultaneously.

For this example, let’s assume we are living in the near future. The title (VIN, ownership and odometer reading, the last kept current because my car phones in updates to its secured record) and any liens are encoded on the blockchain, the payment will be in Bitcoin (or some other cryptocurrency), and my contract with the mechanic is also on the blockchain. I would load the coded representation of our agreement onto the blockchain. The blockchain would immediately determine whether you had the funds and I had the proper title, check for the “this car is okay” approval from the mechanic, and check (and pay off) any liens. Then, if all the conditions are met, it would transfer your payment to me and transfer and record the title on the blockchain. We receive the mutual “okay” on our smartphones, and I give you the car key. The sale of the car becomes nearly instantaneous. So long as the cryptography is sound, there is no longer a need for trust. That is, I would not have to trust you to have the funds to purchase the car, and you would not have to trust that I actually had title to the car, that the car was mechanically sound, that there were no liens on it, and that the odometer reading was correct.
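The car sale above follows the "if this, then that" pattern. Every condition is checked first, and only when all of them hold do money and title move together. Here is a minimal sketch in ordinary Python standing in for contract code on a blockchain. The `Ledger` fields, names and amounts are hypothetical. The sketch has no cryptography, and nothing guarantees atomicity beyond a single process.

```python
from dataclasses import dataclass


@dataclass
class Ledger:
    """Hypothetical on-chain state for the car-sale example (amounts in whole currency units)."""
    balances: dict[str, int]
    titles: dict[str, str]               # VIN -> current owner
    odometers: dict[str, int]            # VIN -> recorded mileage
    liens: dict[str, tuple[str, int]]    # VIN -> (lienholder, amount owed)
    mechanic_approved: set[str]          # VINs with a "this car is okay" approval


def settle_car_sale(ledger: Ledger, vin: str, seller: str, buyer: str,
                    price: int, stated_miles: int) -> bool:
    """Check every condition first; only if all hold, move money and title together."""
    lienholder, owed = ledger.liens.get(vin, ("", 0))
    conditions_met = (
        ledger.titles.get(vin) == seller
        and ledger.balances.get(buyer, 0) >= price
        and vin in ledger.mechanic_approved
        and ledger.odometers.get(vin) == stated_miles
        and owed <= price
    )
    if not conditions_met:
        return False  # nothing changes, so neither party is exposed

    ledger.balances[buyer] -= price
    if owed:
        # Pay off the lien out of the sale price before the seller is paid.
        ledger.balances[lienholder] = ledger.balances.get(lienholder, 0) + owed
        del ledger.liens[vin]
    ledger.balances[seller] = ledger.balances.get(seller, 0) + price - owed
    ledger.titles[vin] = buyer
    return True


ledger = Ledger(
    balances={"buyer": 10_000},
    titles={"VIN123": "seller"},
    odometers={"VIN123": 42_000},
    liens={"VIN123": ("bank", 2_000)},
    mechanic_approved={"VIN123"},
)
ok = settle_car_sale(ledger, "VIN123", "seller", "buyer", 10_000, 42_000)
print(ok, ledger.balances, ledger.titles)
```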

Smart Contracts in the Cable Industry

Smart contracts can remove friction and provide transparency in the cable supply chain. For example, smart contracts could ensure that every time a cable operator shows a movie, appropriate payments are instantaneously made all the way down the programming supply chain. There is no need for audit as the transaction history is readily secured and apparent in the blockchain. Smart contracts could also reduce costs by streamlining content purchasing based upon industry standards. Smart contracts could also be readily applied to advertising insertion with payment made in real time.

So We Don’t Need Lawyers Anymore?

For certain simple transactions, for which you probably wouldn’t hire a lawyer anyway, you still won’t need one. However, the use of blockchain may reduce the need to use a lawyer to resolve post-contract issues like lack of payment or, in the previous example, bad title. Smart contracts may reduce the need for litigators but become an additional tool for the transactional lawyer to master. The question may be better phrased as, “Do lawyers need to learn coding along with Latin?” or “Do coders need law degrees?” or even, “Is coding a new role for paralegals?” Written contracts are legal documents, and while you are free to draft your own, drafting them for others constitutes practicing law without a license, which is against the law. While smart contracts will undoubtedly affect lawyers and the practice of law, they will not eliminate the need for lawyers right away. Smart contracts may, however, be one of several technologies that bring us one step closer to eliminating or reducing the need for lawyers in the future.

Data

The Analog Monkey on Our Backs: Wrong-Thinking about Data over Cable

We inherit a lot of things from the past, not all of them good. When the telephone was invented (by Alexander Graham Bell and, independently, Elisha Gray), it evolved from wired telegraph lines. This was a natural evolution, since the telegraph lines already existed and were proven, whereas radio technology was still in its infancy. When television was first introduced, it was radiated through the air to receivers in homes, as there were no cable systems yet, while radio broadcasting had by then become a proven technology. However, this was not how customers wanted to consume these services. When you are being entertained with pictures and sound, you usually prefer to be seated. But when you want to talk to someone, you are frequently on the go. So, due to technical constraints at the time, we ended up using a wired medium for an ideally mobile product and a wireless medium for an ideally sedentary one. This helps explain the marketing success of both cell phones and cable television.

As service providers, we are still adjusting the wired and wireless mix, and today’s technology continues to shape that adjustment.

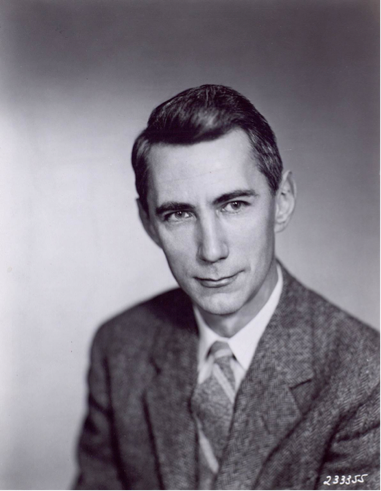

Claude Shannon (1916-2001)

In 1947, Claude Shannon came along with a remarkable paper, "Communication in the Presence of Noise," on the data capacity of a communications channel in the presence of random noise. He showed that the data capacity of the channel (C, in bits per second) equals the bandwidth of the channel (B, in Hz) times log2(1 + signal power/noise power).
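The formula is easy to evaluate directly. The short Python sketch below computes C = B × log2(1 + S/N); the function names and the example numbers (a 192 MHz channel at a few SNR values) are illustrative assumptions, not figures from this article.

```python
import math

def db_to_linear(snr_db: float) -> float:
    """Convert a power ratio in dB to a linear ratio."""
    return 10 ** (snr_db / 10)

def shannon_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon capacity C = B * log2(1 + S/N), in bits per second."""
    return bandwidth_hz * math.log2(1 + snr_linear)

if __name__ == "__main__":
    bandwidth = 192e6  # illustrative channel width, Hz
    for snr_db in (20, 30, 40):  # hypothetical signal-to-noise ratios
        c = shannon_capacity(bandwidth, db_to_linear(snr_db))
        print(f"{snr_db} dB SNR: {c / 1e9:.2f} Gbit/s")
```

Note how unequal the two levers are: doubling B doubles C, while at high SNR each extra 10 dB of SNR (ten times the signal power) adds only about 3.3 bit/s per Hz.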

This raises the question: what constrains the data capacity of a given medium? If you want to increase the data capacity of a channel, is the channel short of bandwidth, or is its signal power constrained? The answer varies by medium. In wireless networks, bandwidth is generally scarce; the US government sells it by the Hz, and it is very expensive. In fiber optic cable, signal power usually must be kept relatively low: a single-mode fiber starts to go nonlinear at only about 10 milliwatts, but it has an enormous amount of bandwidth. On coaxial cable, both power and bandwidth are constrained, and cable loss, which increases with frequency, degrades the signal-to-noise ratio at higher frequencies. The same is true for telephone twisted pair, although twisted pair attenuates signals much more than coax does. Cable’s capacity is constrained less by Shannon’s Capacity Theorem than by some common mistaken assumptions, including these:
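Whether a channel is bandwidth-limited or power-limited can be seen by holding the signal power fixed and widening the channel, so that white noise with density N0 grows along with B. The sketch below assumes ideal white Gaussian noise; the power and noise-density values are hypothetical and chosen only to make the two regimes visible.

```python
import math

def capacity_fixed_power(bandwidth_hz: float, signal_w: float, n0_w_per_hz: float) -> float:
    """Capacity when a fixed signal power is spread over bandwidth_hz.

    White noise with density n0_w_per_hz gives noise power N = N0 * B,
    so widening the channel also admits more noise.
    """
    snr = signal_w / (n0_w_per_hz * bandwidth_hz)
    return bandwidth_hz * math.log2(1 + snr)

def wideband_limit(signal_w: float, n0_w_per_hz: float) -> float:
    """Limit of capacity as bandwidth grows without bound: S / (N0 * ln 2)."""
    return signal_w / (n0_w_per_hz * math.log(2))

if __name__ == "__main__":
    S, N0 = 1.0, 1e-9  # hypothetical signal power (W) and noise density (W/Hz)
    for b in (1e6, 1e8, 1e10):
        print(f"B = {b:.0e} Hz: {capacity_fixed_power(b, S, N0) / 1e6:,.1f} Mbit/s")
    print(f"ceiling: {wideband_limit(S, N0) / 1e6:,.1f} Mbit/s")
    # At high SNR, doubling bandwidth helps far more than doubling power.
    base = capacity_fixed_power(1e6, S, N0)
    print(f"2x power: +{capacity_fixed_power(1e6, 2 * S, N0) / base - 1:.0%}")
    print(f"2x bandwidth: +{capacity_fixed_power(2e6, S, N0) / base - 1:.0%}")
```

When SNR is high, the channel is bandwidth-limited and extra spectrum pays off almost linearly; when SNR is low, capacity approaches the power-limited ceiling S/(N0 ln 2), and extra bandwidth adds little.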

Assumption

We need to deliver analog TV signals at any frequency that the cable plant carries.

Reality

Not true. There is no requirement for analog TV delivery at 1.8GHz, which is the highest frequency supported in the DOCSIS® 3.1 specification. In fact, the requirement to deliver ANY analog TV signals is disappearing, or is already gone.

Assumption

4096 QAM is four times better than 1024 QAM.

Reality

Not true again. 4096 QAM transports 12 bits per symbol, and 1024 QAM transports 10 bits per symbol, so 4096 QAM carries only 20% more data than 1024 QAM. But this 20% comes at the enormous price of four times the signal power (about 6 dB, or 300% more). On the other hand, if you could somehow find four times more bandwidth, that same signal power could run 1024 QAM across all of it and transmit four times as much data as a single 1024 QAM channel. (Hint: look above 1GHz)
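The arithmetic can be checked with a few lines of Python. This is a sketch under stated assumptions: both signals use the same symbol rate, and "same performance" is approximated as keeping the same spacing between constellation points (which ignores smaller effects such as nearest-neighbor counts). Under that assumption, square M-QAM needs average power proportional to M − 1.

```python
import math

def bits_per_symbol(m: int) -> int:
    """Bits carried by one symbol of M-QAM (M must be a power of two)."""
    if m < 2 or m & (m - 1):
        raise ValueError("M must be a power of two")
    return m.bit_length() - 1

def extra_power_db(m_high: int, m_low: int) -> float:
    """Extra average power (dB) that square m_high-QAM needs to keep the
    same spacing between constellation points as square m_low-QAM.
    Average symbol energy of square M-QAM with spacing d is (M - 1) * d**2 / 6."""
    return 10 * math.log10((m_high - 1) / (m_low - 1))

if __name__ == "__main__":
    gain = bits_per_symbol(4096) / bits_per_symbol(1024)
    print(f"capacity gain: {gain - 1:.0%}")                      # 20%
    print(f"extra power: {extra_power_db(4096, 1024):.2f} dB")  # 6.02 dB, i.e. about 4x
    # The same power spent instead on four 1024 QAM channels (4x bandwidth):
    print(f"bits per symbol period: {4 * bits_per_symbol(1024)} vs {bits_per_symbol(4096)}")
```

The last line compares 40 bits per symbol period across four 1024 QAM channels with 12 bits for one 4096 QAM channel, at roughly the same total signal power.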

Assumption

In RF line amplifiers, steep up-tilts are required.

Reality

Not true again. This is a carry-over from analog TV delivery and is no longer required in DOCSIS 3.1 networks. In fact, the optimal way to spend your signal power is explained in Shannon’s classic paper, particularly in Fig. 8; it is now referred to as the water-pour (or water-filling) method. Put simply, the water-pour method spends a larger share of the available signal power in bands with low noise and a smaller share in bands with high noise. Besides, it is simply not practical from a signal power standpoint to extend the up-tilt to 1.8GHz for analog TV delivery.
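A minimal sketch of the water-pour idea follows, assuming equal-width sub-bands whose noise is already referred to the transmitter (noise power divided by that band’s channel gain). It finds the "water level" by bisection and compares the result with a flat allocation and with a hypothetical up-tilt that gives each band power in proportion to its noise. The noise values and total power are made up for illustration and do not describe any real plant.

```python
import math

def water_fill(noise, total_power, iterations=200):
    """Water-filling power allocation over equal-width sub-bands.

    noise[i] is the noise power in sub-band i, referred to the transmitter.
    Returns (powers, level) with powers[i] = max(0, level - noise[i])
    and sum(powers) equal to total_power (to floating-point precision).
    """
    lo, hi = min(noise), max(noise) + total_power
    for _ in range(iterations):  # bisection on the water level
        level = (lo + hi) / 2
        used = sum(max(0.0, level - n) for n in noise)
        if used > total_power:
            hi = level
        else:
            lo = level
    level = (lo + hi) / 2
    return [max(0.0, level - n) for n in noise], level

def capacity_per_hz(powers, noise):
    """Total capacity in bit/s per Hz of sub-band width."""
    return sum(math.log2(1 + p / n) for p, n in zip(powers, noise))

if __name__ == "__main__":
    noise = [1.0, 2.0, 4.0, 8.0]  # hypothetical: referred noise rising with frequency
    total = 6.0                   # hypothetical total signal power
    allocations = {
        "water-pour": water_fill(noise, total)[0],
        "flat": [total / len(noise)] * len(noise),
        # hypothetical up-tilt: more power where referred noise is higher
        "up-tilt": [total * n / sum(noise) for n in noise],
    }
    for name, powers in allocations.items():
        print(f"{name:10s} {capacity_per_hz(powers, noise):.3f} bit/s/Hz")
```

With these numbers the noisiest band receives no power at all: water-pouring only fills bands whose referred noise lies below the water level, which is the opposite of what a steep up-tilt does.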

A technical paper on this topic has been published on the CableLabs website.

In conclusion, our networks are going all digital, the old requirements have changed, and there is a lot of additional data capacity available on cable networks. That capacity can be accessed both by using higher frequencies and by reallocating signal power more wisely.

Tom Williams is a Principal Architect at CableLabs.