Wireless

Up Next in Mobile Connectivity: The Technologies That Will Usher in 6G

Key Points

- Three game-changing 6G technologies have the potential to transform future networks: Joint Communication and Sensing (JCAS), Zero or Near-Zero Energy Communication and Artificial Intelligence (AI)/Machine Learning (ML).

- CableLabs is actively involved in 6G research and standards development, participating in various industry organizations to shape the future of wireless ecosystems.

The wireless cellular communication industry has always pushed boundaries. Waves of mobile-network generations — or Gs — have introduced a multitude of features, capabilities and improvements that have led to today’s 5G networks. Although 5G provides substantial improvements and serves multiple modern use cases, the industry is already discussing the key enabling technologies and requirements for 6G, with a target rollout date of 2030.

Building on previous generations’ trends, 6G technology is expected to drive exciting and far-reaching societal shifts in digital economic growth, sustainability, digital equality, trust and quality of life. But what are the technologies that will actually make it happen? This blog post aims to provide some insight.

What Is 6G, and What Will It Require?

A recurring theme among standardization organizations is an attempt to define where 5G ends and 6G begins in terms of offered use cases and associated key enabling technologies. The lines between 5G and 6G couldn’t be any blurrier, thanks to two feature-rich 5G-Advanced releases, which are intended to serve as a collective bridge to 6G.

The realization of the 6G standard will depend on a collection of key enabling technologies. This collection can be split into two sub-groups:

- The first sub-group will be made up of further enhancements and improvements to 5G and 5G-Advanced capabilities such as mmWave, Advanced MIMO, NR Reduced Capability (RedCap) for low-power communication, and Precise Positioning.

- The second sub-group will include brand-new technologies/capabilities that will be unique to 6G and aren’t currently available in 5G. In particular, we believe that three game-changing technologies have the potential to transform tomorrow’s networks. They are Joint Communication and Sensing (JCAS), Zero or Near-Zero Energy Communication (ZEC) and Artificial Intelligence (AI)/Machine Learning (ML).

Let’s dive deeper into these technologies.

Joint Communication and Sensing

JCAS — also known as Integrated Sensing and Communication (ISAC) — refers to an awareness of the physical environment around a device or base station (BS), achieved by leveraging currently deployed communication waveforms or by introducing new ones that better lend themselves to sensing and communication. Essentially, JCAS aims to map the environment using reflections of communication waveforms to gain additional dimensional awareness, thus reducing dependency on channel measurement operations and eventually dropping the dependency on technologies such as radars and lidars.

JCAS is equivalent to giving “eyes” to a device or BS, where it becomes aware of its relative proximity to physical boundaries that cause signal reflections and refractions to itself and other communication devices. The technology can even replace regular measures such as sensors and cameras for traffic monitoring.
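The ranging idea behind sensing with reflections can be illustrated with a minimal sketch. It assumes the device can measure the round-trip delay of an echo of its own signal and applies the basic time-of-flight relation (distance = speed of light × delay / 2). The function name and the sample delays are hypothetical; real JCAS waveforms and processing are still being defined and would be far more involved.

```python
# Illustrative only: basic time-of-flight ranging, not a 6G/JCAS specification.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def reflector_distance_m(round_trip_delay_s: float) -> float:
    """Estimate the distance to a reflecting object from an echo's round-trip delay."""
    if round_trip_delay_s < 0:
        raise ValueError("delay must be non-negative")
    # The signal travels to the reflector and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_delay_s / 2.0


if __name__ == "__main__":
    # Hypothetical echo delays (in seconds) for three reflections.
    for delay in (100e-9, 400e-9, 1e-6):
        print(f"{delay * 1e9:.0f} ns -> {reflector_distance_m(delay):.1f} m")
```

Collecting many such distance estimates across directions is, in spirit, how a map of nearby reflecting surfaces could be built up.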

Zero or Near-Zero Energy Communication

The wireless industry has always been conscious of energy consumption from a cost and environmental perspective. As a result, it has been pushing hard to achieve energy savings with protocols such as Long-Term Evolution Machine Type Communication (LTE-M), Narrowband Internet of Things (NB-IoT) and NR RedCap, all of which target lower-energy communication.

The 6G standard aims to take those efforts even further. After a signal is decoded, what happens to its energy? Can it be harvested back, similar to the way hybrid cars capture wasted energy from braking?

ZEC, or “Ambient IoT” as 3GPP refers to it, can benefit smart connected networks and applications where, for example, sensors may be installed in locations that later become inaccessible, making it impossible to charge or replace their batteries. In addition, ZEC can make it possible to drop batteries altogether, reducing the environmental footprint associated with producing them.

Imagine a drone hovering over a forest or bridge that locates embedded zero-energy sensors, wakes them up using 6G waveforms, commands the sensors to perform their measurements and then communicates their readings. This kind of enabling technology could pave the way to future IoT capabilities!

Given the nature of today’s communication-focused waveform design, 6G needs new waveforms that lend themselves to both energy transfer and communication. Moreover, unlike current systems, the new protocols need minimal overhead to reduce the communication burden on briefly energized devices.

Artificial Intelligence and Machine Learning

The growing complexity of successive wireless technology generations has made traditional analytical models insufficient for describing system behavior. In addition, the ubiquity of smart devices and AI-empowered applications means that 6G must address the need for hyper-distributed services, intelligent service deployments and semantic communication approaches that facilitate seamless service delivery, efficient resource usage and improved quality of service.

This unprecedented level of complexity requires a paradigm shift in the various approaches to network design, deployment and operations. That’s where AI/ML can help!

AI/ML technologies have increasingly become integral to mobile communications systems and digitalization across various industries. The AI/ML techniques needed in 6G are expected to be on an entirely new level. By embracing a data-driven paradigm, where new AI capabilities are embedded into various network nodes/endpoints and interfaces from the beginning, there’s an opportunity to design 6G with pervasive intelligence capabilities.

Moreover, the adoption of an AI-native air interface — where AI/ML is primarily used to design and optimize the physical and MAC layers instead of model-driven signal processing — promises significant performance improvements and operations efficiencies. In short, AI/ML could act as the backbone for efficiently realizing multiple other key enabling technologies such as ZEC and JCAS.

This new generation of AI/ML is expected to pose multiple challenges for practical deployment. Native AI has an inherent need for robust cooperation between network infrastructure and application layers, in addition to tight integration of computing and communications layers, to eventually provide pervasive end-to-end intelligence for everyone everywhere. Therefore, it’s crucial to evaluate the current 3GPP standardization process for specification development, performance evaluation and conformance testing.

Engage With CableLabs in Our 6G Efforts

CableLabs’ Technology Group is actively engaged in standards organizations such as 3GPP, IEEE, NextG Alliance, O-RAN and 6G WinnForum. Our work in these groups targets 6G research, seamless connectivity and convergence.

To get involved in shaping the next generation of wireless ecosystems, their use cases and respective technical solutions, consider participating in one or more of the various activities and industry organizations driving 6G.

Technology Vision

An Inside Look: Revolutionizing Connectivity with Dr. Curtis Knittle

Key Points

- Explore the breadth of innovation taking place in our Wired and Optical Center of Excellence labs, from DOCSIS® technologies to passive optical networking and advanced optics.

CableLabs is continuing its march toward achieving ubiquitous, context-aware connectivity that seamlessly integrates into our daily lives. With ever-increasing demands for intelligent and adaptable networks, CableLabs and our member and vendor community are at the forefront of this transformation for the broadband industry.

One way we’re helping drive the future of broadband beyond speed and toward greater reliability is by prioritizing advancements in fiber to the premises (FTTP), DOCSIS technologies, hybrid fiber coax (HFC), passive optical networking, advanced optics and other wireline technologies.

In a new “Inside Look” video from CableLabs, Dr. Curtis Knittle, VP of Wired Technologies, walks through his group’s work to advance CableLabs’ Technology Vision. Concentrating on four focus areas outlined within the Tech Vision (Interops & Vendor Ecosystem, Fixed Network Evolution, Specifications & Standards and Advanced Optics), the Wired Technologies team is developing solutions to deliver lightning-fast, symmetrical speeds and increased reliability.

Check out the short video above to learn more about their projects, our state-of-the-art labs and how our members — along with the vendor community — can collaborate with us in this transformative roadmap for the broadband industry.

“It’s their opportunity to define what they believe they will need in the future for their networks,” Knittle says.

Join us!

DOCSIS

DOCSIS 4.0 Interop·Labs: A Year of Progress and Collaboration

Key Points

- The latest DOCSIS® 4.0 Interop·Labs event involved a deeper look at products to showcase the continuing maturity of the DOCSIS 4.0 ecosystem.

- The event examined speed in a new way, verifying long-term high speed through the system — capabilities that can lead to new products and services for consumers.

- Interop events began in July 2023 after the launch of the CableLabs DOCSIS 4.0 Cable Modem Certification program.

Achieving interoperability in the broadband industry is no small feat. It takes time, patience and attention to detail. Collaboration, problem-solving and flexibility. But, once achieved, interoperability powers innovation and competition within the ecosystem. It enables expanded market opportunities for equipment suppliers and offers operators more options for their subscriber services.

CableLabs provides a neutral testing ground for those suppliers and operators to come together and showcase compatibility across interfaces defined in our DOCSIS 4.0 specifications. Since the launch of the CableLabs DOCSIS 4.0 Cable Modem Certification program in June 2023, we’ve spent many action-packed weeks hosting DOCSIS 4.0 Interop·Labs events. And how far we’ve come in just a year! To recap briefly:

- We’ve achieved a downstream speed of over 9 Gbps through a DOCSIS 4.0 modem — a speed record.

- Testing scenarios have focused on combining DOCSIS 4.0 modems and Remote PHY equipment, as well as DOCSIS 3.1 and DOCSIS 4.0 equipment together, to demonstrate flexibility and backward compatibility.

- We’ve explored network reliability — in particular, DOCSIS 4.0 cable modem proactive network maintenance (PNM) functions in DOCSIS 4.0 cable modem termination systems — and DOCSIS 4.0 security technologies.

DOCSIS 4.0 and DAA Technologies

That momentum is continuing with the success of CableLabs and Kyrio’s most recent event, which combined DOCSIS 4.0 technology and Distributed Access Architecture (DAA). Combining interoperability events for these technologies drives home the importance of compatibility across all system components.

During the June 24–27 event, attendees pushed even deeper into testing the products to examine the intricacies of interoperability in a more nuanced way. Thank you to the participants who helped make the event successful and, once again, helped us unlock a new level of productivity.

The featured exercises involved DOCSIS 4.0 cable modems and DAA equipment. The DAA products included Remote MACPHY devices and Remote PHY equipment such as virtualized cores and Remote PHY Devices (RPDs) that support DOCSIS 4.0 technology. All of these systems came together during the week of exercises to demonstrate multi-supplier interoperability across the DOCSIS ecosystem.

Continued High Level of Participation

Attendance remained high with new suppliers and new products. Operators joined us to observe, interact with the suppliers and talk about their own DOCSIS 4.0 network progress.

Among the suppliers were Casa Systems, CommScope and Harmonic, which brought DOCSIS 4.0 cores to the interop. Remote PHY Devices (RPDs) from Casa Systems, CommScope, DCT-DELTA, Harmonic and Vecima Networks offered a mix of DOCSIS 3.1 and DOCSIS 4.0 technologies. DOCSIS 4.0 cable modems were provided by Arcadyan, Askey, Hitron Technologies, MaxLinear, Sagemcom, Sercomm and Ubee Interactive. Calian attended with its Remote PHY test system, which can be used to verify interoperability between DOCSIS 4.0 cable modems and a DOCSIS 4.0 core. Microchip Technology participated with its clock and timing system. Rohde & Schwarz brought its DOCSIS Signal Analyzer for continued development on DOCSIS 4.0 systems.

Testing scenarios involved using a virtual core from one supplier, and multiple RPDs and DOCSIS 4.0 cable modems from various suppliers. The products were mixed and matched to verify interoperability scenarios and speeds through the system. As before, DOCSIS 3.1 and DOCSIS 4.0 equipment were combined to demonstrate the cross-compatibility of existing and new technology. The test equipment suppliers used these setups to verify their solutions.

This interop had a different vibe than past events but proved that DOCSIS 4.0 modems continue to mature, which is enabling new investigations into the technology.

Sustained Speed

Achieving a rate of 9 Gbps (or more) downstream through a DOCSIS 4.0 cable modem is the new normal. We’ve now achieved it on multiple modems and multiple cores. (And stay tuned. We’re expecting DOCSIS 4.0 modems that can exceed speeds of 10 Gbps!)

In the June interop, we began looking at sustained speed, that is, the stability of very high-speed traffic over several hours. One test used two modems on a virtual core and RPD and ran 4 Gbps through each modem (8 Gbps through the core) for three hours, with no loss of data. That was over 5 terabytes (TB) of download per modem in three hours!

We also tried to find out how fast a system could download 1 TB. By putting 9.2 Gbps through a modem, we reached the 1 TB download in about 15 minutes. That’s up to 50 hours of 4K movie content. More importantly, imagine what these speeds could mean for future services like home health care. This rate of high-speed data transfer will no doubt lead to transformative new products and services for the connected home.
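The arithmetic behind these figures can be checked with a short sketch. It assumes decimal units (1 Gbps = 1e9 bits per second, 1 TB = 1e12 bytes) and a constant line rate with no protocol overhead, so measured results may differ somewhat. The function names are illustrative.

```python
def terabytes_transferred(rate_gbps: float, hours: float) -> float:
    """Data moved at a constant rate, in decimal terabytes (1 TB = 1e12 bytes)."""
    bits = rate_gbps * 1e9 * hours * 3600
    return bits / 8 / 1e12


def minutes_to_transfer(terabytes: float, rate_gbps: float) -> float:
    """Time needed to move a given volume at a constant rate, in minutes."""
    bits = terabytes * 1e12 * 8
    return bits / (rate_gbps * 1e9) / 60


if __name__ == "__main__":
    # Sustained test: 4 Gbps per modem for three hours.
    print(f"{terabytes_transferred(4, 3):.1f} TB per modem")      # prints "5.4 TB per modem"
    # Time to move 1 TB at 9.2 Gbps, at the raw line rate.
    print(f"{minutes_to_transfer(1, 9.2):.1f} minutes for 1 TB")  # prints "14.5 minutes for 1 TB"
```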

Cable Modem PNM Operations

PNM is an important function for cable modems. These proven tools are used by engineers and technicians for maintenance, troubleshooting and improvement of the cable plant. More and more, the signals on the plant are orthogonal frequency division multiplexing (OFDM) and orthogonal frequency division multiple access (OFDMA), which provide higher speeds and capacities than traditional quadrature amplitude modulation (QAM) signals.

At this interop, we looked at five different PNM tests, specifically getting data from DOCSIS 4.0 cable modems and verifying interoperability with the application programming interfaces (APIs) and with the data generated by the modems. This PNM data will enable the most efficient operation of the coaxial cable network, keeping the data levels at their peak by using the more efficient OFDM and OFDMA signals.

Remote PHY Interoperability

While the modems were the focus, this event also included a deeper look at the interoperability between DOCSIS 4.0 cores and RPDs. These will be new infrastructure for operators and another interface around which interoperability must be proven. To facilitate best-in-class infrastructure for new DOCSIS 4.0 products and services, this interface has to demonstrate flexibility to allow configurations and channel plans that work best for an operator.

At the interop, we spent time purposely trying these new configurations. We sought to ensure the components asking for resources and assigning those resources can communicate and allow for flexibility in configuration that can be used in the near future as DOCSIS 4.0 technology moves into the field.

Join Us at SCTE TechExpo

The next DOCSIS 4.0 interop is planned for the week of Aug. 12 at CableLabs’ headquarters in Louisville, Colorado. The event will provide suppliers an opportunity to sharpen their products (and pitches) for the upcoming SCTE® TechExpo conference in Atlanta.

At TechExpo, the must-attend event for the broadband industry, CableLabs will highlight the products and efforts that occur at our Interop·Labs events. Full-access passes are free for CableLabs members. Register now to join us!

Events

Stronger Together: Connect at CableLabs ENGAGE Community Forum

Key Points

- ENGAGE, a virtual CableLabs forum, is exclusively for our NDA vendor community and member operators to learn about the latest CableLabs technologies and how to get involved.

- The free event will cover CableLabs' Technology Vision, how generative artificial intelligence can address industry challenges, standards organization involvement, sustainability and more.

Are you a senior technologist, product strategist or involved in planning your company’s technology roadmap? CableLabs’ exclusive ENGAGE virtual forum gathers our NDA vendor community and member operators to hear more about the latest technology developments at CableLabs.

Register now to join us at ENGAGE from 9 am to noon MDT on Wednesday, Aug. 7.

Building a Healthier Ecosystem

As an attendee, you’ll learn how you can more effectively engage with us on CableLabs projects and initiatives, and you’ll take away practical insights to help tailor your own innovation agenda. Together, we can shape the future of the industry.

CableLabs’ recently unveiled Technology Vision is a cornerstone for industry transformation, targeting ubiquitous, context-aware connectivity and an adaptive, intelligent network. It serves as a roadmap for advancing innovation and technology development in the coming years, fostering alignment and supporting unmatched scale for the industry — all to create a healthier, more competitive ecosystem for CableLabs’ operator members and the vendor community.

At the heart of all that we do at CableLabs is collaboration, and our Technology Vision relies on the combined strength and input of our members, the vendor community and other industry stakeholders. At ENGAGE, you'll hear more about how the framework can be leveraged — at an individual, company and industry level — to unlock new opportunities for better, more seamless online experiences and to fuel innovation at scale.

To support our vision, we continue to define standards and specifications to ensure greater compatibility, interoperability and progress for all of us in the ecosystem. Join us at ENGAGE to learn how we can create impact together.

What’s on the ENGAGE Agenda?

Throughout this morning of thoughtfully curated sessions, you will:

- Hear more about CableLabs’ activities in global standards organizations on behalf of the industry.

- Explore how the industry is responding to important and emerging areas, including sustainability/green initiatives and AI.

- Discover new working groups and initiatives that you can contribute to.

- Learn how engaging with CableLabs’ subsidiaries Kyrio and SCTE can drive success for your teams.

You won’t want to miss this opportunity to learn how to join CableLabs and our community of members and vendors on this exciting journey to shape the future of connectivity. Register now for your complimentary pass to this exclusive, virtual event.

Reliability

Reliability Is the Next Step on the Road to Intelligent, Adaptive Networks

Key Points

- Reliability is the new standard for user experiences as we work toward an adaptive era of seamless connectivity.

- DOCSIS® 4.0 technology moves us closer to true seamless connectivity and interoperability with technologies capable of higher throughput, lower latency and greater network cohesion.

- As part of our Technology Vision, CableLabs is spearheading technology innovation in areas like proactive network maintenance (PNM), network and service reliability, and optical operations and maintenance.

Reliability is the new standard for customer experiences, outpacing speed as the metric users care about most. According to 2023 consumer research, reliable broadband is the second most important consideration for people considering a home purchase, and a study by CyLab at Carnegie Mellon University found that users want to know how their broadband will perform in less-than-ideal conditions as well as in typical scenarios.

The growing demand for uninterrupted connectivity correlates directly with the proliferation of IoT devices and online activity. As people spend more of their time online for work, social interaction and entertainment, broadband reliability is critical to delivering quality experiences in each of those arenas.

As part of CableLabs’ Technology Vision, we’re fostering collaboration with CableLabs member operators and the vendor community across the industry as we work toward a new era of seamless network reliability. This era will be defined by ubiquitous, context-aware connectivity and intelligent, adaptive networks.

What Do We Mean by Broadband Reliability?

The term “reliability” means different things in different contexts. In terms of broadband, the term encompasses latency, connectivity, availability and resilience. Each new version of DOCSIS® technology has moved us closer to these goals.

With the introduction of DOCSIS 4.0 technology, we’re moving closer to true interoperability with technologies capable of faster speeds, higher throughput, lower latency and greater overall network cohesion. All this assures a more reliable broadband experience!

At CableLabs, we embrace all these concepts and we’re seeking to move the needle on both network reliability and service reliability:

- Network Reliability — The impact of failure — both financially and in terms of user experience — increases exponentially as user activity and network capacity increase. Network reliability aims to reduce the possibility of failure by creating a network that is resilient, available, repairable and maintainable. A reliable network also includes robust redundancy to ensure performance and continuity.

- Service Reliability — From a user perspective, reliability refers to the probability of not experiencing a service disruption. In the pursuit of seamless connectivity, the goal is to create always-on user experiences in which users can transition between networks without experiencing disruption.
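To make the notions of availability and redundancy above more concrete, here is a minimal sketch using standard reliability-engineering formulas. They are not taken from CableLabs material, and the redundancy formula assumes failures are independent. The function names and example values are hypothetical.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time a component is up.

    mtbf_hours: mean time between failures; mttr_hours: mean time to repair.
    """
    return mtbf_hours / (mtbf_hours + mttr_hours)


def redundant_availability(single: float, copies: int) -> float:
    """Availability of N redundant copies when any one of them is sufficient.

    Assumes the copies fail independently of one another.
    """
    return 1.0 - (1.0 - single) ** copies


def annual_downtime_minutes(avail: float) -> float:
    """Expected downtime per (365-day) year implied by an availability figure."""
    return (1.0 - avail) * 365 * 24 * 60


if __name__ == "__main__":
    # Hypothetical component: fails every 2,000 hours on average, 4 hours to repair.
    single = availability(2000, 4)
    paired = redundant_availability(single, 2)
    for label, value in (("single", single), ("redundant pair", paired)):
        print(f"{label}: {value:.6f} available, "
              f"{annual_downtime_minutes(value):.1f} min/year down")
```

The comparison shows why redundancy matters: under the independence assumption, duplicating a component squares its unavailability.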

Defining a Roadmap Toward Broadband Reliability

Reliability in the broadband industry depends on cultivating a deep understanding of the network’s physical layer while maintaining a digital mindset. Our mission at CableLabs is to advance the way we connect, and we’re continuously working to develop innovative technology applications aimed at achieving reliability goals and improving performance.

These efforts include helping the industry embrace and connect the concepts of broadband reliability and customer experience, working together to establish interoperability and determining what evolving broadband technology means for users at the ground level.

Here are some of the projects we’re most excited about:

Proactive Network Maintenance (PNM)

PNM has transformed the way operators use DOCSIS technology. Its advanced analytics find and correct impairments in the network that, left untreated, will eventually impact service. PNM can help hybrid fiber coax and fiber to the home networks be equally reliable, as PNM ensures dependable connectivity by addressing problems proactively before they lead to service impacts.

PNM innovations will ultimately change the way network operators work with DOCSIS technologies. CableLabs currently has several active PNM workstreams that are seeking to manage the resiliency capacity in the DOCSIS technology network so that users are never impacted by degradation.

With a wealth of network query and test capabilities, we continue to learn how to take full advantage of the information available to us. Development of the science behind operating DOCSIS networks is an important, ongoing effort. Carrying the knowledge into network operations is just as important.

An example of a related technology we’re developing is Generative AI (GAI) tools for network operations. As we learn more about the network and how to maintain it effectively, we need to put that knowledge to use. Through our research and development efforts, we’ve built solutions that turn knowledge into operations.

Quality by Design (QbD)

Another innovation that allows us to take advantage of such information is QbD. QbD tackles network reliability issues head-on by providing network operators direct visibility into the user experience.

The QbD concept, part of CableLabs’ Network as a Service (NaaS) technology, leverages applications’ APIs as network monitoring tools. By enabling applications to share real-time metrics that can be correlated with network performance, QbD allows operators to identify potential network issues and provide automated solutions.
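As a rough illustration of correlating application metrics with network performance, the sketch below computes a Pearson correlation between two time-aligned series. Everything here is an assumption for illustration: the metric names, the sample values and the 0.8 threshold are hypothetical, and the actual QbD implementation is not described in this post.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equally long series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical per-interval samples: stalls reported by a video app
    # and round-trip latency (ms) measured on the access network.
    app_stalls = [0, 1, 0, 3, 5, 4, 1, 0]
    net_latency_ms = [12, 15, 11, 40, 62, 55, 18, 13]

    r = pearson(app_stalls, net_latency_ms)
    print(f"correlation = {r:.2f}")
    if r > 0.8:  # hypothetical threshold for flagging a likely network cause
        print("application degradation tracks network latency; investigate the network")
```

A strong correlation only suggests where to look; it does not by itself establish that the network caused the application issue.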

Network and Service Reliability

This working group focuses on aligning service and network reliability and enabling operators to systematize tasks, standardize operations and ensure reliability. A 2022 paper outlines a roadmap to help operators get started on their journey toward addressing network and service reliability.

This working group has extended the roadmap into a more comprehensive set of practices that operators can implement and then share what they learn, so that the industry can manage network and service reliability more efficiently. Through a forthcoming SCTE publication, the working group will define practices that help operators ensure broadband networks and services are reliable and continue to improve. As networks transition to new technologies and services expand in their use of communications, this working group will continue to ensure that reliability is built into networks and services.

Optical Operations and Maintenance

The optical operations and maintenance working group is focused on aligning KPIs and streamlining operations to simplify the maintenance of optical networks. Participation has been strong, and the group already has agreement on a base architecture. The next step is to identify key telemetry and build solutions that support specific use cases.

Through simplification, we can improve interoperability tools, get new technologies to market faster and streamline networks overall. Overarching goals include reducing complexity, ensuring consistency and improving broadband reliability.

Tools and Readiness

This effort is focused on helping operators plan for DOCSIS 4.0 deployment, avoiding issues that may otherwise occur and improving the reliability of DOCSIS 4.0 technologies. Along with industry partners, CableLabs has released specifications for cable operator preparations for DOCSIS 4.0 technology. SCTE Network Operations Subcommittee Working Group 5 is nearly ready to extend this work into a best practices document that guides operators in preparing to deploy DOCSIS 4.0 technology.

Where Are We Headed?

In the next era of broadband, reliability will improve to the point that networks fade into the background of the user experience.

To achieve this, CableLabs’ Technology Vision defines a framework centered on three key themes: Seamless Connectivity; Network Platform Evolution; and Pervasive Intelligence, Security and Privacy. Within those themes, CableLabs is working with world-class experts to deliver flexible, reliable network solutions that can enable new services and improve user experiences.

How to Get Involved

CableLabs members have several opportunities to collaborate in cultivating the future of broadband technology. Consider attending our next Interop·Labs event, which will include PNM testing of DOCSIS 4.0 cable modems. Or join a working group to help move the needle on finding solutions for industry challenges.

We also invite you to learn more about our Technology Vision themes and focus areas. As we work toward the goal of network reliability and seamless connectivity, industry partnerships and collaboration will unlock the door to the next stage of broadband innovation.

Technology Vision

Beyond Speed: The New Eras of Broadband Innovation

Key Points

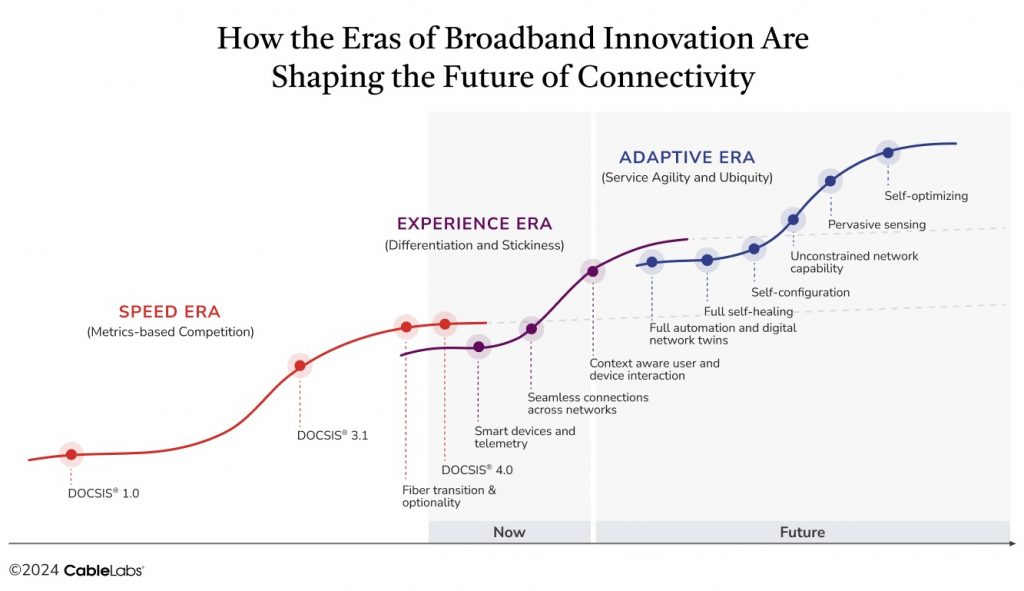

- CableLabs’ Technology Vision outlines three eras of broadband innovation: speed, experience and adaptivity.

- Tailored, seamless and adaptive experiences of the future will require industry collaboration and ecosystem interoperability.

Still trying to attract customers based on ever-increasing gigabit speeds? That may not be enough to gain a competitive advantage in today’s broadband industry. We’re entering a new era — one in which the market prioritizes seamless connectivity over technical metrics.

Users want technology that will work everywhere all the time, with no disruptions. It doesn’t matter to them if an operator can deliver 100 gigabits per second if their connection drops at a pivotal moment.

The broadband industry has reached a tipping point at which additional gains in speed no longer offer the substantial improvements to customer experiences that they used to. As quality of service (QoS) has improved over time, user expectations have also evolved. Users spend more and more time online, and they want higher-quality experiences and predictable delivery that won’t glitch or drop as they go about their day, whether they’re at home, on the go or anywhere in between.

In other words, users don’t want to think about their networks. They just want them to work, wherever they happen to be.

To achieve this outcome, the industry needs a network that can anticipate and adapt to users’ needs in real time as they move between networks and applications. Ultimately, the goal is ubiquitous, context-aware connectivity that fades into the background.

How do we get there? First, let’s take a look at the three eras of broadband innovation outlined in CableLabs’ Technology Vision: speed, experience and adaptivity.

Where We Started: The Speed Era

The early days of broadband innovation focused on metrics. Speed was the moving target for DOCSIS® 1.0 through DOCSIS 3.1 technologies, and the aim was to improve metrics that were visible to consumers. Companies touted faster and faster speeds as a competitive differentiator, and today we can deliver 10 gigabits per second, with even greater speed increases on the horizon.

While it is foundational to the future of the industry, speed has reached a saturation point. It has ceded its place as the primary driver of usage and is now an expectation, not a differentiator. Faster speeds are certainly likely in the future, but the focus has shifted to delivering explicit value in terms of experience.

Where We Are: The Experience Era

Broadband usage has reached an inflection point, with users requiring such services in nearly every aspect of their lives. They expect their networks not only to be reliable (and reliably fast) but also to transform the way they live, work, learn and play by enabling optimal experiences throughout all of their online interactions. Seamless connectivity has become the new target for the online experience.

Realizing these customer expectations will require advances in smart devices and telemetry, seamless connections across networks, and context-aware user and device interactions. Operators will also need to have a comprehensive understanding of network performance and how it impacts application experiences.

DOCSIS 4.0 technology moves us closer to seamless user experiences with its capability for delivering symmetrical multi-gigabit speeds while supporting high reliability, high security and low latency. Although network technology innovations have expanded user experience measures beyond speed, true seamless connectivity remains elusive.

There’s no one-size-fits-all solution to the seamless connectivity problem. In addition to the performance of their individual networks, providers must also consider:

- How to optimize the hand-off as customers move from one network to another

- How various user applications and devices impact the user experience

- How to meet the increasing demand for tailored experiences that anticipate needs before a disruption occurs

- How to deliver uninterrupted experiences as users move through various environments

Online experiences in an environment such as a hotel, for example, are currently dependent on that hotel’s Wi-Fi. This means the connection could be glitchy or service may be disrupted, leaving customers frustrated and their expectations unmet. The goal in the experience era is to ensure a Wi-Fi experience that matches those expectations no matter where the user is.

The problem is that measuring a user’s actual experience isn’t easy. Today we have only a limited set of intelligent network metrics that help us predict what the experience will be, and there’s still significant work to be done in this area.

Still, we’ve seen some promising developments in correlating metrics with experiential outcomes.

In recent work conducted by CableLabs, we’ve explored the potential of correlating application Key Performance Indicators (KPIs) to network KPIs. Through our work on Quality by Design (QbD), we can leverage the customer’s lived application experience to identify network impairments and take action on those issues before the user’s experience degrades. This could alleviate frustrating customer experiences and eliminate many unnecessary customer service interactions that result from not understanding the user’s experience in real time.
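To make the idea of correlating application KPIs with network KPIs a little more concrete, here is a minimal sketch in Python. It is not the Quality by Design method itself. It simply computes a Pearson correlation between one network KPI and one application KPI. The KPI names and sample values are hypothetical placeholders, not real measurements.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 samples")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)

# Hypothetical per-minute samples (assumed for illustration only).
network_latency_ms = [12, 15, 14, 40, 85, 90, 20, 13]
video_stall_seconds = [0.0, 0.0, 0.0, 0.5, 2.0, 2.5, 0.1, 0.0]

r = pearson(network_latency_ms, video_stall_seconds)
print(f"latency vs. stall time: r = {r:.2f}")
```

A strong positive value suggests the network KPI is worth watching as an early indicator for that application, although correlation alone does not establish the cause of a degraded experience.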

Where We’re Headed: The Adaptive Era

Ultimately, the emphasis on user experience will segue into an adaptive era that leverages self-configuration, sensing components for understanding environmental factors and predictive algorithms that anticipate future events to ensure uninterrupted experiences. Networks of the future will be smarter, more capable and less visible, essentially fading into the background.

To achieve this result, industry stakeholders will need to work together toward continued increases in capacity, significant enhancements in reliability and network intelligence, and increased network understanding to facilitate proactive responses. Focus areas in the adaptive era will include:

- Full Automation and Digital Network Twins — As more smart components are built into technology, we’ll gain access to telemetry that isn’t on the network today. The data will help us understand network performance in greater detail and build automation into the network.

- Full Self-Healing — The network will identify failures or weak points and automatically re-route data or allocate resources to maintain functionality.

- Self-Configuration — We have some self-configuration technology today, but it’s still fairly primitive. In the adaptive era, learning algorithms will be built in, fully responsive and able to configure themselves to the environment and the way users interact.

- Unconstrained Network Capabilities — Customers will be able to access their services from anywhere with Network as a Service (NaaS). Achieving this goal will require partnerships across providers and networks to create common understanding.

- Pervasive Sensing — The ability to sense fiber components, Wi-Fi, construction and other events will empower predictive adaptability and proactive responses.

- Self-Optimizing — By constantly analyzing performance metrics and user requirements, networks of the future will be able to optimize resource allocation, routing paths and other parameters for best performance.
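As a rough illustration of the self-healing idea in the list above, the sketch below recomputes a route when a link is marked as failed. It is a toy example under stated assumptions: the topology, node names and link costs are hypothetical, and a plain Dijkstra shortest-path search stands in for whatever mechanism a real network would use.

```python
import heapq

def shortest_path(links, source, target, failed=frozenset()):
    """Dijkstra over undirected links {(a, b): cost}, skipping failed links.

    Returns (total_cost, [nodes...]) or None if the target is unreachable.
    """
    graph = {}
    for (a, b), cost in links.items():
        if (a, b) in failed or (b, a) in failed:
            continue  # a failed link is treated as if it did not exist
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))

    queue = [(0, source, [source])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, link_cost in graph.get(node, []):
            if neighbor not in visited:
                heapq.heappush(queue, (cost + link_cost, neighbor, path + [neighbor]))
    return None

# Hypothetical topology: two paths from a hub to a home.
links = {
    ("hub", "node_a"): 1,
    ("node_a", "home"): 1,
    ("hub", "node_b"): 2,
    ("node_b", "home"): 2,
}

primary = shortest_path(links, "hub", "home")
backup = shortest_path(links, "hub", "home", failed={("node_a", "home")})
print("primary:", primary)
print("after failure:", backup)
```

In this toy topology the traffic moves from the path through node_a to the path through node_b once the failure is detected. A production system would also need failure detection, capacity checks and safeguards against route flapping, none of which are modeled here.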

The infrastructure to create these kinds of experiences is still in the early stages of development. However, we’re starting to see broadband innovations that incorporate adaptive elements. Examples include:

- Automated Profile Management Changes — Built-in mechanisms detect a problem in a section of the network and shift the traffic to a different range inside that frequency.

- Smart Home Technology — Smart home devices, such as thermostats and lighting systems, use sensors to understand environmental factors. They can then adjust settings accordingly, providing a more comfortable and energy-efficient living space.

- Environmental Sensing — Using various sensors and algorithms, networks can sense environmental events (e.g., seismic activity or nearby construction) and alert first responders or respond to avoid an outage.

What’s Next for the Industry?

Delivering true seamless connectivity — and enabling truly optimal application experiences — won’t happen overnight. To get there, we’ll need to work together as an industry to solve complex system-level issues and develop common platforms for interoperability.

As a whole, the industry must transition from its focus on improving network metrics such as speed. Operators must prioritize delivering tailored, seamless and adaptive experiences. Doing so will require common interfaces to support interoperability, integrated definitions and data structures, and collaboration across providers and networks.

The CableLabs Technology Vision seeks to move the industry forward by helping our members provide superior customer experiences everywhere and aligning the industry on a concise model for building adaptive networks through:

- Seamless Connectivity

- Network Platform Evolution

- Pervasive Intelligence, Security and Privacy

To design and implement the technologies and operational models of the future, operators will need to develop a common understanding that transforms the vision of the adaptive era into a reality for every customer. Together, we can deliver network solutions that are flexible, reliable and capable of enabling new services and improved user experiences.

Strategy

Analysis Weighs Upgrade Options for Achieving Symmetric Gigabit Services

Key Points

- DOCSIS 4.0 technology has arrived, giving operators another network upgrade choice to consider.

- CableLabs can provide key analyses to create a full economic picture of different upgrade options, including total-cost-of-ownership modeling based on members’ existing network inputs and upgrade paths.

The era of DOCSIS®️ 4.0 networks has arrived, and the technology is providing a cost-effective upgrade option and competitive choice for cable operators on their path to symmetric multi-gigabit services.

Considering this, CableLabs has conducted a comprehensive analysis of the financial implications associated with deploying and operating different access network technologies. More specifically, our analysis examines the total cost of ownership (TCO) for different upgrade paths for DOCSIS 3.1 low-split on hybrid fiber coax (HFC) access networks — including both DOCSIS over HFC and passive optical networking (PON) over fiber-to-the-home (FTTH) upgrade options.

In short, we find merits for both. The full results of our extensive analysis are outlined in a CableLabs strategy brief, available exclusively for CableLabs members. Members can register for an account here.

A Robust Analysis

It’s a good time for industry operators to consider different upgrade options, but it’s necessary for them to step back and look at the bigger picture when it comes to cost. Of course, it’s not as simple as tallying up the costs of construction and new equipment, or only adding up power and maintenance costs.

Our calculations, which we’ve continually refined over the past several years, include all these considerations and much more, making this analysis as comprehensive as possible — and more thorough than most public estimates.

Using real-world, anonymized data from CableLabs members, our robust calculations create a single metric to effectively compare access network options.
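For readers who want a feel for how a single comparison metric can fold together up-front and ongoing costs, here is a generic sketch. It is not the CableLabs model, whose inputs and methodology are described only in the member strategy brief. It uses a textbook formulation (up-front capital cost plus the present value of yearly operating costs), and every figure in it is a hypothetical placeholder.

```python
def total_cost_of_ownership(capex, annual_opex, years, discount_rate):
    """Up-front cost plus the present value of a constant yearly operating cost."""
    pv_opex = sum(
        annual_opex / (1 + discount_rate) ** year
        for year in range(1, years + 1)
    )
    return capex + pv_opex

# Hypothetical per-home figures for two unnamed upgrade paths (illustrative only).
options = {
    "option_a": {"capex": 300.0, "annual_opex": 40.0},
    "option_b": {"capex": 700.0, "annual_opex": 25.0},
}

for name, costs in options.items():
    tco = total_cost_of_ownership(
        costs["capex"], costs["annual_opex"], years=10, discount_rate=0.08
    )
    print(f"{name}: {tco:.2f} per home over 10 years")
```

The point of the sketch is only that a lower construction cost and a lower operating cost pull in different directions, so the ranking of options depends on the time horizon and discount rate chosen.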

A Flexible Model

Our analysis doesn’t provide a one-size-fits-all answer for operators considering an upgrade, but it can help inform their decisions when combined with financial objectives, existing network structure and the competitive landscape. This model will continue to evolve, and we’ll explore additional cost-related network upgrade issues in future strategy briefs.

Are you a CableLabs member operator curious to know what our analysis would reveal about your company’s upgrade options? We invite you to reach out and let us customize our analysis to your company’s needs. Read our strategy brief, "Economic Comparison of HFC and FTTH Access Networks," and connect with us for a walk-through of our findings.

For more about revenue impacts related to upgrade options, I recommend the Bandwidth Usage, Market Monitor and Discrete Choice Modeling reports — all available to CableLabs members.

DOCSIS

A Busy DOCSIS 4.0 Interop Event Sets a New Downstream Speed Record

Key Points

- The latest DOCSIS® 4.0 Interop•Labs event involved new suppliers bringing new products, showcasing the depth and breadth of the DOCSIS ecosystem.

- Multi-vendor interoperability testing at the event allowed us to achieve a downstream speed of over 9 Gbps through a DOCSIS 4.0 modem — a new speed record.

- Interops keep getting busier, highlighting the continued importance of interoperability across the industry.

CableLabs and Kyrio hosted a combined DOCSIS 4.0 technology and Distributed Access Architecture (DAA) Interop•Labs event April 15–18 at our headquarters in Louisville, Colorado. Combining the interops for these technologies drives home the fact that interoperability across all the system components is a high priority for the industry.

And once again, the operators and suppliers in attendance made this event the most successful interop to date — and the busiest. We even achieved a downstream speed of over 9 Gbps through a DOCSIS 4.0 cable modem!

At this Interop•Labs event, the focus again was on interoperability. The featured exercises involved DOCSIS 4.0 cable modems and Remote PHY equipment, including virtualized cores and Remote PHY Devices (RPDs) that support DOCSIS 4.0 technology. During the week, the mixing and matching of systems and components demonstrated multi-supplier interoperability across the DOCSIS 4.0 ecosystem.

Record-Setting Number of Devices

The event included new suppliers who brought new products, showcasing the depth and breadth of the DOCSIS ecosystem. We set a record for the highest number of DOCSIS 4.0 cable modems and the most RPDs involved in a single interop! Operators also attended to observe demonstrations, interact with suppliers and talk about their own DOCSIS 4.0 technology plans.

As you can probably guess, it was the busiest interop yet. With all the equipment, suppliers and operators on site, it was standing room only in the interop lab — not a bad problem to have. The sheer number of attendees meant we had to move quickly through test scenarios.

Casa Systems, CommScope and Harmonic brought DOCSIS 4.0 cores to the interop, and Calian, Casa Systems, CommScope, DCT-DELTA, Harmonic and Vecima Networks brought RPDs that offered a mix of DOCSIS 3.1 and DOCSIS 4.0 technologies. Arcadyan, Askey, Hitron Technologies, MaxLinear, Sagemcom, Ubee Interactive and Vantiva showcased DOCSIS 4.0 cable modems. Rohde & Schwarz brought its DOCSIS 4.0 test and measurement system, and Microchip Technology participated with its clock and timing system.

Testing scenarios involved using a virtual core from one supplier, an RPD from another supplier and multiple DOCSIS 4.0 modems from various suppliers. The products were mixed and matched to verify interoperability scenarios and speeds through the system. As before, DOCSIS 3.1 and DOCSIS 4.0 equipment were mixed to demonstrate cross-compatibility of existing and new technology. The test equipment suppliers used these setups to verify their solutions.
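The mixing and matching described above can be pictured as a simple combination matrix. The sketch below enumerates candidate pairings from the supplier lists named in this post, keeping only combinations in which the core and the RPD come from different suppliers. It is an illustration of the idea, not the event's actual test plan, and it assumes one modem per combination for simplicity even though the real scenarios used multiple modems.

```python
from itertools import product

# Supplier lists as named in this post.
cores = ["Casa Systems", "CommScope", "Harmonic"]
rpds = ["Calian", "Casa Systems", "CommScope", "DCT-DELTA", "Harmonic", "Vecima Networks"]
modems = ["Arcadyan", "Askey", "Hitron Technologies", "MaxLinear",
          "Sagemcom", "Ubee Interactive", "Vantiva"]

def interop_matrix(cores, rpds, modems):
    """All (core, RPD, modem) combinations where core and RPD suppliers differ."""
    return [
        (core, rpd, modem)
        for core, rpd, modem in product(cores, rpds, modems)
        if core != rpd  # assumption: only cross-supplier core/RPD pairs count
    ]

matrix = interop_matrix(cores, rpds, modems)
print(f"{len(matrix)} candidate cross-supplier combinations")
```

Even with this modest number of suppliers, the count of possible combinations grows quickly, which helps explain why a busy interop has to move quickly through its test scenarios.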

An Interop•Labs event combined both DOCSIS 4.0 and Distributed Access Architecture technologies in interoperability testing scenarios.

9 Gbps Downstream Speed Unlocked

Multi-vendor interoperability testing is how we achieved a downstream speed of over 9 Gbps. This speed record shows several things: First, the products are nearing feature-complete status and are now being optimized for the speeds that DOCSIS 4.0 technology will bring to market.

Second, DOCSIS 4.0 technology will compete effectively with 10-gigabit passive optical network (PON) solutions, which max out at about 8.5 Gbps downstream. DOCSIS 4.0 technology will not only surpass that speed but also provide more throughput to the neighborhood than is possible with 10-gigabit PON. And lastly, these speeds are available over existing coaxial cable already serving tens of millions of customers around the globe.

It's About Network Cohesion

The number of suppliers and products in the industry continues to rise, and CableLabs’ Interop•Labs events bring all of the components of a DOCSIS 4.0 network solution together. With each interop, we’re successfully mixing and matching products from various suppliers and demonstrating interoperability across the interfaces defined in CableLabs’ specifications.

In addition, together we’re achieving the multi-gigabit speeds and other advanced capabilities of DOCSIS 4.0 technology such as security, low latency and proactive network maintenance. Other parts of the DOCSIS 4.0 ecosystem are also becoming available, including hybrid fiber coax (HFC) network equipment such as amplifiers, taps and passives.

It’s exciting work, and we’re always looking forward to the new developments and takeaways that come out of events like these. Catch up on past interops and click the button below to stay up to date on future DOCSIS technology interop events.

Wireless

Wi-Fi: The Unsung Hero of Broadband

Key Points

- More Wi-Fi networks are being deployed to meet the growing need for better broadband connections.

- WFA Passpoint and WBA OpenRoaming have helped expand the public Wi-Fi network footprint.

We hear a lot about 5G/6G mobile and 10G cable these days, but another technology is also helping lead the pack to much less fanfare. It’s a technology that’s as essential as the public utilities you rely on every day. In fact, you’re most likely using it to read this blog post.

Just as you expect your electric, water, sewer or natural gas services to be available without interruption, you likely also depend on your Wi-Fi to be working all the time.

Regardless of the service you use to access the internet (e.g., coax, fiber, wireless or satellite), Wi-Fi is typically how you connect to that service. When was the last time you connected a wire to your laptop or had a great experience using your mobile device over the mobile network while inside your home or workplace? The fact is, most of us make use of Wi-Fi when we’re indoors.

Why Wi-Fi? Why Now?

With the introduction of Wi-Fi 6/6E, Wi-Fi 7 and Ultra High Reliability (the future Wi-Fi 8), Wi-Fi is evolving to meet and even exceed the growing need for fast, reliable, low-latency broadband connections. It is available nearly everywhere you go, including work, school, shopping and even in transit, allowing you to be connected over a high-speed network just about anywhere.

Some argue that Wi-Fi is not usable or available outside of the home or workplace. This is rapidly changing as operators, venues, transportation services and even municipalities deploy Wi-Fi networks. It’s true that mobile networks have greater outdoor coverage, but many of the applications used over mobile require only low bandwidth, which is not the case for the applications people typically use over Wi-Fi.

Evolving Wi-Fi Network Applications

In the car, kids often watch video or play games, which are likely carried over mobile. But now many newer cars on the road have a Wi-Fi hotspot built in. The automotive industry is shifting from mobile connections to Wi-Fi as makers update their onboard software options. Autonomous vehicles and robotics are making use of Wi-Fi, too.

Many mobile carriers are moving to Wi-Fi offload to reduce costs and help meet the demands of broadband traffic. Wi-Fi access points (APs) are now at a price point where almost every home has at least one AP or Wi-Fi extender, with many homes having more than one. In work environments, businesses can quickly deploy a Wi-Fi network on their own or with a third party.

Frameworks for a Wider Wi-Fi Network Footprint

With the addition of WFA Passpoint and WBA OpenRoaming™, the public Wi-Fi network footprint continues to expand. Passpoint allows internet service providers (ISPs) to offer a seamless Wi-Fi connection experience like the mobile connection experience. Passpoint is now included in 3GPP specifications as the named function to assist mobile devices in connecting to Wi-Fi networks. OpenRoaming enables access network providers (ANPs) to offer Wi-Fi services to users regardless of their home ISP. This allows providers to offer more locations where subscribers can access Wi-Fi networks. Identity providers (IdPs), such as Google, Samsung and Meta, can also make use of OpenRoaming Wi-Fi network access for their subscribers.

We expect our home utilities to function reliably every day, and now Wi-Fi has become an essential service, supporting our daily activities such as living, learning, working and, of course, playing. For more on Wi-Fi 6/6E, Wi-Fi 7 and Wi-Fi 8, stay up to date here on the CableLabs blog.

Technology Vision

An Inside Look at CableLabs’ New Technology Vision

Key Points

- The Tech Vision aims to drive alignment and scale for the broadband industry and to foster a healthy vendor ecosystem.

- A framework of core areas focuses on defining network capabilities that are flexible, reliable and can deliver quality services and user experiences.

Last month at CableLabs Winter Conference, we unveiled a new way forward for CableLabs’ technology strategy — a transformative roadmap designed to support our member and vendor community through innovation and technology development in the coming years.

Mark Bridges, CableLabs’ Senior Vice President and Chief Technical Officer, recently sat down with David Debrecht, Vice President of Wireless Technologies, to talk more about this new Technology Vision. Check out the video below to learn more about the strategy and how it evolved from conversations with our member CTOs about what’s important to them.

Thinking About Connectivity Differently

The Technology Vision serves as a framework to help align the industry around key issues for CableLabs members and operators. By leveraging what CableLabs does best, we can help provide alignment and scale for the industry and direct our efforts toward developing a healthy vendor ecosystem. Involving our members from the get-go has allowed us to refine the Tech Vision framework to focus on areas that will have the most impact for them and give them a competitive edge.

“It’s all about connectivity,” Bridges explains. “People don’t care how they’re connecting. They don’t think about the access network. They don’t think about the underlying technologies like we do. We’re best positioned to be that first point of connection for people.”

The CableLabs Technology Vision defines three future-focused themes — Seamless Connectivity, Network Platform Evolution and Pervasive Intelligence, Security & Privacy — that encompass the scope of broadband technologies. The goal is to provide a concise model for building adaptive networks and set up a framework of core areas focused on defining network capabilities that are flexible, reliable and able to deliver quality services and user experiences.

In the video, Bridges highlights some of the initiatives CableLabs is already deeply involved in to support this vision, including Network as a Service (NaaS), fiber services and mobile innovations designed to move us closer to seamless connectivity. These areas are mainstays in CableLabs’ technology portfolio, allowing us to drive innovation and advancement while keeping costs low.

Creating an Adaptive Network

What’s ahead for the industry in the near future? Bridges points to a “convergence of connectivity” that enables operators to offer seamless services across different network and device types.

As AI evolves and more intelligent devices enter the market, we’re already seeing increases in network usage. These innovations raise critical questions as we look to the future: How will traffic utilization patterns change? What impacts will we see for reliability and capacity needs?

Bridges envisions a network that is more reliable, more capable and, in a sense, less visible. It’s a network, he says, that “fades into the background. You connect and do the things that you want to do and you don’t have to think about it.”

Do you want to be a part of shaping that future? Our working groups bring CableLabs members and vendors together to make meaningful contributions that advance the industry as a whole. Reach out to us to learn how you can participate in this work and help make an impact.