Network as a Service

The Edge Compute Advantage: Turning Broadband Infrastructure Into Intelligence

Key Points

- The broadband industry’s existing infrastructure makes operators uniquely positioned to capitalize on edge computing.

- CableLabs is advancing edge deployment through standardization efforts including CAMARA Edge Cloud APIs, lab demonstrations and our Network as a Service working group.

- We invite interested members of our operator and vendor community to engage in this work by joining the Network as a Service Working Group, which meets next on January 21.

As AI workloads become more distributed and latency-sensitive, the network edge is emerging as a strategic differentiator for broadband operators. With infrastructure that already spans from customer premises equipment to headends and regional data centers, operators are uniquely positioned to host edge compute, including advanced edge AI, in ways that reduce cost, improve performance and unlock new revenue models.

At CableLabs, we've been exploring this evolution through hands-on research, standards engagement and lab-based proofs of concept. Our goal is to help the industry shape what comes next.

Why Edge Compute and Edge Hosting Matter Now

Our recent work demonstrates that many high-value AI workloads perform best when executed closer to where data is generated. Predictive network maintenance, for example, benefits from localized inference to detect RF impairments before customers experience issues. Edge-based video analytics enable real-time visual intelligence while keeping sensitive footage local for privacy and compliance.

These use cases are detailed in our Edge LLM Hosting paper and reinforce a central insight: the cable footprint is already an edge cloud, ready to be activated for modern applications.

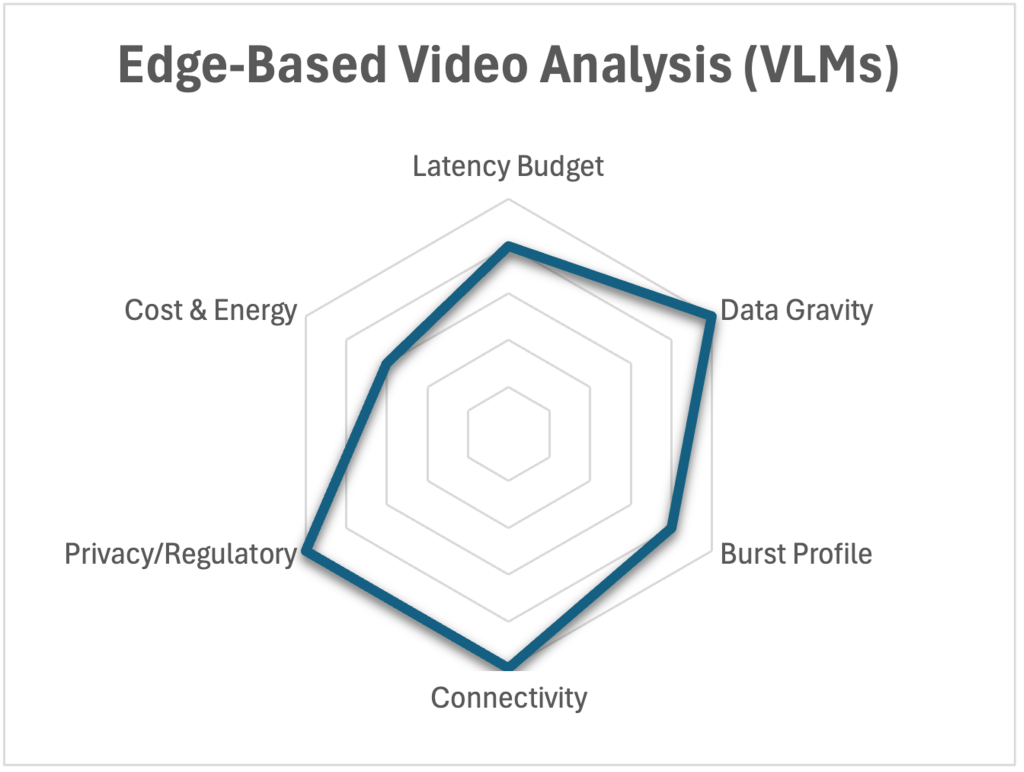

Not every AI workload belongs at the edge. We're developing selection criteria to help operators evaluate where to place different workloads. The radar chart below shows how edge-based video analysis scores across these dimensions.

If you missed our SCTE TechExpo session on operationalizing edge-hosted AI, the recording is available on-demand.

CableLabs’ work in edge compute represents a critical component of the Technology Vision for the industry, specifically within the Platform Evolution vector. By building adaptable platforms through cloud-based virtualization, edge computing and converged provisioning systems, broadband operators gain the flexibility to deliver and enable new network services and applications that meet evolving customer needs.

Advancing Standardization and Real-World Deployments

To accelerate industry adoption, CableLabs continues contributing to the CAMARA Edge Cloud API work, providing a unified way for developers to discover operator edge capabilities and deploy edge applications and inference workloads to them. As operators evaluate how to expose compute resources, the Compute Services APIs offer a relevant reference point for shaping future offerings.

We've paired these standardization efforts with real implementations in CableLabs' 10G Lab, including demonstrations of portable AI workloads and CAMARA-aligned Edge Cloud APIs. Our members continue advancing production-readiness by virtualizing major network functions such as the CCAP/CMTS and CDN infrastructure. These are foundational steps toward flexible, cost-efficient compute services delivered directly from the operator network.

Partnering Through NaaS on Edge Compute

Our Network as a Service (NaaS) program brings these technical threads together. As operators consider how to productize edge compute, standardize APIs and scale AI workloads, CableLabs is expanding discussions within the NaaS Working Group. Another Technology Vision priority, NaaS helps advance the Differentiated Services vector, focusing on context-aware services that enable seamless user experiences and introduce new revenue opportunities for operators.

We're seeking member and vendor input on priorities ranging from monetizing low-latency compute to enabling partner ecosystems to enhancing operational efficiency through edge-deployed AI. We're also working on solutions for operators planning infrastructure refreshes and want to ensure their upgrades support emerging edge AI workloads.

Join the Conversation

Join us for the next NaaS monthly update on Wednesday, January 21. We'll share the latest progress, showcase demonstrations including our CAMARA Edge Cloud API work, and engage directly with members and vendors who are shaping the roadmap.

If you’re interested in this work, stay up to date by joining the working group, which is open to CableLabs member and vendor communities. Meeting details are available when you join the group.

Want to dive deeper into edge computing? Make plans to join us at CableLabs Tech Summit 2026 — April 27–29 in Westminster, Colorado. Tech Summit is an exclusive networking and knowledge-sharing event for our member operators and exhibiting vendor community, building upon the successful format of its predecessor, CableLabs Winter Conference.

In the session “Revolutionizing Customer Experience with Intelligence at the Edge,” we’ll explore what it really takes to deploy an edge application using open-source infrastructure. Register soon to join the conversation. When you complete your registration by January 31, you’ll receive an exclusive CableLabs YETI tumbler when you check in for the conference.

Have questions or want to learn more about edge computing? Contact me to discuss how this future-forward approach can fit into your network strategy.

Convergence

Revolutionizing Telecommunications with Intent-Based Quality on Demand APIs

In today’s digitally connected world, where everyone expects high-speed and reliable connections for their data-intensive activities, quality of service (QoS) plays the starring role. Think of QoS as the traffic cop of the digital highway, ensuring that data gets where it needs to go smoothly and quickly. For service providers, QoS is more than just traffic management. It’s a way to ensure customer satisfaction, differentiate their services and ultimately succeed in the ever-competitive telecommunications market.

To easily expose these QoS capabilities to customers and third-party developers, CableLabs is working with CAMARA to publish a set of intent-based APIs. CAMARA is an open-source project hosted by the Linux Foundation, with active participation from service providers, third-party developers, hyperscalers and a diverse vendor ecosystem. GSMA is standardizing many of the CAMARA APIs as a part of its Open Gateway initiative, providing a path to market and adoption for these services.

This work was an open collaboration with CAMARA and the participating companies as they continue to embrace wireline technologies. The CAMARA community provides an easy path for operators and developers to contribute and consume network APIs. More engagement from wireline operators will drive this further.

The Game-Changing Extension

Before we delve into the details of intent-based APIs and CAMARA, I’d like to highlight CableLabs’ recent contribution and demonstrate why we think it’s an important milestone in the continuous network transformation that our members are undergoing.

As you may be aware, CableLabs’ member companies frequently have fiber and mobile offerings in addition to their DOCSIS® networks. Each of these networks has a variety of mechanisms for managing QoS settings and establishing Quality on Demand (QoD) sessions. Although we want to expose these capabilities to end users, we don’t want to expose the added complexity. The changes we’re introducing allow for virtually any QoS profile to be defined using a common set of attributes that will work across both wireless and wireline networks. Before our QoS profile contribution, only four predefined profiles were available, and they didn’t map well to wireline offerings.

The initial profiles were also based on general guidance and didn’t provide a specific set of capabilities. This isn’t a criticism of the initial work. It was a great start, and these APIs are still in an alpha release. That means that the APIs are still in the early phases of development, and we expect additional changes before a stable release happens.

Details of the CAMARA Quality on Demand API

The CAMARA Quality on Demand API is available here. The purpose of this API is to dynamically manage QoD sessions for specific devices or traffic flows.

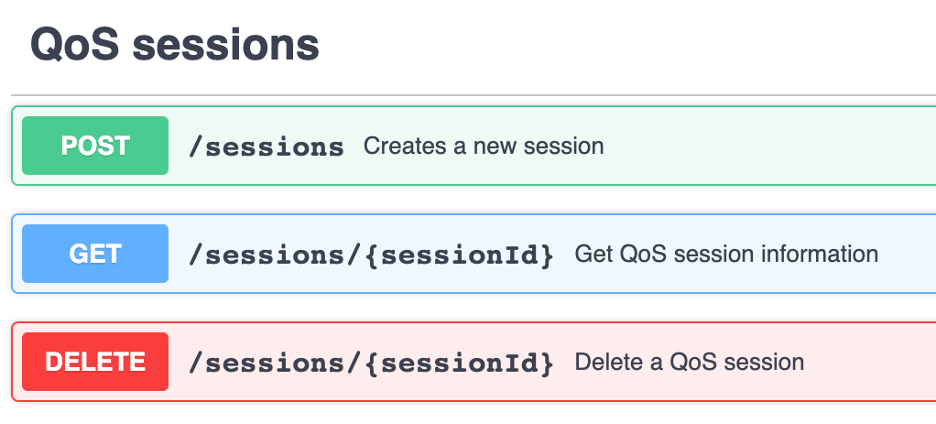

Readers who have developed web services will recognize the pattern in the diagram, which shows the three methods for managing a QoS session. The methods are as follows:

- Create session (POST)—Requests that the service provider establish a new QoS session based on the device and subscriber identification information and the unique name for the QoS profile for a given duration.

- Get session (GET)—Retrieves the information about a session based on its session ID.

- Delete session (DELETE)—Terminates a session before it has expired.

In addition to defining the calls above, the API defines all the required and optional attributes associated with each call. This is all written using OpenAPI version 3.0.3. The benefit of using OpenAPI is that there are dozens, if not hundreds, of tools out there to generate code based on OpenAPI descriptions.
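As a rough illustration of the three calls, the sketch below builds (but does not send) one HTTP request per method using only the Python standard library. The base URL, the `/sessions` path and the JSON field names are assumptions made for this example; check the published OpenAPI description for the authoritative paths and attributes.

```python
import json
from urllib.request import Request

# Hypothetical endpoint; a real service provider publishes its own base URL.
BASE_URL = "https://api.example.com/qod/v0"


def create_session_request(device_ip: str, qos_profile: str, duration_s: int) -> Request:
    """Build a POST asking the provider to establish a new QoD session."""
    body = {
        "device": {"ipv4Address": device_ip},  # assumed field name for device identification
        "qosProfile": qos_profile,             # unique name of the QoS profile
        "duration": duration_s,                # requested session duration in seconds
    }
    return Request(
        f"{BASE_URL}/sessions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def get_session_request(session_id: str) -> Request:
    """Build a GET that retrieves a session by its session ID."""
    return Request(f"{BASE_URL}/sessions/{session_id}", method="GET")


def delete_session_request(session_id: str) -> Request:
    """Build a DELETE that terminates a session before it expires."""
    return Request(f"{BASE_URL}/sessions/{session_id}", method="DELETE")


# Example: inspect the request that would be sent to create a one-hour session.
request = create_session_request("203.0.113.10", "speed-boost", 3600)
print(request.get_method(), request.full_url)
```

Passing any of these objects to `urllib.request.urlopen` would send it. Authentication is omitted here because it depends on the provider.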

Details About QoS Profile Attributes

These new QoS attributes are intended to describe most QoS profiles across a wide variety of wireless and wireline networks. Each network type describes quality of service in its own way. The CAMARA QoS Profile has distilled these settings into a common set of attributes that can be applied across a broad set of access networks. Since these attributes are defined by the service provider, the provider can choose a subset of these capabilities to expose. It’s important to read the definitions of these attributes in the CAMARA Quality on Demand API before you use them since these definitions may vary slightly from a specific access network implementation.

We’ve added 15 attributes for QoS profiles. Don’t let that overwhelm you; only the name and status are required. The list of attributes is here, with detailed descriptions provided in the QoD API specification.

- name

- description

- status

- targetMinUpstreamRate

- maxUpstreamRate

- maxUpstreamBurstRate

- targetMinDownstreamRate

- maxDownstreamRate

- maxDownstreamBurstRate

- minDuration

- maxDuration

- priority

- packetDelayBudget

- jitter

- packetErrorLossRate
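To make the "only name and status are required" point concrete, here is a minimal sketch that models the 15 attributes as a Python dataclass. The attribute names come from the list above. The Python types, the `"ACTIVE"` status value and the `speed-boost` profile name are simplifying assumptions for illustration, and the QoD API specification remains the reference for the real types and units.

```python
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class QosProfile:
    # Required attributes
    name: str
    status: str
    # Optional attributes (types simplified for this sketch)
    description: Optional[str] = None
    targetMinUpstreamRate: Optional[str] = None
    maxUpstreamRate: Optional[str] = None
    maxUpstreamBurstRate: Optional[str] = None
    targetMinDownstreamRate: Optional[str] = None
    maxDownstreamRate: Optional[str] = None
    maxDownstreamBurstRate: Optional[str] = None
    minDuration: Optional[str] = None
    maxDuration: Optional[str] = None
    priority: Optional[int] = None
    packetDelayBudget: Optional[str] = None
    jitter: Optional[str] = None
    packetErrorLossRate: Optional[int] = None


def to_payload(profile: QosProfile) -> dict:
    """Return only the attributes the provider chose to expose (non-None)."""
    return {key: value for key, value in asdict(profile).items() if value is not None}


# A provider could expose a profile with just the two required attributes.
minimal = QosProfile(name="speed-boost", status="ACTIVE")
print(to_payload(minimal))
print(len(fields(QosProfile)), "attributes defined")
```

Dropping unset attributes in `to_payload` mirrors the idea that a provider exposes only a subset of the capabilities.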

Benefits of Open Source

One of the great advantages of this project’s open-source nature is that these APIs are available to anyone to try. There’s no need to wait for a stable release or an official product to kick the tires on these APIs. Anyone can download them today and give them a try.

In fact, that’s how CableLabs started working with CAMARA. As part of a “Speed Boost” proof of concept to highlight the convergence architecture, we needed an API that provided many of the capabilities that CAMARA offers. The initial “Speed Boost” used the version of the CAMARA Quality on Demand API that was available at the time. We’ll expand on this more during SCTE Cable-Tec Expo ’23 in October.

Through our work with the APIs, we found opportunities to make improvements. The CAMARA community was very welcoming to and supportive of our contribution. These changes have now been merged into the 0.9.0 release.

Next Steps

We’re already working on extensions to the Quality on Demand API to allow for a richer set of query capabilities to find the QoS profile that meets individual needs. Please provide feedback and support for these features here and here.

Stay tuned for more updates about CAMARA, the GSMA Open Gateway initiative and CableLabs’ continued contributions to the telecommunications sector. These entities are working together to shape the future of telecommunications, and it’s an exciting time to watch this transformation unfold.

Convergence

Up Ahead: Better Connections, Happier Users and Lower Costs

Whether at home, in the office, at a coffee shop or on the go, we all expect a dependable internet connection. Even when we're offered bundled services that combine wireless and wireline network access, uninterrupted connectivity often isn't as straightforward as it sounds. Simply providing connectivity over multiple access network types isn't sufficient for meeting customers’ expectations, and managing these as independent networks drives operational costs and complexity.

Wireless-wireline convergence (WWC) solves these problems for consumers and operators. WWC—or fixed-mobile convergence, as it’s sometimes called—enables ubiquitous connectivity that works seamlessly, meeting customers’ needs and simplifying network operations.

CableLabs is working with member and vendor communities to make this a reality. We’ve recently published a white paper that lays out the roadmap to WWC.

How Wireless-Wireline Convergence Works

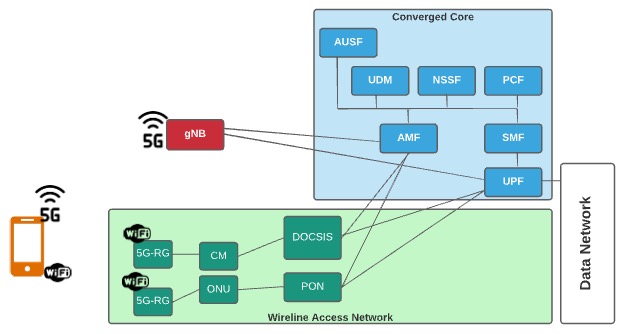

The WWC architecture simplifies networks by eliminating redundant capabilities that exist across wireline and wireless access networks and enabling seamless operations across access technologies. These redundancies, which include subscriber databases, routing functions and security capabilities, are converged under the WWC architecture.

At the heart of the WWC architecture is a single converged core based on the 3GPP 5G mobile core. The mobile core was selected as the foundation for the converged core over wireline cores since mobile cores support devices moving across access networks.

Under the WWC architecture, a single converged core will enable seamless wireless access across Wi-Fi and cellular networks.

When Will Network Convergence Become a Reality?

We’d all like to snap our fingers and begin reaping the benefits of a fully converged network tomorrow. However, because of a substantial installed base, as well as gaps in current products, standards and specifications, this is going to be a journey. Fortunately, the foundation for WWC is already being delivered with help from current technologies.

CableLabs’ member and vendor collaboration will help resolve the gaps in converging DOCSIS® networks, passive optical networking (PON) and Wi-Fi with mobile networks. Existing CableLabs and other industry specifications will be updated and extended, and new specs will be developed.

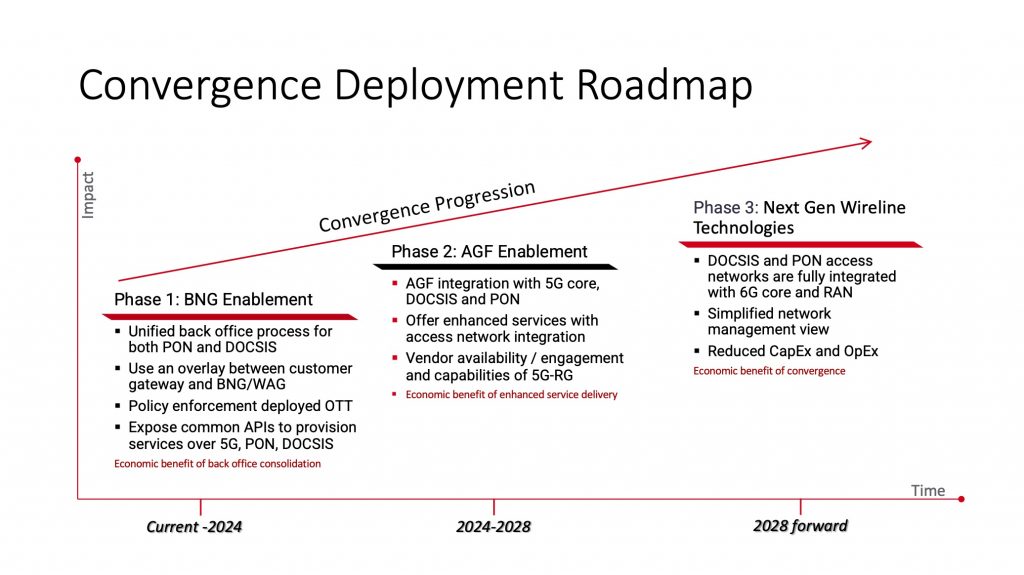

We’ve mapped out the WWC roadmap into three general phases—starting by connecting what is deployed today and progressing towards fully converged networks.

The roadmap evolves toward a complete integration of wireline and wireless networks, beginning within the decade.

To go deeper and learn more about WWC, the phases of the deployment roadmap and what fixed-mobile convergence means for our end-user experience, check out the new white paper.

Security

Transparent Security Outperforms Traditional DDoS Solution in Lab Trial

Transparent Security is an open-source solution for identifying and mitigating distributed denial of service (DDoS) attacks and the devices (e.g., Internet of Things [IoT] sensors) that are the source of those attacks. Transparent Security is enabled through a programmable data plane (e.g., “P4”-based) and uses in-band network telemetry (INT) technology for device identification and mitigation, blocking attack traffic where it originates on the operator’s network.

Cox Communications and CableLabs conducted a proof-of-concept test of the Transparent Security solution in the Cox lab in late 2020. Testing was primarily focused on the following major objectives:

- Compare and contrast performance of the Transparent Security solution against that of a leading commercially available DDoS mitigation solution.

- Validate that INT-encapsulated packets can be transported across an IPv4/IPv6/Multiprotocol Label Switching (MPLS) network without any adverse impact to network performance.

- Validate that the Transparent Security solution can be readily implemented on commercially available programmable switches.

This trial compared the effectiveness of Transparent Security with that of a leading DDoS mitigation solution. Transparent Security was able to identify and mitigate attacks in one second as compared with one minute for the leading vendor. We also validated that inserting and removing the INT header had no observable impact on throughput or latency.

The History and Updates of Transparent Security

We initially released the Transparent Security architecture and open-source reference implementation in October 2019. Since then, we’ve achieved several milestones:

- We added source-only metadata to the P4 in-band telemetry specification, along with Transparent Security as an example implementation.

- We added support to collate multiple packet headers in a single telemetry report.

- We released a document titled “Transparent Security: Personal Data Privacy Considerations.”

- We created a Transparent Security landing page.

Why Cox Is Interested

As IoT devices continue to proliferate, the number of devices that can be compromised and used to participate in DDoS attacks also increases. At the same time, the frequency of DDoS attacks continues to grow because of the widespread availability of DDoS-for-hire sites that allow individuals to launch DDoS attacks for relatively little cost. These factors contribute to a trend of malicious traffic increasingly using upstream bandwidth on the access network.

Although currently available DDoS mitigation solutions can monitor for outbound attacks, they’re primarily focused on mitigating DDoS attacks directed at endpoints on the operator’s network. These solutions use techniques such as BGP diversion and Flowspec to drop traffic as it comes into the network. However, mitigating outbound attacks using these techniques isn’t effective because the malicious traffic will have already traversed the access network, where it has the greatest negative impact, before the traffic can be diverted to a scrubber or dropped by a Flowspec rule.

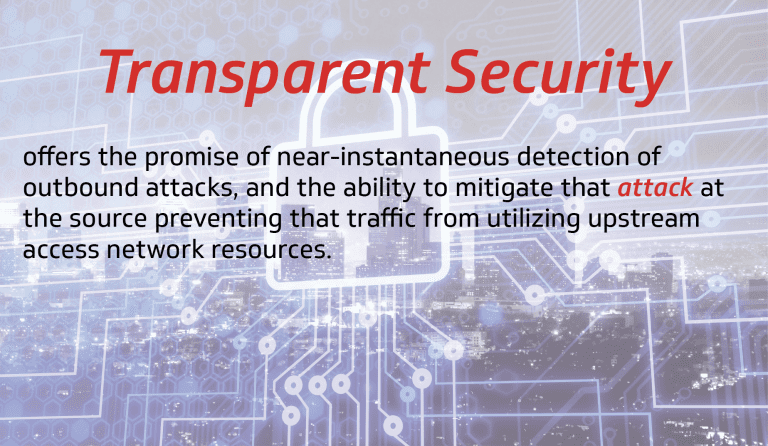

Transparent Security offers the promise of near-instantaneous detection of outbound attacks, as well as the ability to mitigate that attack at the source, on the customer premises equipment (CPE), thereby preventing that traffic from using upstream access network resources.

In addition to Transparent Security’s DDoS mitigation capabilities, there are additional benefits to network performance/visibility in general. Implementation of Transparent Security on the CPE means that network operators can derive the specific device type associated with a given flow. This allows the operator to determine the type of IoT devices being leveraged in the attack.

This also opens myriad other possibilities—for example, reducing truck rolls by enabling customer service personnel to determine that a customer’s issue is with one specific device versus all the devices on the internal network. Another example would be the capability to track the path a given packet followed through the network by examining the INT metadata.

Consumers will see a direct benefit from Transparent Security. Once compromised devices are identified, the consumer can be notified to resolve the issue or, alternatively, rules can be pushed to the CPE to isolate that device from the internet while allowing the consumer’s other devices continued access. Such isolation mitigates the additional harm coming from compromised devices. This additional harm can take the form of degraded performance, exfiltration of private data, breaks in presumed confidentiality in communications, as well as the traffic consumed through DDoS. Less malicious traffic on the network provides for a better overall customer experience.

Lab Trial Setup

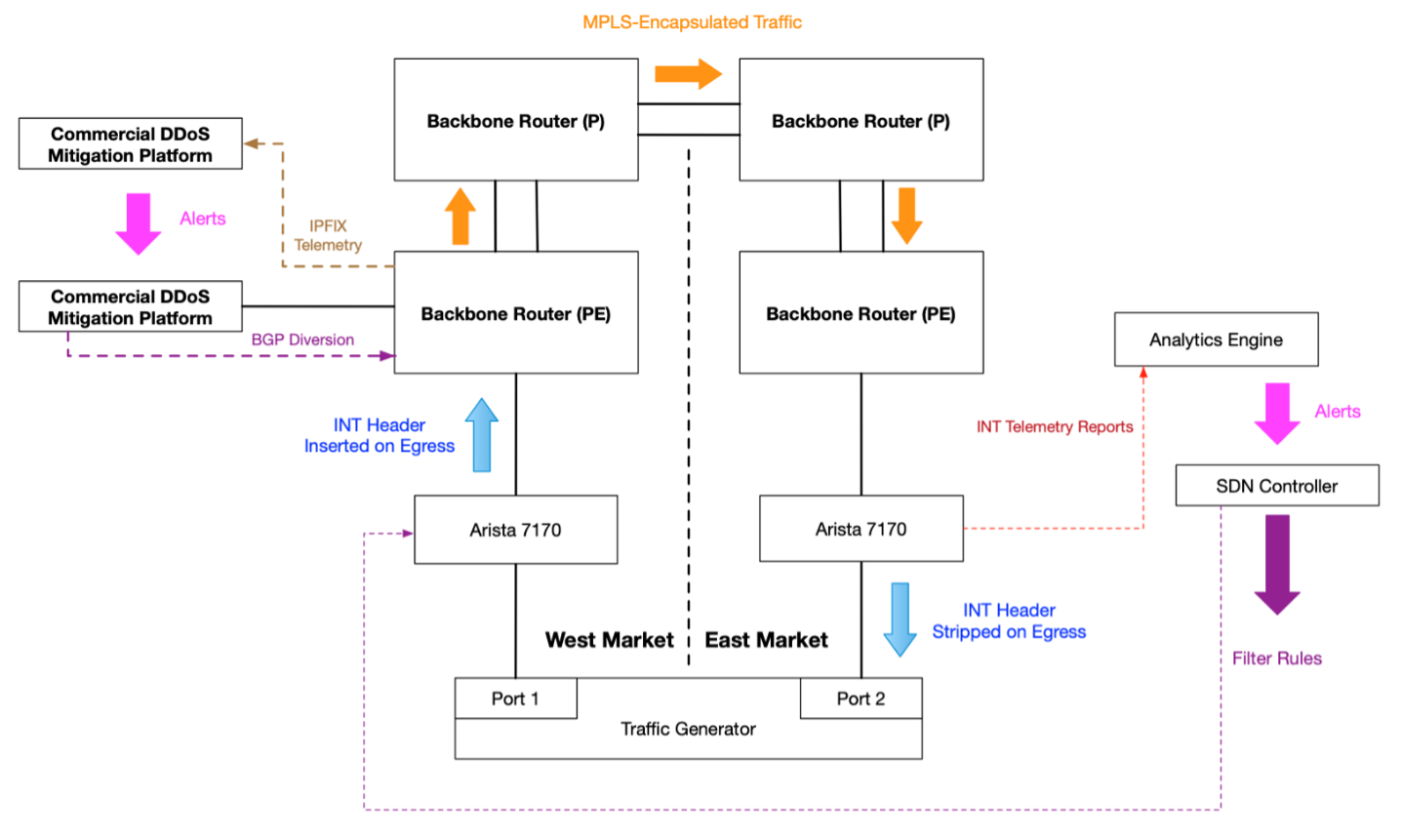

The test environment was designed to simulate traffic originating from the access network, carried over the service provider’s core backbone network, and targeting another endpoint on the service provider’s access network in a different market (e.g., an “east-to-west” or “west-to-east” attack).

The diagram provides a high-level overview of the lab test environment.

In the lab trial, various types of DDoS traffic (UDP/TCP over IPv4/IPv6) were generated by the traffic generator and sent to the West Market Arista switch, which used a custom P4 profile to insert an INT header and metadata before sending the traffic to the West Market PE router. The traffic then traversed an MPLS label-switched path (LSP) to the East Market PE router, before being sent to the East Market Arista switch, which used a custom P4 profile to generate INT telemetry reports and to strip the INT headers before sending the original IPv4/IPv6 packet back to the traffic generator.

Results

When comparing and contrasting the performance of the Transparent Security solution against that of a leading commercially available DDoS mitigation solution, the lab test results were very promising. Detection of outbound attacks was rapid, taking approximately one second, and Transparent Security deployed the mitigation in five seconds. The commercial solution took 80 seconds to detect and mitigate the attack. These tests were run with randomized UDP floods; UDP reflection and TCP state exhaustion attacks were identified and mitigated by both solutions. In this trial, only packets related to the attack were dropped. Packets not related to the attack were not dropped.
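The idea of detecting a flood from per-packet telemetry and then dropping only the attack traffic can be sketched in a few lines. This is an illustrative toy, not the Transparent Security detection logic: the `TelemetryReport` fields, the one-second window and the 1,000-packet threshold are all assumptions chosen for the example.

```python
from collections import Counter
from typing import Iterable, NamedTuple, Set, Tuple


class TelemetryReport(NamedTuple):
    timestamp: float    # seconds since the start of the capture
    source_device: str  # hypothetical device identifier carried in INT metadata
    destination: str    # destination IP address of the packet


Flow = Tuple[str, str]


def find_attackers(
    reports: Iterable[TelemetryReport],
    window_start: float,
    window_s: float = 1.0,   # assumed detection window
    threshold: int = 1000,   # assumed packets-per-window limit
) -> Set[Flow]:
    """Return (source device, destination) pairs that exceed the threshold."""
    counts = Counter(
        (r.source_device, r.destination)
        for r in reports
        if window_start <= r.timestamp < window_start + window_s
    )
    return {flow for flow, count in counts.items() if count > threshold}


def should_drop(report: TelemetryReport, blocked: Set[Flow]) -> bool:
    """Drop only packets that belong to a flagged flow; pass everything else."""
    return (report.source_device, report.destination) in blocked


# Example: one noisy device and one well-behaved device in the same second.
reports = [TelemetryReport(0.0005 * i, "camera-1", "203.0.113.9") for i in range(1500)]
reports += [TelemetryReport(0.05 * i, "laptop-1", "198.51.100.7") for i in range(10)]
blocked = find_attackers(reports, window_start=0.0)
print(blocked)
```

Keying the block list on the source device as well as the destination is what lets unrelated traffic pass untouched.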

The Transparent Security solution was implemented on commercially available programmable switches provided by Arista. These switches are being deployed in networks today. No changes to the network operating system (NOS) were required to implement Transparent Security.

The tests validated that INT-encapsulated packets can be transported across an IPv4/IPv6/MPLS network without any adverse impact. There was no observable impact to throughput when adding INT headers, generating telemetry reports or mitigating the DDoS attacks. We validated that the traffic ran at line speed, with the INT headers increasing the packet size by an average of 2.4 percent.

Application response time showed no variance with or without enabling Transparent Security. This suggests that there will be no measurable impact to customer traffic when the solution is deployed in a production network.

Conclusion and Next Steps

Transparent Security uses in-band telemetry to help identify the source of the DDoS attack.

This trial focused on using Transparent Security on switches inside the service provider’s network. For the full impact of Transparent Security to be realized, its reach needs to be extended to gateways on the customer premises. Such a configuration can mitigate an attack before it uses any network bandwidth outside of the home and will help identify the exact device that is participating in the attack.

This testing took place on a custom P4 profile based on our open-source reference implementation. We would encourage vendors to add INT support to their devices and operators to deploy programmable switches and INT-enabled CPEs.

Take the opportunity today to explore how INT and Transparent Security can solve problems and improve traffic visibility across your network.

Virtualization

Give your Edge an Adrenaline Boost: Using Kubernetes to Orchestrate FPGAs and GPU

Over the past year, we’ve been experimenting with field-programmable gate arrays (FPGAs) and graphics processing units (GPUs) to improve edge compute performance and reduce the overall cost of edge deployments.

Unless you’ve been under a rock for the past 2 years, you’ve heard all the excitement about edge computing. For the uninitiated, edge computing allows for applications that previously required special hardware to be on customer premises to run on systems located near customers. These workloads require either very low latency or very high bandwidth, which means they don’t do well in the cloud. With many of these low-latency applications, microseconds matter. At CableLabs, we’ve been defining a reference architecture and adapting Kubernetes to better meet the low-latency needs of edge computing workloads.

CableLabs engineer Omkar Dharmadhikari wrote a blog post in May 2019 called Moving Beyond Cloud Computing to Edge Computing, outlining many of the opportunities for edge computing. If you aren’t familiar with the benefits of edge computing, I’d suggest reading that post before you read further.

New Features

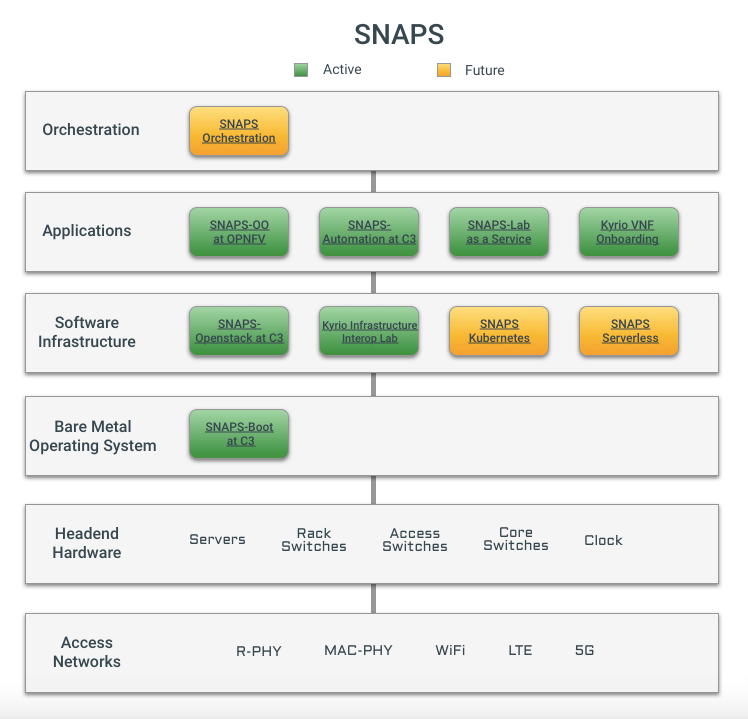

As part of our efforts around Project Adrenaline, we’ve shared tools to ease the management of hardware accelerators in Kubernetes. These tools are available in the SNAPS-Kubernetes GitHub repository.

- Field-programmable gate array (FPGA) accelerator integration

- Graphics processing unit (GPU) accelerator integration
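For readers new to accelerators in Kubernetes: the platform generally exposes them to workloads as extended resources advertised by a device plugin, and a pod asks for one in its resource limits. The sketch below builds such a pod manifest as a Python dictionary and prints it as JSON. `nvidia.com/gpu` is the resource name advertised by NVIDIA's device plugin; the pod name, the image and the FPGA resource name are hypothetical, and this post does not specify which resource names the SNAPS-Kubernetes tooling exposes.

```python
import json


def accelerator_pod(name: str, image: str, resource_name: str, count: int = 1) -> dict:
    """Build a pod manifest that requests `count` units of an extended resource."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources such as accelerators are requested via limits.
                    "resources": {"limits": {resource_name: count}},
                }
            ],
        },
    }


# GPU example; assumes the NVIDIA device plugin is installed on the cluster.
gpu_pod = accelerator_pod("gpu-inference", "registry.example.com/inference:latest", "nvidia.com/gpu")

# FPGA example; the resource name below is a placeholder, not a real plugin's name.
fpga_pod = accelerator_pod("fpga-filter", "registry.example.com/filter:latest", "example.com/fpga")

print(json.dumps(gpu_pod, indent=2))
```

The scheduler then places each pod only on a node that advertises the requested resource.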

Hardware Acceleration

FPGAs and GPUs can be used as hardware accelerators. There are three factors that we consider when moving a workload to an accelerator:

- Time requirements

- Power requirements

- Space requirements

Time, space and power are all critical for edge deployments. You have limited space and power for each location. The time needed to complete the operation must fall within the desired limits, and certain operations can be much faster running on an accelerator than on a CPU.

Writing applications for accelerators can be more difficult because there are fewer language options than general-purpose CPUs have. Frameworks such as OpenCL attempt to bridge this gap and allow a single program to work on CPUs, GPUs and FPGAs. Unfortunately, this interoperability comes with a performance cost that makes these frameworks a poor choice for certain edge workloads. The good news is that several major accelerator hardware manufacturers are targeting the edge, releasing frameworks and pre-built libraries that will bridge this performance gap over time.

Although we don’t have any hard-and-fast rules today for what workloads should be accelerated and on which platform, we have some general guidelines. Integer (whole number) operations are typically faster on a general-purpose CPU. Floating-point (decimal number) operations are typically faster on GPUs. Bitwise operations, manipulating ones and zeros, are typically faster on FPGAs.

Another thing to keep in mind when deciding where to deploy a workload is the cost of transitioning that workload from one compute platform to another. There’s a penalty for every memory copy, even within the same server. This means that running consecutive tasks within a pipeline on one platform can be faster than running each task on the platform that is best for that task.
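A toy cost model shows why this can happen. In the sketch below, every stage has a run time on each platform, and each switch between platforms adds a fixed copy penalty. All of the timings are invented for illustration, and the model ignores the initial copy of input data onto the first platform.

```python
from typing import Dict, List


def pipeline_time(stages: List[Dict[str, float]], placement: List[str], copy_penalty: float) -> float:
    """Total time for a pipeline, charging a penalty whenever the platform changes."""
    total = 0.0
    previous = None
    for stage_times, platform in zip(stages, placement):
        total += stage_times[platform]
        if previous is not None and platform != previous:
            total += copy_penalty  # data must be copied to the new platform
        previous = platform
    return total


# Hypothetical per-stage run times in milliseconds.
stages = [
    {"cpu": 4.0, "gpu": 1.0},
    {"cpu": 2.0, "gpu": 2.5},
    {"cpu": 5.0, "gpu": 1.5},
]

best_per_stage = ["gpu", "cpu", "gpu"]  # fastest platform for each stage in isolation
single_platform = ["gpu", "gpu", "gpu"]  # keep the whole pipeline on the GPU

print(pipeline_time(stages, best_per_stage, copy_penalty=1.0))   # 6.5
print(pipeline_time(stages, single_platform, copy_penalty=1.0))  # 5.0
```

With these numbers, the two platform switches cost more than the half millisecond saved by running the middle stage on the CPU.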

Accelerator Installation Challenges

When you use accelerators such as FPGAs and GPUs, managing the low-level software (drivers) to run them can be a challenge. Additional hooks to install these drivers during the OS deployment have been added to SNAPS-Boot, including examples for installing drivers for some accelerators. We encourage you to share your experiences and help us add support for a broader set of accelerators.

Co-Innovation

These features were developed in a co-innovation partnership with Altran. We jointly developed the software and collaborated on the proofs of concept. You can discover more about our co-innovation program on our website, which includes information about how to contact CableLabs with a co-innovation opportunity.

Extending Project Adrenaline

Project Adrenaline only scratches the surface of what’s possible with accelerated edge computing. The uses for edge compute are vast and rapidly evolving. As you plan your edge strategy, be sure to include the capability to manage programmable accelerators and reduce your dependence on single-purpose ASICs. Deploying redundant and flexible platforms is a great way to reduce the time and expense of managing components at thousands or even millions of edge locations.

As part of Project Adrenaline, SNAPS-Kubernetes ties together all these components to make it easy to try in your lab. With the continuing certification of SNAPS-Kubernetes, we’re staying current with releases of Kubernetes as they stabilize. SNAPS-Boot has additional features to easily prepare your servers for Kubernetes. As always, you can find the latest information about SNAPS on the CableLabs SNAPS page.

Contact Randy to get your adrenaline fix at Mobile World Congress in Barcelona, February 24-27, 2020.

Security

Vaccinate Your Network to Prevent the Spread of DDoS Attacks

CableLabs has developed a method to mitigate Distributed Denial of Service (DDoS) attacks at the source, before they become a problem. By blocking these devices at the source, service providers can help customers identify and fix compromised devices on their network.

DDoS Is a Growing Threat

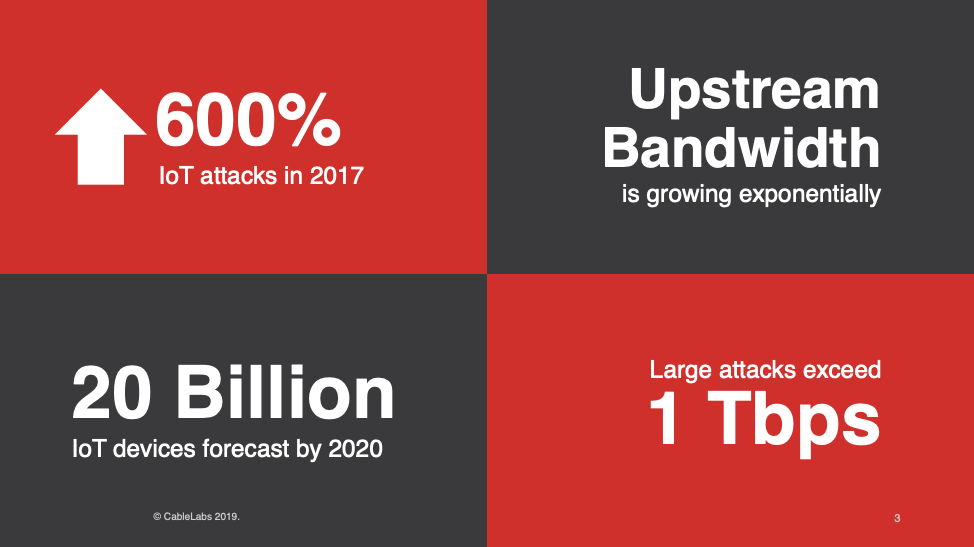

DDoS attacks and other cyberattacks cost operators billions of dollars, and these attacks continue to grow in size and scale, with some exceeding 1 Tbps. The number of Internet of Things (IoT) devices also continues to grow rapidly, many of those devices have poor security, and upstream bandwidth is ever increasing. This perfect storm has led to a surge in IoT attacks, which increased by more than 600 percent between 2016 and 2017 alone. With an estimated increase in the number of IoT devices from 5 billion in 2016 to more than 20 billion in 2020, we can expect the number of attacks to continue this upward trend.

As applications and services are moved to the cloud and the reliance on connected devices grows, the impact of DDoS attacks will continue to worsen.

Enabled by the Programmable Data Plane

Don’t despair! New technology brings new solutions. Instead of mitigating a DDoS attack at the target, where it’s at full strength, we can stop the attack at the source. With the use of P4, a programming language designed for managing traffic on the network, the functionality of switches and routers can be updated to provide capabilities that aren’t available in current switches. By coupling P4 programs with ASICs built to run these programs at high speed, we can do this without sacrificing network performance.

As service providers update their networks with customizable switches and edge compute capabilities, they can roll out these new features with a software update.

Comparison Against Traditional DDoS Mitigation Solutions

| Feature | Transparent Security | Typical DDoS solution |
| --- | --- | --- |
| Mitigates ingress traffic | X | X |
| Mitigates egress traffic | X | |
| Deployed at network peering points | X | X |
| Deployed at hub/head end | X | |
| Deployed at customer premises | X | |
| Requires specialized hardware | | X |
| Mitigates with white box switches | X | |
| Works with customer gateways | X | |
| Identifies attacking device | X | |
| Time to mitigate attack | Seconds | Minutes |
| Packet header sample rate | 100% | < 0.1% |

Transparent Security can mitigate ingress and egress traffic at every point in the network, from the customer premises to the core of the network. To mitigate ingress attacks, typical DDoS mitigation solutions are deployed only at the edge of the network. This means that they don’t protect the network from internal DDoS attacks and can allow their networks to be weaponized.

Transparent Security runs on white box switches and software at the gateway. This provides a wide variety of vendor options and is compatible with open standards, such as P4. Typical solutions frequently rely on the purchase of specialized hardware called scrubbers. It isn’t feasible to deploy these at the customer premises. Finally, Transparent Security can look at the header for every egress packet to quickly identify attacks originating on the service provider’s network. Typical solutions sample only 1 in 5,000 packets.
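The sampling figures are easy to put in perspective with a little arithmetic. The snippet below converts a 1-in-5,000 sample into a percentage and estimates how many packet headers each approach would inspect during a flood. The flood size of one million packets is a made-up number for illustration.

```python
def sample_rate_percent(sample_every: int) -> float:
    """Percentage of packet headers inspected when sampling 1 in `sample_every`."""
    return 100 / sample_every


def expected_headers_seen(packets: int, sample_every: int) -> float:
    """Expected number of headers inspected out of `packets` packets."""
    return packets / sample_every


flood_packets = 1_000_000  # hypothetical attack size

print(sample_rate_percent(5000))                   # 0.02 (percent)
print(expected_headers_seen(flood_packets, 5000))  # 200.0
print(expected_headers_seen(flood_packets, 1))     # 1000000.0
```

At 0.02 percent, a sampled solution sees about 200 headers from that flood, while inspecting every packet sees all one million.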

Just the Beginning

Transparent Security is just the beginning, and one of many solutions that can be deployed to improve broadband services. Through the programmable data plane, network management will become vastly smarter, and new services will benefit, from Micronets to firewall and managed router as a service.

Join the Project

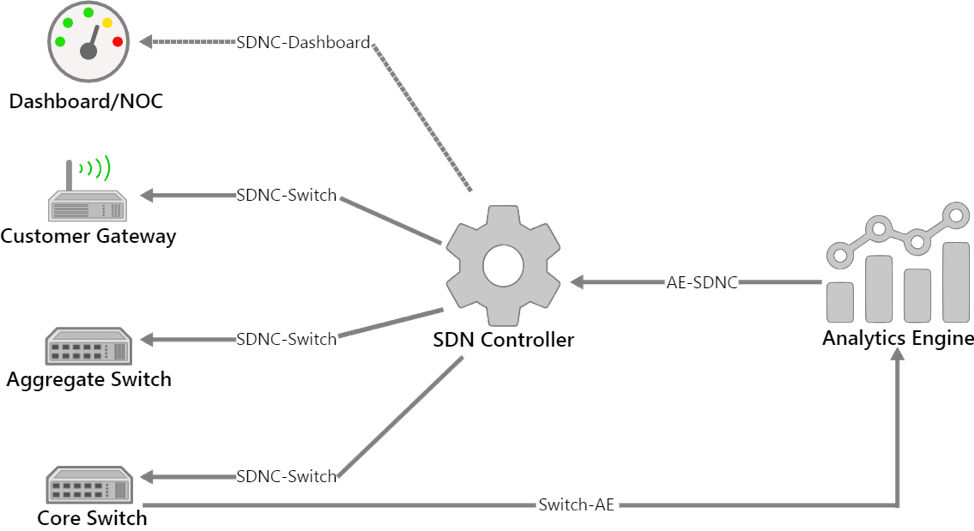

CableLabs is engaging members and vendors to define the interfaces between the Transparent Security components. This should create an interoperable solution with a broad vendor ecosystem. The SDNC-Dashboard, AE-SDNC, SDNC-Switch and Switch-AE interfaces shown in the diagram have been identified for the initial iteration. Section 6 of the white paper describes these interfaces in detail.

The Transparent Security architecture and interface definitions will expand over time to support additional use cases. These interfaces leverage existing industry standards when possible.

You can see related projects here. You can find out more information on 10G and security here.

Virtualization

CableLabs Announces SNAPS-Kubernetes

Today, I’m pleased to announce the availability of SNAPS-Kubernetes. The latest in CableLabs’ portfolio of open source projects to accelerate the adoption of Network Functions Virtualization (NFV), SNAPS-Kubernetes provides easy-to-install infrastructure software for lab and development projects. SNAPS-Kubernetes was developed with Aricent and you can read more about this release on their blog here.

In my blog 6 months ago, I announced the release of SNAPS-OpenStack and SNAPS-Boot, and I highlighted Kubernetes as a future development area. As with the SNAPS-OpenStack release, we’re making this installer available while it's still early in the development cycle. We welcome contributions and feedback from anyone to help make this an easy-to-use installer for a pure open source and freely available environment. We’re also releasing the support for the Queens release of OpenStack—the latest OpenStack release.

Member Impact

The use of cloud-native technologies, including Kubernetes, should provide for even lower overhead and an even better-performing network virtualization layer than existing virtual machine (VM)-based solutions. It should also improve total cost of ownership (TCO) and quality of experience for end users. A few operators have started to evaluate Kubernetes, and we hope with SNAPS-Kubernetes that even more members will be able to begin this journey.

Our initial total cost of ownership (TCO) analysis with a virtual Converged Cable Access Platform (CCAP) core distributed access architecture (DAA) and Remote PHY technology has shown the following improvements:

- Approximately 89% savings in OpEx costs (power and cooling)

- 16% decrease in rack space footprint

- 1015% increase in throughput

We anticipate that Kubernetes will only increase these numbers.

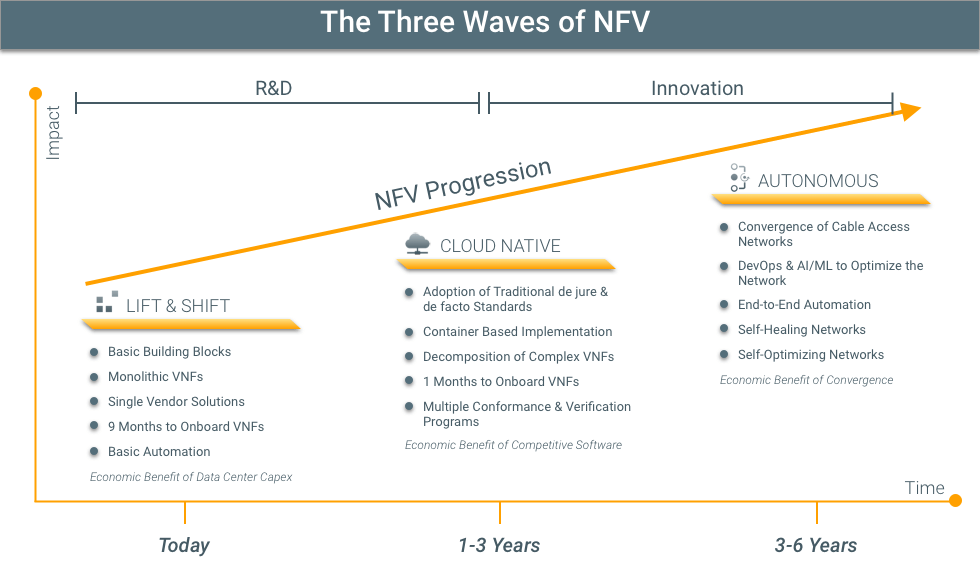

Three Waves of NFV

SNAPS-Kubernetes will help deliver Virtual Network Functions (VNFs) that use fewer resources, are more fault-tolerant and quickly scale to meet demand. This is a part of a movement coined “cloud native.” This is the second of the three waves of NFV maturity that we are observing.

With the adoption of NFV, we have identified three overarching trends:

- Lift & Shift

- Cloud Native

- Autonomous Networks

Lift & Shift

Service providers and vendors typically support the Lift & Shift model today. These are large VMs running on an OpenStack-type Virtualized Infrastructure Manager (VIM). This is a mature technology, and many of the gaps in this area have closed.

VNF vendors often brag that their VNF solution runs the same version of software that runs on their appliances in this space. Although achieving feature parity with their existing product line is admirable, these solutions don’t take advantage of the flexibility and versatility that can be achieved by fully leveraging virtualization.

There can be a high degree of separation between the VM and the underlying hardware and operating system. This separation is great for portability, but it comes at a cost. Without some level of hardware awareness, it isn’t possible to take full advantage of acceleration capabilities. The extra layer of indirection can also add latency.

Cloud Native

Containers and Kubernetes excel in this quickly evolving section of the market. These solutions aren’t yet as mature as OpenStack and other virtualization solutions, but they are lighter weight and integrate software and infrastructure management. This means that Kubernetes will scale and fail over applications, and the software updates are also managed.

Cloud native is well suited for edge and customer-premises solutions where compute resources are limited by space and power.
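The scale and fail-over behavior described above can be pictured as a loop that keeps comparing the desired state with what is actually running. The sketch below is a toy model of that idea only. It does not use the Kubernetes API, and the `ToyCluster` class and its fields are hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Toy stand-in for an orchestrator that keeps N healthy replicas running."""
    desired_replicas: int
    running: list = field(default_factory=list)
    next_id: int = 0

    def reconcile(self) -> None:
        """Drive the running set toward the desired replica count."""
        # Fail over: drop replicas that are no longer healthy.
        self.running = [r for r in self.running if r["healthy"]]
        # Scale up: start replacements until the target is met.
        while len(self.running) < self.desired_replicas:
            self.running.append({"id": self.next_id, "healthy": True})
            self.next_id += 1
        # Scale down: stop the newest replicas if there are too many.
        while len(self.running) > self.desired_replicas:
            self.running.pop()


cluster = ToyCluster(desired_replicas=3)
cluster.reconcile()                      # initial start-up
cluster.running[0]["healthy"] = False    # simulate a failed replica
cluster.reconcile()                      # failed replica is replaced
cluster.desired_replicas = 5             # demand grows
cluster.reconcile()                      # two more replicas are started
print([r["id"] for r in cluster.running])
```

The point of the sketch is the division of labor: the operator declares how many replicas should exist, and the platform handles replacement and scaling.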

Autonomous Networks

Autonomous networks are the desired future in which every element of the network is automated. High-resolution data is being evaluated to continually optimize the network for current and projected conditions. The 3–6-year projection for this technology is probably a bit optimistic, but we need to start implementing monitoring and automation tools in preparation for this shift.

Features

This release is based on Kubernetes 1.10. We will update Kubernetes as new releases stabilize and we have time to validate these releases. As with SNAPS-OpenStack, we believe it’s important to adopt the latest stable releases for lab and evaluation work. Doing so will prepare you for future features that help you get the most out of your infrastructure.

This initial release supports Docker containers. Docker is one of the most popular types of containers, and we want to take advantage of the rich ecosystem of build and management tools. If we later find other container technologies that are better suited to specific cable use cases, this support may change in future releases.

Because Kubernetes and containers are so lightweight, you can run SNAPS-Kubernetes on an existing virtual platform. Our Continuous Integration (CI) scripts use SNAPS-OO to completely automate the installation on almost any OpenStack platform. This should work with most OpenStack versions from Liberty to Queens.

SNAPS-Kubernetes supports the following six networking solutions:

- Weave

- Flannel

- Calico

- Macvlan

- Single Root I/O Virtualization (SRIOV)

- Dynamic Host Configuration Protocol (DHCP)

Weave, Calico and Flannel provide cluster-wide networking and can be used as the default networking solution for the cluster. Macvlan and SRIOV, however, are specific to individual nodes and are installed only on specified nodes.

SNAPS-Kubernetes uses Container Network Interface (CNI) plug-ins to orchestrate these networking solutions.
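As a rough illustration of what a CNI plug-in consumes, the sketch below builds a network configuration for the reference Macvlan plug-in with DHCP address management and prints it as JSON. The network name `macvlan-net` and the parent interface `eth0` are hypothetical, and the configuration that SNAPS-Kubernetes actually generates may differ; this only shows the general shape of a CNI network definition.

```python
import json


def macvlan_cni_config(name: str, master: str, ipam_type: str = "dhcp") -> dict:
    """Build a CNI network configuration for the Macvlan plug-in.

    name      -- label for the network (hypothetical value in this example)
    master    -- host interface the Macvlan sub-interfaces attach to
    ipam_type -- IP address management plug-in; "dhcp" leases addresses
                 from a DHCP server already present on the network
    """
    return {
        "cniVersion": "0.3.1",
        "name": name,
        "type": "macvlan",
        "master": master,
        "mode": "bridge",
        "ipam": {"type": ipam_type},
    }


config = macvlan_cni_config(name="macvlan-net", master="eth0")
print(json.dumps(config, indent=2))
```

This also suggests why DHCP appears in the list next to the other options: in the CNI model it acts as an address management plug-in paired with an interface plug-in such as Macvlan, rather than as a network of its own.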

Next Steps

As we highlighted before, serverless infrastructure and orchestration continue to be future areas of interest and research. In addition to extending the scope of our infrastructure, we are focusing on using and refining the tools.

Multiple CMTS vendors have announced and demonstrated virtual CCAP cores, so this will be an important workload for our members.

Try It Today

Like other SNAPS releases, SNAPS-Kubernetes is available on GitHub under the Apache License, Version 2.0. SNAPS-Kubernetes follows the same installation process as SNAPS-OpenStack. The servers are prepared with SNAPS-Boot, and then SNAPS-Kubernetes is installed.

Have Questions? We’d Love to Hear from You

- Reach out on IRC: Server: Freenode Channel #cablelabs-snaps

- Contribute to the documentation, backlog and code on GitHub

- Send an email message directly to snaps@cablelabs.com

- Tweet to @RandyLevensalor

Subscribe to our blog to learn more about SNAPS in the future.

Virtualization

CableLabs Announces SNAPS-Boot and SNAPS-OpenStack Installers

Having lived and breathed open source since I first experimented with it in high school, I find nothing as sweet as sharing a new project with the world! Today, CableLabs is thrilled to announce the extension of our SNAPS-OO initiative with two new projects: SNAPS-Boot and SNAPS-OpenStack installers. SNAPS-Boot and SNAPS-OpenStack are based on requirements generated by CableLabs to meet our member needs and drive interoperability. The software was developed by CableLabs and Aricent.

SNAPS-Boot

SNAPS-Boot will prepare your servers for OpenStack. With a single command, you can install Linux on your servers and prepare them for your OpenStack installation using IPMI, PXE and other standard technologies to automate the installation.

SNAPS-OpenStack

The SNAPS-OpenStack installer will bring up OpenStack on your running servers. We are using a containerized version of the OpenStack software. SNAPS-OpenStack is based on the OpenStack Pike release, as this is the most recent stable release of OpenStack. You can find an updated version of the platform that we used for the virtual CCAP core and mobile convergence demo here.

How you can participate:

We encourage you to go to GitHub and try for yourself:

Why SNAPS?

SNAPS (SDN & NFV Application Platform and Stack) is the overarching program to provide the foundation for virtualization projects and deployment leveraging SDN and NFV. CableLabs spearheaded the SNAPS project to fill in gaps in the open source community to ease the adoption of SDN/NFV with our cable members by:

- Encouraging interoperability for both traditional and prevailing software-based network services: As cable networks evolve and add more capabilities, SNAPS seeks to organize and unify the industry around distributed architectures and virtualization on a stable open source platform to develop baseline OpenStack and NFV installations and configurations.

- Providing an open platform for network virtualization: Rather than basing our platform on a vendor-specific version, or being over 6 months behind the latest OpenStack release, we added a lightweight wrapper on top of upstream OpenStack to instantiate virtual network functions (VNFs) in a real-time dynamic way.

- Seeding a group of knowledgeable developers that will help build a rich and strong open source community, driving developers to cable: SNAPS is aimed at developers who want to experiment with building apps that require low latency (gaming, virtual reality and augmented reality) at the edge. Developers are able to share information in the open source community on how they optimize their applications. This not only helps other app developers, but helps the cable industry understand how to implement SDN/NFV in their networks and gain easy access to these new apps.

At CableLabs, we pursue a “release early” principle to enable contributions to improve and guide the development of new features and encourage others to participate in our projects. This enables us to continuously optimize the software, extend features and improve the ease of use. Our subsidiary, Kyrio, is also handling the integration and testing on the platform at their NFV Interoperability lab.

You can find more information about SNAPS in my previous blog posts “SNAPS-OO is an Open Sourced Collaborative Development” and “NFV for Cable Matures with SNAPS.”

Who benefits from SNAPS?

- App Developers will have access to a virtual sandbox that allows them to test how their app will run in a cable scenario, saving them time and money.

- Service providers, vendors and enterprises will be able to build more exciting applications, on a pure open source NFV platform focused on stability and performance, on top of the cable architecture.

How we developed SNAPS:

We leverage containers that have been built and tested by the OpenStack Kolla project. If you are not familiar with Kolla, it is an OpenStack project that maintains a set of Docker containers for many of the OpenStack components. We deploy the containers with the Kolla-Ansible scripts because they are the most mature and include a broad set of features that can be used in a low-latency edge data center. By using containers, we simplify both installation and updates.

To maximize the usefulness of the SNAPS platform, we included many of the most popular OpenStack projects:

Additional services we included:

Where the future of SNAPS is headed:

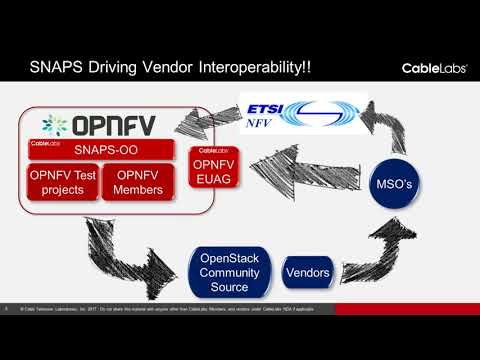

- We plan to continue to make the platform more robust and stable.

- Because of the capabilities we have developed in SNAPS, we have started discussions with the OPNFV Cross Community Continuous Integration (XCI) project to use SNAPS-OpenStack as a stable platform for testing test tools and VNFs, with a goal to pilot the project in early 2018.

- Aricent is a strong participant in the open source community and has co-created the SNAPS-Boot and SNAPS-OpenStack installer project. Aricent will be one of the first companies to join our open source community contributing code and thought leadership, as well as helping others to create powerful applications that will be valuable to cable.

- As an open source project, we encourage other cable vendors and our members to join the project, contribute code and utilize the open source work products.

There are three general areas where we want to enhance the SNAPS project:

- Integration with NFV orchestrators: We are including the OpenStack NFV orchestrator (Tacker) with this release and we want to extend this to work with other orchestrators in the future.

- Containers and Kubernetes support: We already have some support for Kubernetes running in VMs. We would like to evaluate the benefit of running Kubernetes with or without the benefit/overhead of VMs.

- Serverless computing: We believe that serverless computing will be a powerful new paradigm that will be important to the cable industry, and we will be exploring how best to use SNAPS as a serverless computing platform.


Have Questions? We’d love to hear from you

- Reach out on IRC: Server: Freenode Channel #cablelabs-snaps

- Contribute to the documentation, backlog and code on GitHub

- Send an e-mail directly to snaps@cablelabs.com

- Tweet to @RandyLevensalor

Don’t forget to subscribe to our blog to read more about NFV and SNAPS in our upcoming in-depth SNAPS series. Members can join our NFV Workshop February 13-15, 2018. You can find more information about the workshop and the schedule here.

Virtualization

NFV for Cable Matures with SNAPS

SNAPS is improving the quality of open source projects associated with the Network Functions Virtualization (NFV) infrastructure and Virtualization Infrastructure Managers (VIM) that many of our members use today. In my posts “SNAPS is an Open Source Collaborative Development Resource” and “Snapping Together a Carrier Grade Cloud,” I talk about building tools to test the NFV infrastructure. Today, I’m thrilled to announce that we are deploying end-to-end applications on our SNAPS platform.

To demonstrate this technology, we recently held a webinar “Virtualizing the Headend: A SNAPS Proof of Concept” introducing the benefits and challenges of the SNAPS platform. Below, I’ll describe the background and technical details of the webinar. You can skip this information and go straight to the webinar by clicking here.

Background

CableLabs’ SDN/NFV Application Development Platform and OpenStack project (SNAPS for short) is an initiative that attempts to accelerate the adoption of network virtualization.

Network virtualization gives us the ability to simulate a hardware platform in software. All the functionality is separated from the hardware and runs as a “virtual instance.” For example, in software development, a developer can write an application and test it on a virtual network to make sure the application works as expected.

Why is network virtualization so important? It gives us the ability to create, modify, move and terminate functions across the network.

Why SNAPS is unique

- Creates a stable, replicable and cost-effective platform: SNAPS allows operators and vendors to efficiently develop new automation capabilities to meet the growing consumer demand for self-service provisioning. Much like signing up for Netflix, self-service provisioning allows customers to add and change services on their own, as opposed to setting up a cable box at home.

- Provides transparent APIs for various kinds of infrastructure

- Reduces the complexity of integration testing

- Only uses upstream OpenStack components to ensure the broadest support: SNAPS is open source software which is available directly from the public OpenStack project. This means we do not deviate from the common source.

With SNAPS, we are pushing the limits of open source and commodity hardware because members can run their entire Virtualized Infrastructure Manager (VIM) on the platform. This is important because the VIM is responsible for managing the virtualized infrastructure of an NFV solution.

Webinar: Proofs of Concept

We collaborated with Aricent, Intel and Casa Systems to deploy two proofs of concept that are reviewed in the webinar. We chose these partners because they are leading the charge to create dynamic cable and mobile networks to keep up with the world’s increasing hunger for faster, more intelligent networks tailored to meet customers’ needs.

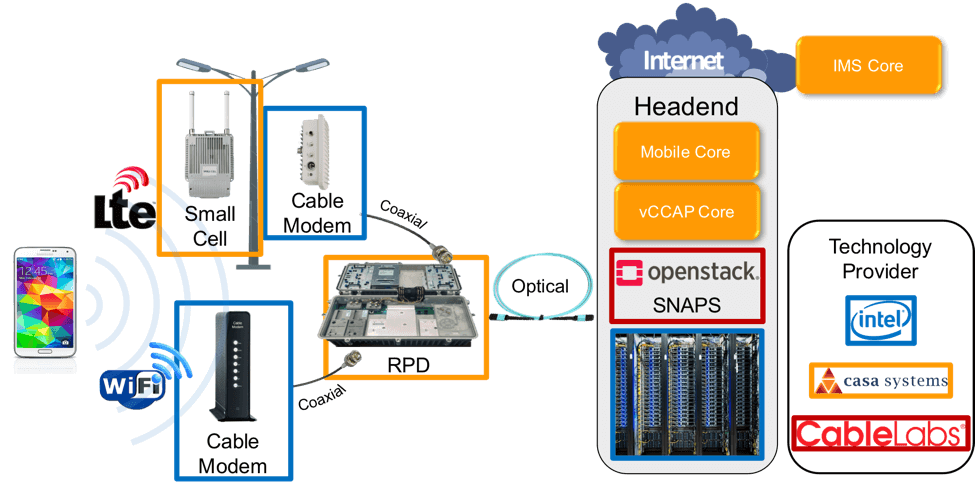

Casa and Intel: Virtual CCAP and Mobile Cores

CableLabs successfully deployed a virtual CCAP (converged cable access platform) core on OpenStack. Eliminating the physical CCAP provides numerous benefits to service providers, including power and cost savings.

Casa and Intel provided hardware, and Casa Systems provided the Virtual Network Functions (VNFs) that ran on the SNAPS platform. The virtual CCAP core controls the cable plant and moves every packet to and from the customer sites. You can find more information about CCAP core in Jon Schnoor’s blog post “Remote PHY is Real.”

Advantages of Kyrio’s NFV Interop Lab

For the virtual CCAP demo, the Kyrio NFV Interop Lab gave Intel and Casa a collaborative environment, along with lab staff, to build and demonstrate the key building blocks for virtualizing the cable access network.

The Kyrio NFV Interop Lab is unique. It provides an opportunity for developers to test interoperability in a network environment against certified cable access network technology. You can think of the Kyrio lab as a sandbox for engineers to work and build in, enabling:

- Shorter development times

- Operator resource savings

- Faster tests, field trials and live deployments

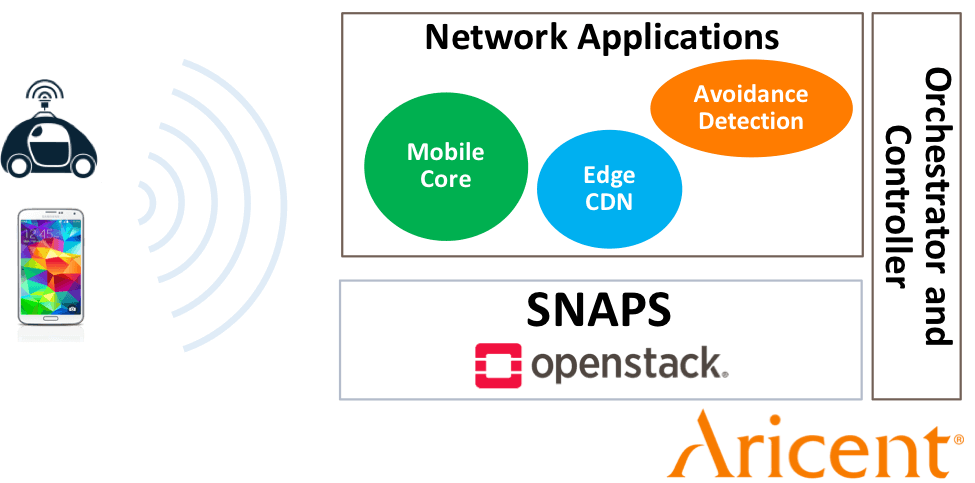

Aricent: Low Latency and Backhaul Optimization

With Aricent, we had two different proofs of concept. Both demos highlighted the benefits of having a cloud (or servers) at the service provider edge (less than 100 miles from a customer’s home):

- Low latency: We simulated two smart cars connected to a cellular network. The cars used an application running on a cloud to calculate their speed. If the cloud was too far away, a faster car would rear-end a slower car before it was told to slow down. If the cloud was close, the faster car would slow down in time to avoid the collision.

- Bandwidth savings: Storing data that will be used by several people in a closer location can reduce the amount of traffic on the core network. For example, when several people in the same neighborhood watch the same video, they receive a local copy of the video, rather than each downloading the original from the other side of the country.
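The low-latency demo can be approximated with some back-of-the-envelope arithmetic. The sketch below estimates the round-trip propagation delay to a cloud at a given distance and how far a car travels while waiting for the reply. It is a simplified model, not the simulation used in the demo: it assumes signals travel through fiber at roughly 200,000 km/s, ignores radio access, queuing and processing time, and uses a hypothetical speed of 70 mph and a hypothetical distant cloud 2,500 miles away.

```python
FIBER_KM_PER_S = 200_000.0   # assumed signal speed in fiber, about 2/3 the speed of light
KM_PER_MILE = 1.609344


def round_trip_ms(distance_miles: float) -> float:
    """Round-trip propagation delay, in milliseconds, to a cloud at this distance."""
    one_way_s = distance_miles * KM_PER_MILE / FIBER_KM_PER_S
    return 2 * one_way_s * 1000


def distance_travelled_m(speed_mph: float, delay_ms: float) -> float:
    """Meters a vehicle covers at speed_mph during delay_ms."""
    speed_m_per_s = speed_mph * KM_PER_MILE * 1000 / 3600
    return speed_m_per_s * delay_ms / 1000


# Hypothetical comparison: edge cloud within 100 miles vs. a cloud 2,500 miles away.
for label, miles in (("edge cloud", 100), ("distant cloud", 2500)):
    delay = round_trip_ms(miles)
    travelled = distance_travelled_m(speed_mph=70, delay_ms=delay)
    print(f"{label}: {delay:.2f} ms round trip, car moves {travelled:.2f} m")
```

Propagation delay is only a lower bound on the total delay. In practice the other contributors add to it, which widens the gap between a nearby and a distant cloud.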

The SNAPS platform continues CableLabs’ tradition of bringing leading technology to the cable industry. The collaborations with Intel, Aricent and Casa Systems were very successful because:

- We demonstrated end-to-end use cases from different vendors on the same version of OpenStack.

- We identified additional core capabilities that should be a part of every VIM. We have already incorporated new features in the SNAPS platform to better support layer 2 networking, including increasing the maximum frame size (or MTU) to comply with the DOCSIS® 3.1 specification.

In addition to evolving these applications, we are interested in collaborating with other developers to demonstrate the SNAPS platform. Please contact Randy Levensalor at r.levensalor@cablelabs.com for more information.

Don’t forget to subscribe to our blog to read more about how we utilize open source to develop quickly, securely, and cost-effectively.

Virtualization

5 Things I Learned at OpenStack Summit Boston 2017

Recently, I attended OpenStack Summit in Boston with more than 5,000 other IT business leaders, cloud operators and developers from around the world. OpenStack is the leading open source software run by enterprises and public cloud providers and is increasingly being used by service providers for their NFV infrastructure. Many of the attendees are operators and vendors who collaboratively develop the platform to meet an ever-expanding set of use cases.

With over 750 sessions, it was impossible to see them all. Here are my top five takeaways and highlights of the event:

1. Edward Snowden's Opinions on Security and Open Source

In the biggest surprise of the event, Edward Snowden, former US NSA employee and self-declared liberator, joined us over a live video feed from an undisclosed location. He talked about the ethics and importance of the open source movement and how open source can be used to improve security and privacy.

Unlike vulnerabilities in proprietary software, those in open source are transparent. As a result, the entire community can learn from these exploits and how to prevent them in the future. Though not mentioned by Snowden, his rhetoric brought to mind the work done to secure OpenSSL after the Heartbleed vulnerability was made public. This changed the way that core projects are managed. Snowden mentioned Apple’s iPhone as an example where vulnerabilities were found and the solution was not transparent:

“When Apple or Google has a bug, not only can we have no influence over the cure, but we don’t know anything about the cause and we don’t know what they have learned in effecting a cure. So, it’s not possible for everyone to use that knowledge to help build a better world for everyone.”

His talk brought applause from the audience and was a call to action as much as it was informative.

2. OpenStack is Helping Make the World Safer

The U.S. Army is using OpenStack to rapidly deliver the required curriculum for cyber command training, saving millions of dollars in the process. Borrowing from software development, they created an agile process in which instructors can improve the course rapidly, and they presented an example deployment of different virtual machines with malware and threat detection software. Instructors are able to create new content by submitting code to a source code repository and have it approved in less than a day. The new content is also available to graduates of the course in support of ongoing training. As a taxpayer, I can only hope that the other branches of the military will follow the Army’s lead in adopting the same innovative philosophy and process. Service providers can leverage the same processes to deliver new services, apply security patches, and remedy service disruptions.

You can watch the keynote here and the in-depth talk below:

3. Lightweight OpenStack Control Planes for Edge Computing

OpenStack was designed to run large clouds managing thousands of servers in traditional data centers. Running OpenStack on a single local server allows service and OTT providers to manage CPEs using the same toolchain they use to manage VMs in their hosted cloud solutions.

Verizon’s keynote highlighting their uCPE is available here.

4. Aligning Container and Virtual Machine Technologies

My favorite forum session was a discussion to align VMs and containers. Containers address the application configuration and management challenges that are not as easily addressed with virtual machines. OpenStack can be used to manage the dependencies that containers need to run. In addition to the general summit proceedings, OpenStack has a forum format. You can learn more about the format here.

Leaders from both the OpenStack Nova team and the Linux Foundation’s Kubernetes were on the panel. Kubernetes performs many tasks that complement OpenStack and some that overlap with it. Because Kubernetes was developed several years after Nova, its developers were able to improve on some of the similar features.

CableLabs hosted an OpenStack Users Group meeting recently on the same subject called "OpenStack & Containers: Better Together".

5. Data Plane Acceleration

With the growth of OpenStack in the service provider space, moving packets from point A to point B is as critical as ever. Open vSwitch continues to be a popular choice, and with the addition of DPDK support, it is reducing the latency involved in processing packets in a virtualized network. Tapio Tallgren, the chair of OPNFV’s Technical Steering Committee, provides some results of testing DPDK with OPNFV. As many of you may know, CableLabs’ SNAPS project leverages OPNFV as a foundation. The Yardstick performance testing project, which Tapio discusses in his blog post Snaps-OO Open Sourced Collaborative Development Resource, is in the process of migrating many of their scenarios to leverage our SNAPS-OO utility.

FD.io is the newest player for accelerating the data plane. Their testing results in the lab are remarkable, and we are beginning to see some adoption for use in production. There was even a 1-day training session dedicated solely to FD.io.

With demos, product launches, and informative talks, OpenStack Summit Boston 2017 was a huge success. I hope to see you at the next one! If you have any questions about OpenStack, don’t hesitate to leave a comment below.