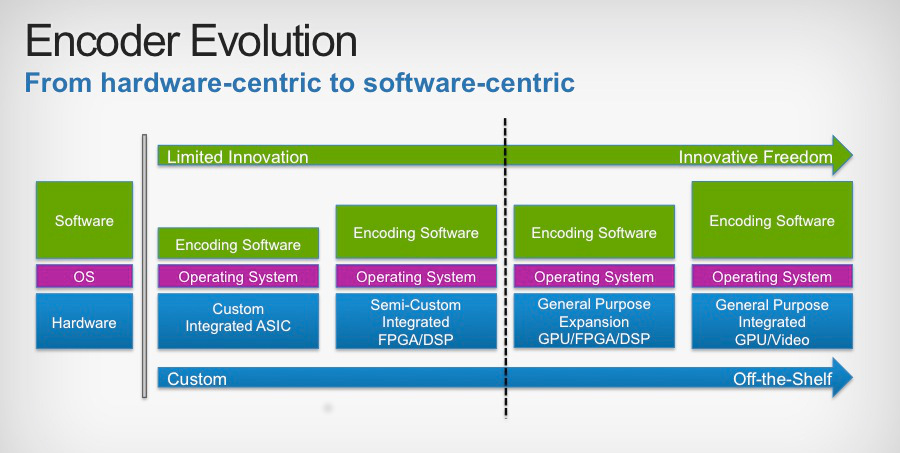

Until a few years ago, the encoder/transcoder market was dominated by systems based on ASIC (Application-Specific Integrated Circuit) architectures that provided excellent performance with minimal power consumption. These audio/video compression workhorses have allowed cable operators to deliver more and more high-quality video to their customers in an increasingly bandwidth-constrained headend environment. Fast-forward to today, and you will see a dizzying array of encoder vendors with solutions running on a wide variety of platforms. This technological evolution is both an opportunity and a source of apprehension for decision makers trying to determine which vendor solution best fits the current and future needs of their video delivery organizations.

ASIC encoder platforms are the tried-and-true staples of the encoder industry. Video compression involves the execution of numerous repetitive mathematical calculations for each and every frame of the video. Once your compression algorithms have been proven and hardened, laying them down on silicon is the fastest and most energy-efficient way to get bits in one end and produce far fewer bits out the other. But what happens when you come up with an improvement to your algorithm? The path from design to production at scale can take six months or more. And what about the opportunity to choose between one algorithm and another depending on the characteristics of a particular frame of video? Space on an integrated circuit is high-value real estate, and it can often be cost-prohibitive to embed logic that will only be used part of the time during execution. With established and, in the eyes of some, aging compression standards such as MPEG-2/H.262, there are fewer opportunities for algorithmic improvements. Most of the innovations within the bounds of that specification have already been realized, and the risk of embedding those solutions in silicon is minimal. However, more recent compression standards such as AVC/H.264 and HEVC/H.265 still give compression engineers ample room to innovate.
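To make the "repetitive mathematical calculations" concrete, here is a minimal Python sketch of one such calculation: block matching using the sum of absolute differences (SAD), a cost measure widely used in motion estimation. It is illustrative only and does not describe any particular vendor's encoder. The function names (`sad`, `get_block`, `best_match`), the list-of-rows frame layout, and the exhaustive search strategy are all assumptions made for this example.

```python
def sad(block_a, block_b):
    """Sum of absolute differences between two equally sized pixel blocks."""
    return sum(
        abs(a - b)
        for row_a, row_b in zip(block_a, block_b)
        for a, b in zip(row_a, row_b)
    )


def get_block(frame, top, left, size):
    """Cut a size x size block out of a frame stored as a list of pixel rows."""
    return [row[left:left + size] for row in frame[top:top + size]]


def best_match(ref_frame, cur_block, top, left, size, search_range):
    """Exhaustively search ref_frame around (top, left) for the lowest-SAD block.

    Returns (cost, (dy, dx)), where (dy, dx) is the offset of the best
    candidate relative to (top, left), or None if no candidate fits
    inside the reference frame.
    """
    height, width = len(ref_frame), len(ref_frame[0])
    best = None
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            y, x = top + dy, left + dx
            # Skip candidates that would fall outside the reference frame.
            if y < 0 or x < 0 or y + size > height or x + size > width:
                continue
            cost = sad(cur_block, get_block(ref_frame, y, x, size))
            if best is None or cost < best[0]:
                best = (cost, (dy, dx))
    return best
```

Even this toy version evaluates every candidate offset for a single block, and a real frame contains thousands of blocks. Loops this regular are what fixed-function silicon handles so efficiently, and smarter search strategies are the kind of algorithmic change that is hard to retrofit once the logic is laid down in hardware.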

With this in mind, encoder vendors are looking for platforms that give them more freedom to improve their products without requiring the release of a brand-new hardware platform. Programmable devices such as FPGAs (Field-Programmable Gate Arrays) and GPUs (Graphics Processing Units) are increasingly popular choices in encoder solutions. These chips give you the performance benefits of running your algorithms in hardware, with the added flexibility of being able to tweak and improve your solutions with a simple software upgrade. It still requires some pretty specialized talent to program these devices to their maximum potential, but the advent of modern programming frameworks that target this kind of hardware, such as OpenCL, has opened the door to quicker and more accessible innovation on these silicon-based platforms.

Only in the last year or so has the next evolution in encoder technology really become possible. Moore’s Law continues to hold true, and recent advancements in general-purpose CPUs have made it possible for encoding solutions to be delivered in pure software. Couple that with the continued integration of GPU functionality on-die, and you have the ability to deploy a powerful video encoding solution running on the same hardware that will power the data centers of tomorrow.

One caveat of moving to general-purpose hardware is power consumption. Most likely, these platforms will not be able to compete with ASIC-based systems when it comes to energy usage, but the algorithmic flexibility of software-based encoders, coupled with the cost benefits of running on mass-produced hardware, may outweigh the cost of powering them. Another aspect of this evolution is the incredible sophistication of open source encoding libraries such as x264. With the innovative power of the community behind the development of these codecs, operators might well find a use for them in certain areas of their production environments.

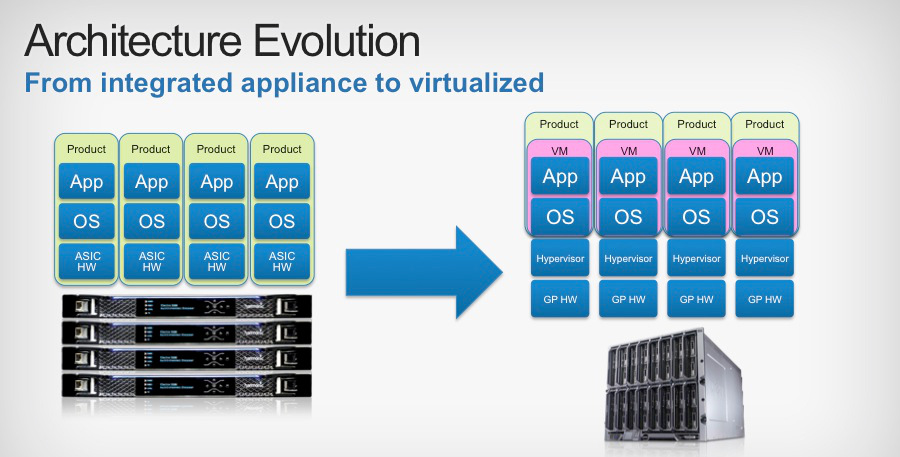

Finally, one of the most recent trends in video compression solutions lies not in codec technology itself but in the virtualization of encoder/transcoder platforms. Virtualization is not new to the computing industry, but its introduction into video workflows is definitely a novel, if not unexpected, approach. The idea of dynamically allocating hardware resources to encoding processes according to demand, complexity, or other business needs enables new levels of flexibility in meeting the large-scale encoding needs of today’s cable operators, both large and small. Couple that with the perks of being able to integrate these general-purpose computing platforms into your existing, IT-managed data center operations, and you get a significant opportunity for cost savings in your backend video infrastructure deployments.
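As a rough illustration of what allocating hardware "according to demand, complexity, or other business needs" could look like, the following Python sketch places encoding jobs on hosts with a simple greedy rule: the most demanding jobs go first, each to the host with the most spare capacity. Everything here is hypothetical: the `assign_jobs` function, the use of CPU cores as the only resource, and the job and host names are assumptions for this example, not a description of any real virtualization platform or scheduler.

```python
def assign_jobs(jobs, hosts):
    """Greedily place encoding jobs on hosts.

    jobs:  dict mapping a job name to the CPU cores it needs (hypothetical unit).
    hosts: dict mapping a host name to the CPU cores it has free.
    Returns (placement, unplaced): a dict of job -> host, and a list of
    jobs that did not fit on any host.
    """
    if not hosts:
        return {}, list(jobs)

    free = dict(hosts)  # work on a copy so the caller's dict is not mutated
    placement = {}
    unplaced = []

    # Place the most demanding jobs first.
    for job, need in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        host = max(free, key=free.get)  # host with the most spare cores
        if free[host] >= need:
            free[host] -= need
            placement[job] = host
        else:
            unplaced.append(job)
    return placement, unplaced


# Hypothetical workload: core counts are made-up illustration values.
placement, unplaced = assign_jobs(
    jobs={"hevc_uhd": 6, "avc_hd": 3, "mpeg2_sd": 1, "hevc_hd": 4},
    hosts={"host_a": 8, "host_b": 4},
)
```

In a virtualized deployment, a job that cannot be placed would be the signal to bring up more capacity. With fixed-function appliances, the same shortfall typically means buying and racking another box.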

It is an exciting time in the encoder field, with drastic changes in deployment architectures sweeping through the industry. We here at CableLabs continue to apply solid science in our evaluations of these platforms to help our members make the most informed decisions possible. Projects such as our recent MPEG-2 encoder evaluation and our upcoming AVC/H.264 encoder shoot-out apply stringent testing methodologies to identify the best products available on the market today.

Greg Rutz is a Lead Architect at CableLabs working on several projects related to digital video encoding/transcoding and digital rights management for online video.