Security

Cybersecurity Awareness Month and Beyond: How We’re Safeguarding Network Integrity

In the digital age, cybersecurity is the first line of defense against an ever-expanding and continually evolving array of threats. The increasing sophistication of cyber threats and a deepening dependence on interconnectivity have elevated cybersecurity technologies from a peripheral consideration to a critical priority.

October is Cybersecurity Awareness Month, but safeguarding digital integrity is a year-round commitment for CableLabs. In our Security Lab, we work to identify and mitigate threats to the access network. We proactively develop innovative technologies that make it easier for internet users to protect their digital lives.

Let’s take a look at some of the CableLabs technologies that are enhancing network security and reshaping the way we protect ourselves online.

DOCSIS 4.0 Security

The new DOCSIS® 4.0 protocol is another promising chapter in the successful life of hybrid fiber coax (HFC) networks, and it brings with it notable security enhancements to the broadband community.

It’s important to note that DOCSIS 4.0 cable modems (CMs) are compatible with existing DOCSIS 3.1 networks. This allows the CMs to take advantage of higher speed tiers even without needing to upgrade the network at the same time. To fully leverage the new upstream bandwidth efficiency and security features of the protocol, both modems and cable modem termination systems (CMTSs) need to support DOCSIS 4.0 technology.

Another key security-enhancing element of the technology is that DOCSIS 4.0 networks come with upgradable security. The technology continues to support the Baseline Privacy protocol (BPI+ V1) used in DOCSIS 3.1 specifications. It also integrates the new version that can be enabled as needed (BPI+ V2).

The new version introduces mutual authentication between devices and the network, eliminates the dependency on the Rivest Shamir Adleman (RSA) algorithm and implements modern key exchange mechanisms. This change enhances device authentications with Perfect Forward Secrecy and cryptographic agility and aligns DOCSIS key exchange mechanisms with the latest Transport Layer Security (TLS) protocol, v1.3.
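To illustrate why an ephemeral key exchange yields Perfect Forward Secrecy, here is a minimal Python sketch. It is a toy finite-field Diffie-Hellman exchange, not the BPI+ V2 key exchange. The group parameters (`P`, `G`) and all function names are assumptions chosen for illustration and are far too weak for real use.

```python
import hashlib
import secrets

# Toy parameters (assumption): a 127-bit Mersenne prime and a small base.
# Real deployments use standardized elliptic-curve or large finite-field groups.
P = 2**127 - 1
G = 3

def ephemeral_keypair():
    """Generate a fresh key pair that is meant to be discarded after one session."""
    private = secrets.randbelow(P - 3) + 2  # value in [2, P - 2]
    return private, pow(G, private, P)

def session_key(own_private, peer_public):
    """Derive a 32-byte session key from the Diffie-Hellman shared value."""
    shared = pow(peer_public, own_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()

def run_session():
    """Both sides create new ephemeral keys, so no long-term key protects the session."""
    cm_private, cm_public = ephemeral_keypair()
    cmts_private, cmts_public = ephemeral_keypair()
    cm_key = session_key(cm_private, cmts_public)
    cmts_key = session_key(cmts_private, cm_public)
    return cm_key, cmts_key
```

Because the private values exist only for the duration of one session, a later compromise of a device's long-term identity key does not reveal the keys of previously recorded sessions.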

Further upgrades include enhanced revocation-checking capabilities with support for both Online Certificate Status Protocol (OCSP) and Certificate Revocation List (CRL) in DOCSIS 4.0 certificates. DOCSIS 4.0 also introduces standardized interfaces for managing edge device access (SSH) aimed at limiting the exposure of corporate secrets (e.g., technicians’ passwords) and incorporates a Trust on First Use (TOFU) approach for downgrade protection across BPI+ versions.
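The Trust on First Use idea for downgrade protection can be sketched as follows. This is a simplified illustration in Python, not the mechanism defined in the DOCSIS 4.0 specification: the class name, the per-CMTS identifier and the integer version numbers are all hypothetical.

```python
class TofuVersionPin:
    """Remembers the highest BPI+ version seen per CMTS and rejects downgrades."""

    def __init__(self):
        self._pinned = {}  # hypothetical store: cmts_id -> highest version observed

    def check(self, cmts_id, offered_version):
        pinned = self._pinned.get(cmts_id)
        if pinned is None or offered_version >= pinned:
            # First contact is trusted; later contacts may only keep or raise the version.
            self._pinned[cmts_id] = offered_version
            return True
        # A lower version than previously seen is treated as a downgrade attempt.
        return False
```

A modem that has once negotiated version 2 with a given CMTS would refuse a later offer of version 1 from the same CMTS, while still accepting version 1 from a CMTS it has never seen before.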

Ultimately, the new DOCSIS 4.0 security is designed to provide several options for network risk management. These features include new speeds and capabilities that can be utilized alongside today’s security properties and procedures (e.g., BPI+V1 with DOCSIS 3.1 or DOCSIS 4.0 CMTSs) and advanced protections when needed.

Matter Device Onboarding

Passwords are meant to be secret, so why are users sharing them with all of their Internet of Things (IoT) devices? At CableLabs, we’re working to make it easy for end-users to add devices to their home networks without needing to share a password with every device.

Because so many devices are communicating with one another, standardization is critical — especially when it comes to security. That’s where Matter comes in. The open-source connectivity standard is designed to enable seamless and secure connectivity among the devices in users’ smart home platforms.

Our vision is for each device to have its own credential to get on the Wi-Fi network. The access point (AP) would use this unique credential to grant the device access to the network, and the device then would verify the AP’s credential. This has three incredibly significant advantages for subscribers:

1. It vastly increases the security of the home network. This is because a compromised device cannot divulge a global network password and lead to a compromise of the entire network.

2. It’s possible to leverage the device attestation certificate that comes with every Matter device to inform the network that it’s a verified and certified device.

3. There's no need to reset every single device on the network if the Wi-Fi password is changed.
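A small Python sketch can make the first and third advantages concrete. It uses random shared secrets as a stand-in for the certificate-based credentials a Matter device would actually carry, and the `AccessPoint` class and its methods are hypothetical names introduced only for this example.

```python
import hmac
import secrets

class AccessPoint:
    """Toy access point that keeps one credential per device instead of a global password."""

    def __init__(self):
        self._credentials = {}  # device_id -> secret unique to that device

    def onboard(self, device_id):
        credential = secrets.token_bytes(32)
        self._credentials[device_id] = credential
        return credential

    def admit(self, device_id, presented):
        expected = self._credentials.get(device_id)
        # Constant-time comparison avoids leaking how many bytes matched.
        return expected is not None and hmac.compare_digest(expected, presented)

    def revoke(self, device_id):
        # Removing one device leaves every other device's credential untouched.
        self._credentials.pop(device_id, None)
```

In this model, a credential leaked by one device cannot be used to admit another, and revoking it does not force any other device to be reset.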

Join us for a demonstration of Matter at SCTE® Cable-Tec Expo®, which is October 17–19 in Denver, Colorado. Come see us in CableLabs’ booth 2201 to see the future of networked IoT devices and how scanning a QR code can get a device on a network with its own unique credential.

CableLabs Custom Connectivity for MDUs

One of the fastest-growing market segments for broadband providers worldwide is the multi-dwelling unit (MDU) segment. The opportunities here include fast-growing apartment communities, as well as segments such as emergency/temporary housing, low-cost housing, the hospitality and short-term rental markets, and even emergency services.

A common theme across these is the need for an alternate deployment model that allows on-demand service activation and life-cycle management, as well as custom connectivity to various devices. The traditional deployment model of installing customer premises equipment (CPE) on a per-subscriber and/or per-unit basis has hindered operators in delivering services to these segments in a cost-effective manner.

The CableLabs Custom Connectivity architecture is designed to address these constraints by providing dynamic, on-demand subscription activation and device-level management to consumers across the operator’s footprint — without the need to deploy a CPE. The architecture leverages the security controls and mechanisms designed within the CableLabs Micronets technology to provide dynamic, micro-segmentation-based subscription delivery where a subscriber’s devices can connect to their “home subscription” from anywhere on the network and across different access technologies (Wi-Fi, cellular, etc.).

Additionally, it provides consistent operational interfaces for device authentication and service provisioning, as well as billing and subscription management interfaces to enable on-the-fly subscription activation and management.

Safer Networks, Empowered Users

The importance of proactive cybersecurity measures can’t be overstated, and these cutting-edge technologies are proof of CableLabs’ ongoing commitment to enhancing network security. These innovations not only make our networks safer, but they also empower users to take charge of their own online security.

By staying at the forefront of cybersecurity advancements, CableLabs continues to ensure we can all navigate the digital world with greater confidence and peace of mind.

Security

Practical Considerations for Post-Quantum Cryptography Deployment

It’s the year 2031, and the pandemic is in the past. While Dave drinks his morning coffee and reads the news, a headline catches his attention. A large quantum computer is finally operational! Suddenly, Dave’s mind is racing. After a few seconds, as his heartbeat slows, he looks up into the mirror and proudly says, “Yes, we’re ready.” What you don’t know about Dave is that he’s been working for the past 10 years to make sure that all aspects of our broadband communications and access networks remain secure and protected. Besides searching for new quantum-resistant algorithms, Dave has been focusing on the practical aspects of their deployment and addressing their impact on the broadband industry.

Here in 2021, the broadband industry needs to start traveling the same path that Dave will have navigated 10 years from now. We need to make sure we remove the roadblocks ahead of time so that we can lay the groundwork for the adoption of new security tools like post-quantum (PQ) cryptography.

The Post-Quantum Cryptography Landscape

Although NIST is still finalizing its standardization process for PQ cryptography, there are interesting trends and practical long-term considerations for PQ deployment and the broadband industry that we can already infer.

Most of the algorithms that are still present in the final round of the algorithm competition are based on mathematical constructs called lattices, which, in practice, are collections of equally spaced vectors or points. Lattice-based cryptography security properties are rooted in the difficulty of solving certain topological problems for which there is not an efficient algorithm (even for a quantum computer), such as the Shortest Vector Problem (SVP) or the Closest Vector Problem (CVP). Algorithms like Falcon or Dilithium are based on lattices and produce the smallest authentication traces overall (i.e., signatures range from 700 bytes to 3,300 bytes).

Another class of algorithms to keep an eye on is based on isogenies. These algorithms use a different structure than lattices and have been proposed for key exchange. These new key-exchange algorithms, known as Key Encapsulation Mechanisms (KEMs), leverage morphisms (or isogenies) among elliptic curves to provide “Diffie-Hellman–like” key exchange properties to implement Perfect Forward Secrecy. Isogeny-based encryption uses the shortest keys in the PQ algorithm landscape but is computationally very heavy.

Besides these two classes of algorithms, we should keep hash-based signature schemes in mind as a possible alternative. Specifically, they provide proven security at the expense of very large cryptographic signatures (public keys are extremely small) that hinder, at the moment, their adoption. A well-known hash-based algorithm that will probably be re-included in the NIST standardization process is SPHINCS+.

DOCSIS® Protocol, DOCSIS PKI and PQ Deployment

Now that you understand the available options to consider for your next-generation crypto infrastructure, it’s time to look at how these new algorithms impact the broadband environment. In fact, although the DOCSIS protocol has been using digital certificates and public-key cryptography since its inception, the broadband ecosystem relies on the RSA algorithm only—and that algorithm has very different characteristics than the PQ algorithms in consideration today.

The good news is that, from a security perspective, replacing RSA requires only minimal upgrades in the latest version of the DOCSIS protocol (i.e., DOCSIS 4.0) when compared with previous versions. Specifically, DOCSIS 4.0 removes the dependency on the RSA algorithm for key exchange and leverages a standard signature format, the Cryptographic Message Syntax (CMS), to deliver signatures. CMS is already scheduled to be upgraded to provide standard support for PQ algorithms as soon as the algorithm standardization process ends. In DOCSIS 1.0–3.1, because of the dependency on the RSA algorithm for key exchange, the required protocol changes might be more extensive and employ symmetric keys, in addition to RSA keys, to deliver secure authentications.

The size of the new algorithms is another important aspect of deployment. Although the lattice-based and isogenies-based algorithms are quite efficient for the sizes of authenticated (signature) or encrypted (key-exchange) data, they’re still an order of magnitude (or more) larger than what we’re used to today.

Therefore, the broadband industry needs to focus first on the impact of cryptography on the size of authentication and authorization messages. In the DOCSIS protocol, the Baseline Privacy Key Management (BPKM) messages are used, at Layer 2, to transfer authentication information between the cable modem and its termination system. Fortunately, because BPKM messages can carry data of any size via fragmentation, we don’t envision the need to update or modify the structure of Layer 2 authentication messages to accommodate the new size of crypto.
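The following Python sketch shows, in general terms, how fragmentation lets a fixed message structure carry payloads of any size. It is not the BPKM wire format: the `(index, total, chunk)` layout and the 1,000-byte fragment size are assumptions made for illustration. The 3,300-byte payload corresponds to the upper end of the lattice signature sizes mentioned earlier, and 256 bytes is the size of an RSA-2048 signature.

```python
def fragment(payload, max_fragment):
    """Split a payload into (index, total, chunk) fragments of at most max_fragment bytes."""
    if max_fragment <= 0:
        raise ValueError("max_fragment must be positive")
    chunks = [payload[i:i + max_fragment] for i in range(0, len(payload), max_fragment)]
    if not chunks:
        chunks = [b""]  # an empty payload still travels as one fragment
    total = len(chunks)
    return [(index, total, chunk) for index, chunk in enumerate(chunks)]

def reassemble(fragments):
    """Rebuild the payload, tolerating out-of-order delivery but not missing fragments."""
    ordered = sorted(fragments, key=lambda item: item[0])
    total = ordered[0][1]
    if [item[0] for item in ordered] != list(range(total)):
        raise ValueError("missing or duplicate fragment")
    return b"".join(item[2] for item in ordered)

pq_signature = bytes(3300)   # stand-in for a large post-quantum signature
rsa_signature = bytes(256)   # stand-in for an RSA-2048 signature
```

With these assumed sizes, the larger signature simply occupies more fragments (four instead of one), and the message structure itself does not change.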

Somewhat connected to the size of the new crypto are the considerations related to algorithm performance. PQ algorithms, unlike RSA and ECDSA, are computationally very heavy and therefore might pose additional engineering hurdles when designing the hardware to support them. For end-entity devices such as cable modems and optical network units, there are various options to consider. One option, for example, is to look at the integration of modern microcontrollers that can offload computation and provide isolated environments in which algorithms can be securely executed. Another approach is to leverage trusted execution environments already available in many edge devices’ central processing units (CPUs), without the need to update today’s hardware architectures. On core devices, the added CPU load, when compared with the very fast RSA verifications, might require additional resources. This is an active area of investigation.

The final set of considerations is related to algorithm deployment models and certificate chain validation. Specifically, because the current implementation paradigm for PQ algorithms required by NIST doesn’t use the hash-and-sign paradigm (it directly signs the data without hashing it first), there are some important considerations to make. Although this approach removes the security dependency on the hashing algorithm, it also introduces a subtle but important performance hit: the data to be authenticated or signed (i.e., when a device is trying to authenticate to the network) must be processed directly by the algorithm. This might require large data buses to carry the data to the MCU or to transition through the trusted execution environment on the CPU. Performance bottlenecks generated by the adopted signing mechanism have already been observed, and further investigations are needed to better understand the real impact on deployments.

For example, when signing with the “hash-and-sign” paradigm, the signing part of the operation on a 1TB document or 1KB document takes the same time (because you’re always signing the hash that’s only a few bytes in length). In comparison, when using the new paradigm (not possible with algorithms like RSA), signing times can differ wildly depending on the size of the data you’re signing. This problem is even more evident when addressing the costs associated with the generation and signing of hundreds of millions of certificates via this new approach. In other words, the new paradigm, if adopted, could potentially impact certificate providers and increase the costs associated with the signing of large quantities of certificates.
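The difference between the two paradigms comes down to how many bytes are handed to the signing primitive. The short Python sketch below shows only that input size and performs no actual signing. The function name `signer_input` and the choice of SHA-256 are assumptions made for this example.

```python
import hashlib

def signer_input(document, hash_first):
    """Return the bytes that would be handed to the signing primitive.

    hash_first=True models hash-and-sign: only a fixed-size digest is signed.
    hash_first=False models direct signing: the whole document is processed.
    """
    if hash_first:
        return hashlib.sha256(document).digest()
    return document

small_document = b"x" * 1024            # 1 KB
large_document = b"x" * (1024 * 1024)   # 1 MB

# Hash-and-sign: the signer sees 32 bytes in both cases.
digest_sizes = (len(signer_input(small_document, True)),
                len(signer_input(large_document, True)))

# Direct signing: the signer sees the full document, so the input grows with it.
direct_sizes = (len(signer_input(small_document, False)),
                len(signer_input(large_document, False)))
```

With hash-and-sign, the document still has to be hashed, but that step can run outside the isolated signing environment, so only the digest needs to cross into the MCU or trusted execution environment.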

Available Tools and Projects

Now that you know where and what to look for, how can you start learning more about—and experimenting with—these new algorithms for real-world deployment?

One of the best places to start is the Open Quantum Safe (OQS) project that aims to support the development and prototyping of quantum-resistant cryptography. The OQS project provides two main repositories (open-source and available on GitHub): the base liboqs library, which provides a C implementation of quantum-resistant cryptographic algorithms, and a fork of the OpenSSL library that integrates liboqs and provides a prototype implementation of CableLabs’ Composite Crypto technology.

Although the OQS project is a great tool to start working with these new algorithms, the provided integration with OpenSSL doesn’t support generic signing operations, a limitation that might affect the ability to test the new algorithms in different use cases. To address these limitations and to provide better Composite Crypto support together with a hash-and-sign implementation for PQ algorithms, CableLabs started the integration of the PQ-enabled OpenSSL code with a new PQ-enabled LibPKI (a fork from the original OpenCA’s LibPKI repository) that can be used for building and testing these algorithms for all the aspects of the PKI lifecycle management, from validating the full certificate chain to generating quantum-resistant revocation information (e.g., CRLs and OCSP responses).

Security

A Proposal for a Long-Term Post-Quantum Transitioning Strategy for the Broadband Industry via Composite Crypto and PQPs

The broadband industry has historically relied on public-key cryptography to provide secure and strong authentication across access networks and devices. In our environment, one of the most challenging issues—when it comes to cryptography—is to support devices with different capabilities. Some of these devices may or may not be fully (or even partially) upgradeable. This can be due to software limitations (e.g., firmware or applications cannot be securely updated) or hardware limitations (e.g., crypto accelerators or secure elements).

A Heterogeneous Ecosystem

When researching our transitioning strategy, we realized that—especially for constrained devices—the only option at our disposal was the use of pre-shared keys (PSKs) to allow for post-quantum safe authentications for the various identified use cases.

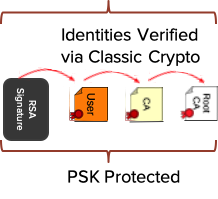

In a nutshell, our proposal combines the use of composite crypto, post-quantum algorithms and timely distributed PSKs to accommodate the coexistence of our main use cases: post-quantum capable devices, post-quantum validation capable devices and classic-only devices. In addition to providing a classification of the various types of devices based on their crypto capabilities to support the transition, we also looked at the use of composite crypto for the next-generation DOCSIS® PKI to allow the delivery of multi-algorithm support for the entire ecosystem: Elliptic Curve Digital Signature Algorithm (ECDSA) as a more efficient alternative to the current RSA algorithm, and a post-quantum algorithm (PQA) for providing long-term quantum-safe authentications. We devised a long-term transitioning strategy for allowing secure authentications in our heterogeneous ecosystem, in which new and old must coexist for a long time.

Three Classes of Devices

The history of broadband networks teaches us that we should expect devices that are deployed in DOCSIS® networks to be very long-lived (i.e., 20 or more years). This translates into the added requirement—for our environment—to identify strategies that allow for the different classes of devices to still perform secure authentications under the quantum threat. To better understand what is needed to protect the different types of devices, we classified them into three distinct categories based on their long-term cryptographic capabilities.

Classic-Only Devices. This class of devices does not provide any crypto-upgrade capability, except for supporting the composite crypto construct. For this class of devices, we envision deploying post-quantum PSKs (PQPs) to devices. These keys are left dormant until the quantum-safe protection is needed for the public-key algorithm.

Specifically, while the identity is still provided via classic signatures and associated certificate chains, the protection against quantum is provided via the pre-deployed PSKs. Various techniques have been identified to make sure these keys are randomly combined and updated while attached to the network: an attacker would be required to have access to the full history of the device traffic to be able to get access to the PSKs. This solution can be deployed today for cable modems and other fielded devices.
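One way to picture why an attacker would need the full traffic history is a key that is updated with fresh material on every attachment. The Python sketch below is a generic HMAC-based ratchet offered as an illustration only. It is not the technique identified by CableLabs, and the function names and nonce values are hypothetical.

```python
import hashlib
import hmac

def evolve_psk(current_psk, network_nonce):
    """Mix fresh network-provided material into the key (hypothetical update rule)."""
    return hmac.new(current_psk, network_nonce, hashlib.sha256).digest()

def replay_history(initial_psk, nonces):
    """Recompute the current key from the initial key and every nonce ever exchanged."""
    psk = initial_psk
    for nonce in nonces:
        psk = evolve_psk(psk, nonce)
    return psk
```

In this sketch, someone who knows the initial key but missed even one update cannot reproduce the current key, because each step depends on all the steps before it.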

Quantum-Validation Capable Devices. This type of device does not provide the possibility to upgrade the secure storage or the private key algorithms, but their crypto libraries can be updated to support selected PQAs and quantum-safe key encapsulation mechanisms (KEMs). Devices with certificates issued under the original RSA infrastructure must still use the deployed PSKs to protect the full authentication chain, whereas devices whose credentials are issued under the new PKI need only protect the link between the signature and the device certificate. For these devices, PSKs can be transferred securely via quantum-resistant KEMs.

Quantum Capable Devices. These devices will have full PQA support (both for authentication and validation) and might support classic algorithms for validation. The use of composite crypto allows for validating the same entities across the quantum-threat hump, especially on the access network side. To validate classic-only devices, Kerberos can be used to distribute pairwise symmetric PSKs for authentication and encryption.
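The three categories can be summarized as a small decision function. The Python sketch below is one reading of the classification above. The two boolean capability flags and the function names are assumptions introduced for illustration, and real devices may need finer-grained criteria.

```python
from enum import Enum

class DeviceClass(Enum):
    CLASSIC_ONLY = "classic-only"
    QUANTUM_VALIDATION_CAPABLE = "quantum-validation capable"
    QUANTUM_CAPABLE = "quantum capable"

def classify(can_update_crypto_library, can_upgrade_private_key_algorithm):
    """Map two hypothetical capability flags to one of the three device classes."""
    if can_update_crypto_library and can_upgrade_private_key_algorithm:
        return DeviceClass.QUANTUM_CAPABLE
    if can_update_crypto_library:
        return DeviceClass.QUANTUM_VALIDATION_CAPABLE
    return DeviceClass.CLASSIC_ONLY

def quantum_protection(device_class):
    """Summarize how each class gets its quantum-safe protection, per the text above."""
    if device_class is DeviceClass.CLASSIC_ONLY:
        return "pre-deployed post-quantum PSKs"
    if device_class is DeviceClass.QUANTUM_VALIDATION_CAPABLE:
        return "PSKs transferred via quantum-resistant KEMs"
    return "native post-quantum authentication and validation"
```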

Composite Crypto Solves a Fundamental Problem

In our proposal for a post-quantum transitioning strategy for the broadband industry, we identified the use of composite crypto and PQPs as the two necessary building blocks for enabling secure authentication for all PKI data (from digital certificates to revocation information).

When composite crypto and PQPs are deployed together, the proposed architecture allows for secure authentication across different classes of devices (i.e., post-quantum and classic), lowers the costs of transitioning to quantum-safe algorithms by requiring the support of a single infrastructure (also required for indirect authentication data like “stapled” OCSP responses), extends the lifetime of classic devices across the quantum hump and does not require protocol changes (even proprietary ones) as the two-certificate solution would require.

Ultimately, the use of composite crypto efficiently solves the fundamental problem of associating both classic and quantum-safe algorithms to a single identity.

To learn more, watch SCTE Tec-Expo 2020’s “Evolving Security Tools: Advances in Identity Management, Cryptography & Secure Processing” recording and participate in KeyFactor’s 2020 Summit.

Security

EAP-CREDS: Enabling Policy-Oriented Credential Management in Access Networks

In our ever-connected world, we want our devices and gadgets to be always available, regardless of where we are or which access network we’re currently using. There’s a wide variety of Internet of Things (IoT) devices out there, and although they differ in myriad ways – power, data collection capabilities, connectivity – we want them all to work seamlessly with our networks. Unfortunately, it can be quite difficult to enjoy our devices without worrying about getting them securely onto our networks (onboarding), providing network credentials (provisioning) and even managing them.

Ideally, the onboarding process should be secure, efficient and flexible enough to meet the needs of various use cases. Because IoT devices typically lack screens and keyboards, provisioning their credentials can be a cumbersome task: Some devices might be capable of using only a username and a password, whereas others might be able to use more complex credentials such as digital certificates or cryptographic tokens. For consumers, secure onboarding should be easy; for enterprises, the process should be automated and flexible so that large numbers of devices can be quickly provisioned with unique credentials.

Ideally, at the end of the process, devices should be provisioned with network-and-device specific credentials, directly managed by the network and unique to the device so that compromises impact that specific device on that specific network. In practice, the creation and installation of new credentials is often a very painful process, especially for devices in the lower segment of the market.

It’s Credentials Management, Not Just Onboarding

After a device is successfully “registered” or “onboarded,” the missing piece, which has so far been ignored, is how to manage these credentials. Even when devices allow credentials to be configured, their deployments tend to be “static” and the credentials rarely get updated. There are two reasons for this: The first reason is the lack of security controls, typically on smaller devices, to set these credentials, and the second, and more relevant, reason is that users rarely remember to update authentication settings. According to a recent article, even in corporate environments, “almost half (47%) of CIOs and IT managers have allowed IoT devices onto their corporate network without changing the default passwords” even though another CISO survey has found that “ ... almost half (47%) of CISOs were worried about a potential breach due to their organization’s failure to secure IoT devices in the workplace.”

At CableLabs we look at the problem from many angles. In particular, we focus on how to provide network credentials management that (a) is flexible, (b) can enforce credentials policies across devices and (c) does not require additional discovery mechanisms.

EAP-CREDS: The Right Tool for the Specific Task

The IEEE Port-Based Network Access Control standard (802.1X) provides the basis for access network architectures to allow entities (e.g., devices, applications) to authenticate to the network even before being granted connectivity. Specifically, the Extensible Authentication Protocol (EAP) provides a communication channel in which various authentication methods can be used to exchange different types of credentials. Once the communication between the client and the server has been secured via a mechanism such as EAP-TLS or EAP-TEAP, our work (EAP-CREDS) uses the “extensible” attribute of EAP to include access network credentials management.

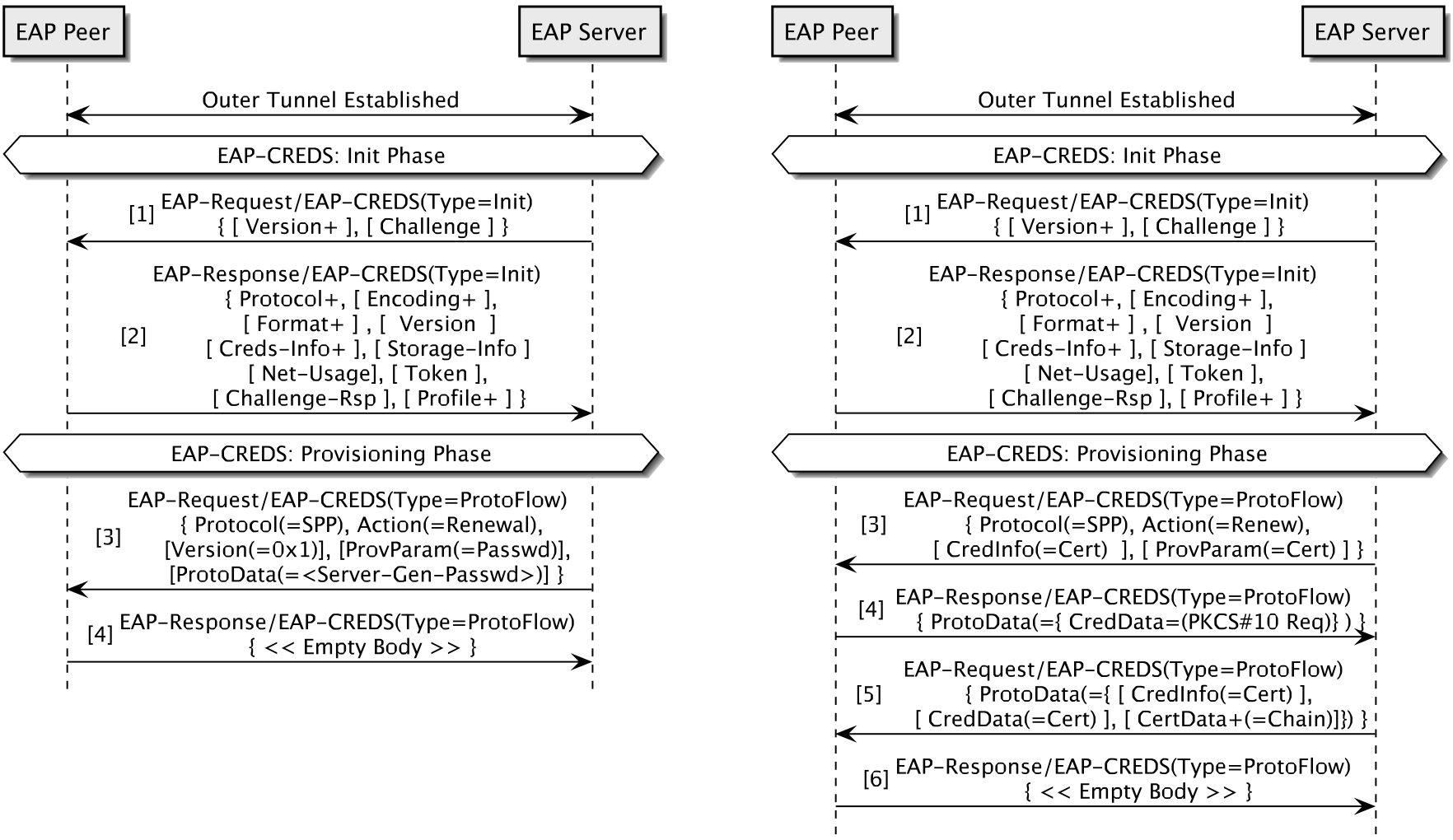

EAP-CREDS implements three separate phases: initialization, provisioning and validation. In the initialization phase, the EAP server asks the device to list all credentials available on the device (for the current network only) and, if needed, initiates the provisioning phase during which a credentials provisioning or renewal protocol supported by both parties is executed. After that phase is complete, the server may initiate the validation phase (to check that the credentials have been successfully received and installed on the device) or declare success and terminate the EAP session.
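The phase ordering described above can be sketched as a small function. This Python example is a simplified model of the flow, not an implementation of EAP-CREDS messages. The `Phase` names and the two boolean inputs are assumptions, and it assumes validation is only considered after a provisioning phase has run.

```python
from enum import Enum, auto

class Phase(Enum):
    INITIALIZATION = auto()
    PROVISIONING = auto()
    VALIDATION = auto()
    SUCCESS = auto()

def run_phases(needs_provisioning, validate_after_provisioning):
    """Return the sequence of phases a session would go through in this model."""
    phases = [Phase.INITIALIZATION]  # server asks the device to list its credentials
    if needs_provisioning:
        phases.append(Phase.PROVISIONING)  # provisioning or renewal protocol runs
        if validate_after_provisioning:
            phases.append(Phase.VALIDATION)  # server checks the new credentials work
    phases.append(Phase.SUCCESS)  # server declares success and ends the EAP session
    return phases
```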

To keep the protocol simple, EAP-CREDS comes with specific requirements for its deployment:

- EAP-CREDS cannot be used as a stand-alone method. It’s required that EAP-CREDS is used as an inner method of any tunneling mechanism that provides secrecy (encryption), server-side authentication and, for devices that already have a set of valid credentials, client-side authentication.

- EAP-CREDS doesn’t mandate (or provide) a specific protocol for provisioning or managing the device credentials because it’s meant only to provide EAP messages for encapsulating existing (standard or vendor-specific) protocols. In its first versions, however, EAP-CREDS also incorporated a Simple Provisioning Protocol (SPP) that supported username/password and X.509 certificate management (server-side driven). The SPP has been extracted from the original EAP-CREDS proposal and will be standardized as a separate protocol.

Figure 2 - Two EAP-CREDS sessions. On the left (Figure 2a), the server provisions a server-generated password (4 msgs). On the right (Figure 2b), the client renews its certificate by generating a PKCS#10 request; the server replies with the newly issued certificate (6 msgs).

When these two requirements are met, EAP-CREDS can manage virtually any type of credentials supported by the device and the server. An example of early adoption of the EAP-CREDS mechanism can be found in the Release 3 of the CBRS Alliance specifications where EAP-CREDS is used to manage non-USIM based credentials (e.g., username/password or X.509 certificates) for authenticating end-user devices (e.g., cell phones). Specifically, CBRS-A uses EAP-CREDS to transport the provisioning messages from the SPP to manage username/password combinations as well as X.509 certificates. The combination of EAP-CREDS and SPP provides an efficient way to manage network credentials.

SPP and EAP-CREDS: Flexibility and Efficiency

To understand the specific type of messages implemented in EAP-CREDS and SPP, let’s look at Figure 2a which shows a typical exchange between an already registered IoT device and a business network.

In this case, after successfully authenticating both sides of the communication, the server initiates EAP-CREDS and uses SPP to deliver a new password. The total number of messages exchanged in this case is between four (when server-side generation is used) and six (when co-generation between client and server is used). Figure 2b provides the same use-case for X.509 certificates where co-generation is used.

One of the interesting characteristics of EAP-CREDS and SPP is their flexibility and ability to easily accommodate solutions that, today, need to go through more complex processes (e.g., OSU registration). For instance, SPP can also be used to register existing credentials in two ways. Besides using an authorization token during the initialization phase (i.e., any kind of unique identifier, whether a signed token or a device certificate), devices can also register their existing credentials (e.g., their device certificate) for network authentication.

Policy-Based Credentials Management

As we’ve seen, EAP-CREDS delivers an automatic, policy-driven, cross-device credentials-management system and its use can improve the security of different types of access networks: industrial, business and home.

For the business and industrial environments, EAP-CREDS provides a cross-vendor standard way to automate credentials management for large numbers of devices (not just IoT), thus making sure that (a) no default credentials are used, (b) the credentials that are used are regularly updated and (c) credentials aren’t shared with other (possibly less secure) home environments. For the home environment, EAP-CREDS provides the possibility to make sure that the small IoT devices we’re buying today aren’t easily compromised because of weak and static credentials and provides a complementary tool (for 802.1X-enabled networks only) to consumer-oriented solutions like the Wi-Fi Alliance’s DPP.
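A server-side policy of this kind could be expressed as a simple check that decides whether a device's credential should be renewed. The Python sketch below is illustrative only: the `Credential` fields, the `needs_renewal` function and the 90-day maximum age are hypothetical and do not come from the EAP-CREDS proposal.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Credential:
    device_id: str
    issued_at: datetime
    is_factory_default: bool

def needs_renewal(credential, now, max_age=timedelta(days=90)):
    """Flag default credentials and credentials older than the policy's maximum age."""
    if credential.is_factory_default:
        return True  # policy (a): no default credentials
    return (now - credential.issued_at) > max_age  # policy (b): regular updates
```

A server could run such a check during the initialization phase and start provisioning only for the devices that fail it.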

If you’re interested in further details about EAP-CREDS and credentials management, please feel free to contact us and start something new today!

10G

10G Integrity: The DOCSIS® 4.0 Specification and Its New Authentication and Authorization Framework

One of the pillars of the 10G platform is security. Simplicity, integrity, confidentiality and availability are all different aspects of cable’s 10G security platform. In this work, we want to talk about the integrity (authentication) enhancements that have been developed for the next generation of DOCSIS® networks and how they update the security profiles of cable broadband services.

DOCSIS (Data Over Cable Service Interface Specifications) defines how networks and devices are created to provide broadband for the cable industry and its customers. Specifically, DOCSIS comprises a set of technical documents that are at the core of the cable broadband services. CableLabs, manufacturers for the cable industry, and cable broadband operators continuously collaborate to improve their efficiency, reliability and security.

With regard to security, DOCSIS networks have pioneered the use of public key cryptography on a mass scale: the DOCSIS Public Key Infrastructures (PKIs) are among the largest PKIs in the world, with half a billion active certificates issued and actively used every day around the world.

Below, we give a brief history of DOCSIS security, look into the limitations of the current authorization framework and then describe the security properties introduced with the new version of the authorization (and authentication) framework, which addresses those limitations.

A Journey Through DOCSIS Security

The DOCSIS protocol, which is used in cable’s network to provide connectivity and services to users, has undergone a series of security-related updates in its latest version, DOCSIS 4.0, to help meet the 10G platform requirements.

In the first DOCSIS 1.0 specification, the radio frequency (RF) interface included three security specifications: Security System, Removable Security Module and Baseline Privacy Interface. Combined, the Security System plus the Removable Security Module Specification became Full Security (FS).

Soon after public key cryptography was adopted in the authorization process, the cable industry realized that a secure way to authenticate devices was needed. A DOCSIS PKI was established for DOCSIS 1.1-3.0 devices to provide cable modems with verifiable identities.

With the DOCSIS 3.0 specification, the major security feature was the ability to perform the authentication and encryption earlier in the device registration process, thus providing protection for important configuration and setup data (e.g., the configuration file for the CM or the DHCP traffic) that was otherwise not protected. The new feature, called Early Authorization and Encryption (EAE), allows Baseline Privacy Interface Plus (BPI+) to start even before the device is provisioned with IP connectivity.

The DOCSIS 3.1 specifications created a new Public Key Infrastructure (PKI) to handle the authentication needs for the new class of devices. This new PKI introduced several improvements over the original PKI when it comes to cryptography – a newer set of algorithms and increased key sizes were the major changes over the legacy PKI. The same new PKI that is used today to secure DOCSIS 3.1 devices will also provide the certificates for the newer DOCSIS 4.0 ones.

The DOCSIS 4.0 version of the specification introduces, among its numerous innovations, an improved authentication framework (BPI Plus V2) that addresses the current limitations of BPI Plus and implements new security properties such as full algorithm agility, Perfect Forward Secrecy (PFS), Mutual Message Authentication (MMA or MA) and Downgrade Attacks Protection.

Baseline Privacy Plus V1 and Its Limitations

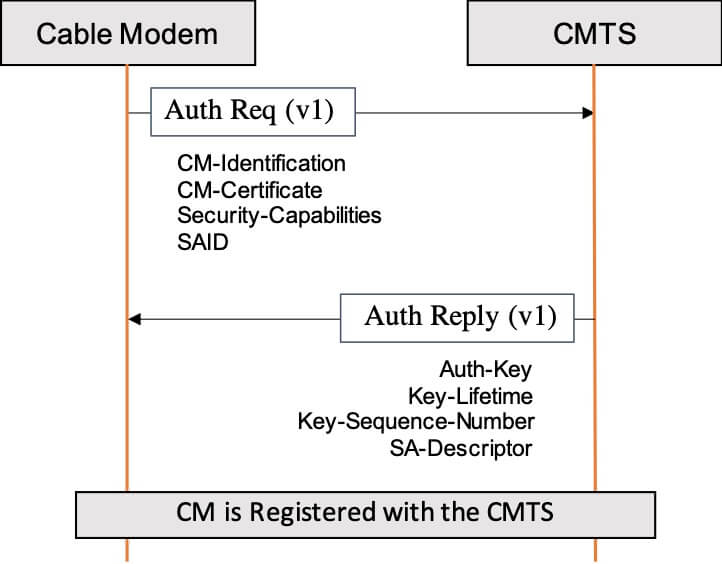

In DOCSIS 1.0-3.1 specifications, when Baseline Privacy Plus (BPI+ V1) is enabled, the CMTS directly authorizes a CM by providing it with an Authorization Key, which is then used to derive all the authorization and encryption key material. These secrets are then used to secure the communication between the CM and the CMTS. In this security model, the CMTS is assumed trusted and its identity is not validated.

Figure 1: BPI Plus Authorization Exchange

The design of BPI+ V1 dates back more than just a few years, and in that time the security and cryptography landscapes have changed drastically, especially with regard to cryptography. When BPI+ was designed, the crypto community was set on the use of the RSA public key algorithm, while today the use of elliptic-curve cryptography and the ECDSA signing algorithm is predominant because of its efficiency, especially when RSA 3072 or larger keys are required.

A limitation of BPI+ V1 is the lack of authentication for the authorization messages. In particular, CMs and CMTS-es are not required to authenticate (i.e., sign) their own messages, making them vulnerable to unauthorized manipulation.

In recent years, there has been a lot of discussion around authentication and how to make sure that the compromise of long-term credentials (e.g., the private key associated with an X.509 certificate) does not expose all of that user’s sessions in the clear (i.e., does not enable the decryption of all recorded sessions by breaking a single key). Because BPI+ V1 directly encrypts the Authorization Key by using the RSA public key that is in the CM’s device certificate, it does not support Perfect Forward Secrecy.
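To make the forward secrecy property more concrete, the following minimal sketch shows an ephemeral key agreement in which both sides contribute fresh, single-use secrets to each session key, so no long-term key ever encrypts the session key directly. It is an illustration under loud assumptions: it uses toy finite-field Diffie-Hellman with a deliberately small prime rather than elliptic curves, the names `ephemeral_keypair`, `session_key` and `run_session` are hypothetical, and it omits the authentication of the exchanged public values that any real protocol needs.

```python
import hashlib
import secrets

# Toy parameters for illustration only: a 127-bit Mersenne prime is far too
# small for real use, and this is plain finite-field Diffie-Hellman, not ECDHE.
P = 2**127 - 1
G = 3


def ephemeral_keypair():
    """Return a fresh (private, public) pair meant to be used for one session."""
    private = secrets.randbelow(P - 3) + 2  # uniform in [2, P - 2]
    return private, pow(G, private, P)


def session_key(own_private, peer_public):
    """Derive a 32-byte symmetric key from the shared Diffie-Hellman secret."""
    shared = pow(peer_public, own_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def run_session():
    """Simulate one exchange between a CM and a CMTS (unauthenticated here)."""
    cm_private, cm_public = ephemeral_keypair()
    cmts_private, cmts_public = ephemeral_keypair()
    cm_key = session_key(cm_private, cmts_public)
    cmts_key = session_key(cmts_private, cm_public)
    assert cm_key == cmts_key  # both ends agree without transmitting the key
    return cm_key  # the ephemeral private values go out of scope here
```

Because the private values are discarded after each call to `run_session`, a later compromise of a device's long-term credential gives an attacker nothing with which to recompute the keys of previously recorded sessions.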

To address these issues, the cable industry worked on a new version of its authorization protocol, namely BPI Plus Version 2. With this update, a protection mechanism was required to prevent downgrade attacks, where attackers force the use of the older, and possibly weaker, version of the protocol. The DOCSIS community therefore introduced the Trust On First Use (TOFU) mechanism.

The New Baseline Privacy Plus V2

The DOCSIS 4.0 specification introduces a new version of the authentication framework, namely Baseline Privacy Plus Version 2, that addresses the limitations of BPI+ V1 by providing support for the identified new security needs. Following is a summary of the new security properties provided by BPI+ V2 and how they address the current limitations:

- Message Authentication. BPI+ V2 Authorization messages are fully authenticated. For CMs this means that they need to digitally sign the Authorization Request messages, thus eliminating the possibility for an attacker to substitute the CM certificate with another one. For CMTS-es, BPI+ V2 requires them to authenticate their own Authorization Reply messages; this change adds an explicit authentication step to the current authorization mechanism. While recognizing the need for deploying mutual message authentication, the DOCSIS 4.0 specification allows for a transition period where devices are still allowed to use BPI+ V1. The main reason for this choice is related to the new requirements imposed on DOCSIS networks, which are now required to procure and renew their DOCSIS credentials when enabling BPI+ V2 (Mutual Authentication).

- Perfect Forward Secrecy. Unlike BPI+ V1, the new authentication framework requires both parties to participate in the derivation of the Authorization Key from authenticated public parameters. In particular, the introduction of Message Authentication on both sides of the communication (i.e., the CM and the CMTS) enables BPI+ V2 to use the Elliptic-Curve Diffie-Hellman Ephemeral (ECDHE) algorithm instead of the CMTS directly generating and encrypting the key for the different CMs. Because of the authentication on the Authorization messages, the use of ECDHE is safe against man-in-the-middle (MITM) attacks.

- Algorithm Agility. As the advancement in classical and quantum computing provides users with incredible computational power at their fingertips, it also provides the same ever-increasing capabilities to malicious users. BPI+ V2 removes the protocol dependencies on specific public-key algorithms that are present in BPI+ V1. By introducing the use of the standard CMS format for message authentication (i.e., signatures) combined with the use of ECDHE, the DOCSIS 4.0 security protocol effectively decouples the public key algorithm used in the X.509 certificates from the key exchange algorithm. This enables the use of new public key algorithms when required for security or operational reasons.

- Downgrade Attacks Protection. A new Trust On First Use (TOFU) mechanism is introduced to provide protection against downgrade attacks – although the principles behind TOFU mechanisms are not new, their use to protect against downgrade attacks is. It leverages the security parameters used during a first successful authorization as a baseline for future ones, unless indicated otherwise. By establishing the minimum required version of the authentication protocol, DOCSIS 4.0 cable modems actively prevent unauthorized use of a weaker version of the DOCSIS authentication framework (BPI+). During the transition period for the adoption of the new version of the protocol, cable operators can allow “planned” downgrades – for example, when a node split occurs or when faulty equipment is replaced and BPI+ V2 is not enabled there. In other words, a successfully validated CMTS can set, on the CM, the allowed minimum version (and other CM-CMTS binding parameters) to be used for subsequent authentications.
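The Trust On First Use behavior described in the last item can be sketched as a small piece of CM-side state. This is only an illustration of the idea, not the logic defined in the DOCSIS 4.0 specification: the class `TofuPolicy`, its methods and the integer version constants are hypothetical names, and the other CM-CMTS binding parameters are left out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical version identifiers, chosen only for this sketch.
BPI_V1, BPI_V2 = 1, 2


@dataclass
class TofuPolicy:
    """Hypothetical CM-side state; None means nothing has been pinned yet."""
    min_version: Optional[int] = None

    def accept(self, offered_version: int) -> bool:
        """Decide whether an authorization at offered_version may proceed."""
        if self.min_version is None:
            return True  # first use: no baseline exists yet
        return offered_version >= self.min_version

    def on_success(self, used_version: int, cmts_authenticated: bool,
                   new_minimum: Optional[int] = None) -> None:
        """Update the pinned baseline after a successful authorization."""
        if self.min_version is None:
            self.min_version = used_version  # trust on first use
        if cmts_authenticated and new_minimum is not None:
            # Only a successfully validated CMTS may change the minimum,
            # which is how a "planned" downgrade would be permitted.
            self.min_version = new_minimum


policy = TofuPolicy()
policy.on_success(BPI_V2, cmts_authenticated=True)   # first authorization pins V2
downgrade_allowed = policy.accept(BPI_V1)            # False: unplanned downgrade
policy.on_success(BPI_V2, cmts_authenticated=True, new_minimum=BPI_V1)
planned_allowed = policy.accept(BPI_V1)              # True: operator allowed it
```

The design choice worth noting is that the minimum can only be changed through an authenticated exchange, so an attacker who merely offers the older version cannot lower the baseline.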

Future Work

In this work, we provided a short history of DOCSIS security and reviewed the limitations of the current authorization framework. As CMTS functionality moves into the untrusted domain, these limitations could potentially translate into security threats, especially in new distributed architectures like Remote PHY. Although in their final stage of approval, the proposed changes to the DOCSIS 4.0 specification are currently being addressed in the Security Working Group.

Member organizations and DOCSIS equipment vendors are always encouraged to participate in our DOCSIS working groups – if you qualify, please contact us and participate in our weekly DOCSIS 4.0 security meeting where these, and other security-related topics, are addressed.

If you are interested in discovering even more details about DOCSIS 4.0 and 10G security, please feel free to contact us to receive more in-depth and updated information.